Table of Contents

Introduction

Part I. Page Content

Part II. User Experience

Part III. Content Publishing

Part IV. Content Distribution

Appendices

Foreword

The open web is an amazingly complex, evolving network of technologies. Entire industries and careers are built on the web and depend on its vibrant ecosystem to succeed. As critical as the web is, understanding how it’s doing has been surprisingly elusive. Since 2010, the mission of the HTTP Archive project has been to track how the web is built, and it’s been doing an amazing job of it. However, there has been one gap that has been especially challenging to close: bringing meaning to the data that the HTTP Archive project has been collecting and enabling the community to easily understand how the web is performing. That’s where the Web Almanac comes in.

The mission of the Web Almanac is to take the treasure trove of insights that would otherwise be accessible only to intrepid data miners, and package it up in a way that’s easy to understand. This is made possible with the help of industry experts who can make sense of the data and tell us what it means. Each of the 20 chapters in the Web Almanac focuses on a specific aspect of the web, and each one has been authored and peer reviewed by experts in their field. The strength of the Web Almanac flows directly from the expertise of the people who write it.

Many of the findings in the Web Almanac are worthy of celebration, but it’s also an important reminder of the work still required to deliver high-quality user experiences. The data-driven analyses in each chapter are a form of accountability we all share for developing a better web. It’s not about shaming those that are getting it wrong, but about shining a guiding light on the path of best practices so there is a clear, right way to do things. With the continued help of the web community, we hope to make this an annual tradition, so each year we can track our progress and make course corrections as needed.

There is so much to learn in this report, so start exploring and share your takeaways with the community so we can collectively advance our understanding of the state of the web.

— Rick Viscomi, Web Almanac Editor-in-Chief

JavaScript

Introduction

JavaScript is a scripting language that makes it possible to build interactive and complex experiences on the web. This includes responding to user interactions, updating dynamic content on a page, and so forth. Anything involving how a web page should behave when an event occurs is what JavaScript is used for.

The language specification itself, along with many community-built libraries and frameworks used by developers around the world, has changed and evolved ever since the language was created in 1995. JavaScript implementations and interpreters have also continued to progress, making the language usable in many environments, not only web browsers.

The [HTTP Archive](https://httparchive.org/) crawls [millions of pages](https://httparchive.org/reports/state-of-the-web#numUrls) every month and runs them through a private instance of [WebPageTest](https://webpagetest.org/) to store key information of every page. (You can learn more about this in our methodology). In the context of JavaScript, HTTP Archive provides extensive information on the usage of the language for the entire web. This chapter consolidates and analyzes many of these trends.

How much JavaScript do we use?

JavaScript is the most costly resource we send to browsers, since it has to be downloaded, parsed, compiled, and finally executed. Although browsers have significantly decreased the time it takes to parse and compile scripts, [download and execution have become the most expensive stages](https://v8.dev/blog/cost-of-javascript-2019) when JavaScript is processed by a web page.

Sending smaller JavaScript bundles to the browser is the best way to reduce download times, and in turn improve page performance. But how much JavaScript do we really use?

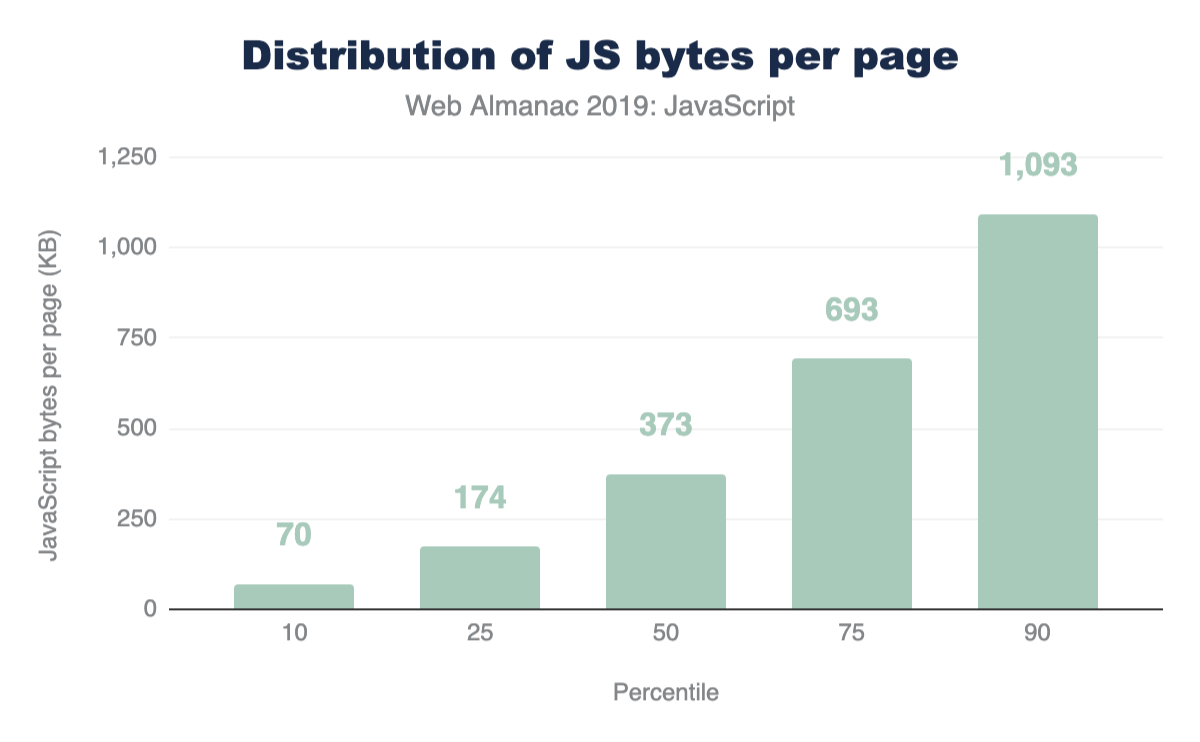

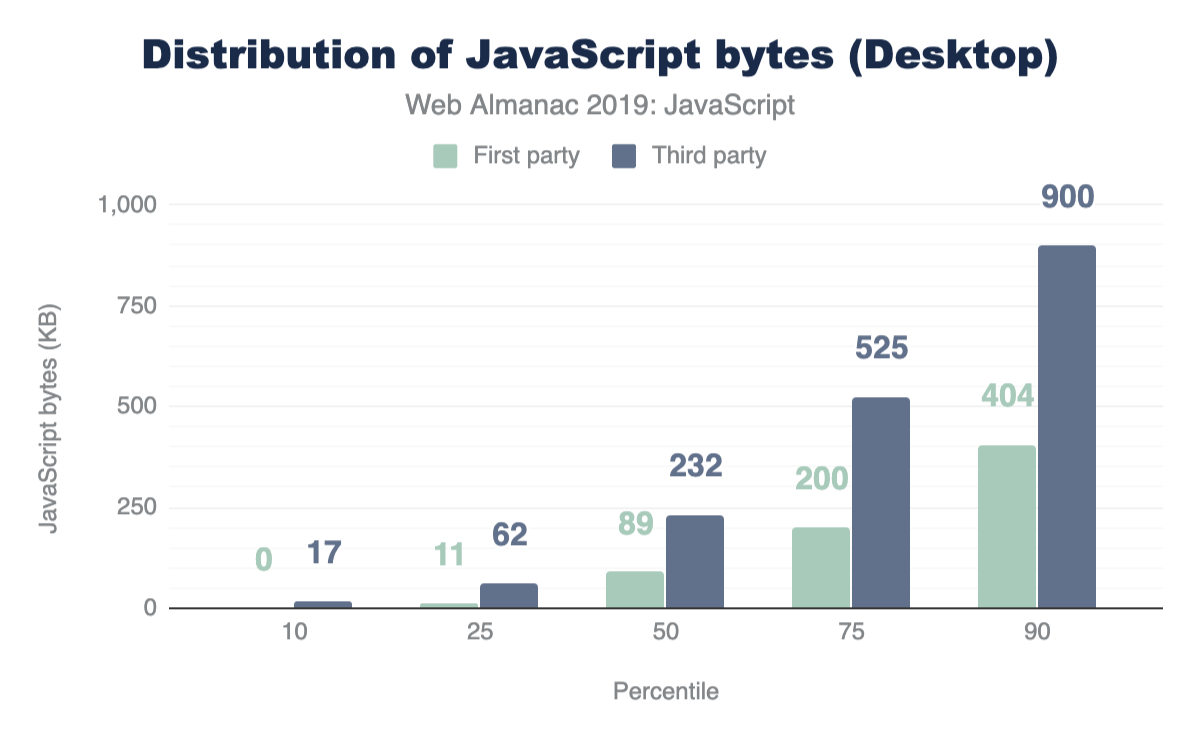

Figure 1.1 above shows that we use 373 KB of JavaScript at the 50th percentile, or median. In other words, 50% of all sites ship more than this much JavaScript to their users.
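To make the idea of a percentile concrete, here is a minimal sketch of reading one off a list of per-page JavaScript sizes. The sample values are made up for illustration and are not HTTP Archive data, and the nearest-rank method is just one of several common percentile definitions, chosen here as an assumption.

```javascript
// Nearest-rank percentile: the smallest sample such that at least p% of
// all samples are less than or equal to it.
function percentile(values, p) {
  if (values.length === 0) throw new RangeError('values must not be empty');
  const sorted = [...values].sort((a, b) => a - b);
  const rank = Math.max(1, Math.ceil((p / 100) * sorted.length));
  return sorted[rank - 1];
}

// Hypothetical JavaScript transfer sizes (in KB) for seven pages.
const scriptKilobytes = [810, 45, 373, 1400, 120, 540, 210];

console.log(percentile(scriptKilobytes, 50)); // 373
console.log(percentile(scriptKilobytes, 90)); // 1400
```

In this toy sample, half of the remaining pages ship less than the median and half ship more, which is the sense in which the 50th percentile is used throughout this chapter.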

Looking at these numbers, it’s only natural to wonder if this is too much JavaScript. However, in terms of page performance, the impact depends entirely on the network connections and devices used. Which brings us to our next question: how much JavaScript do we ship when we compare mobile and desktop clients?

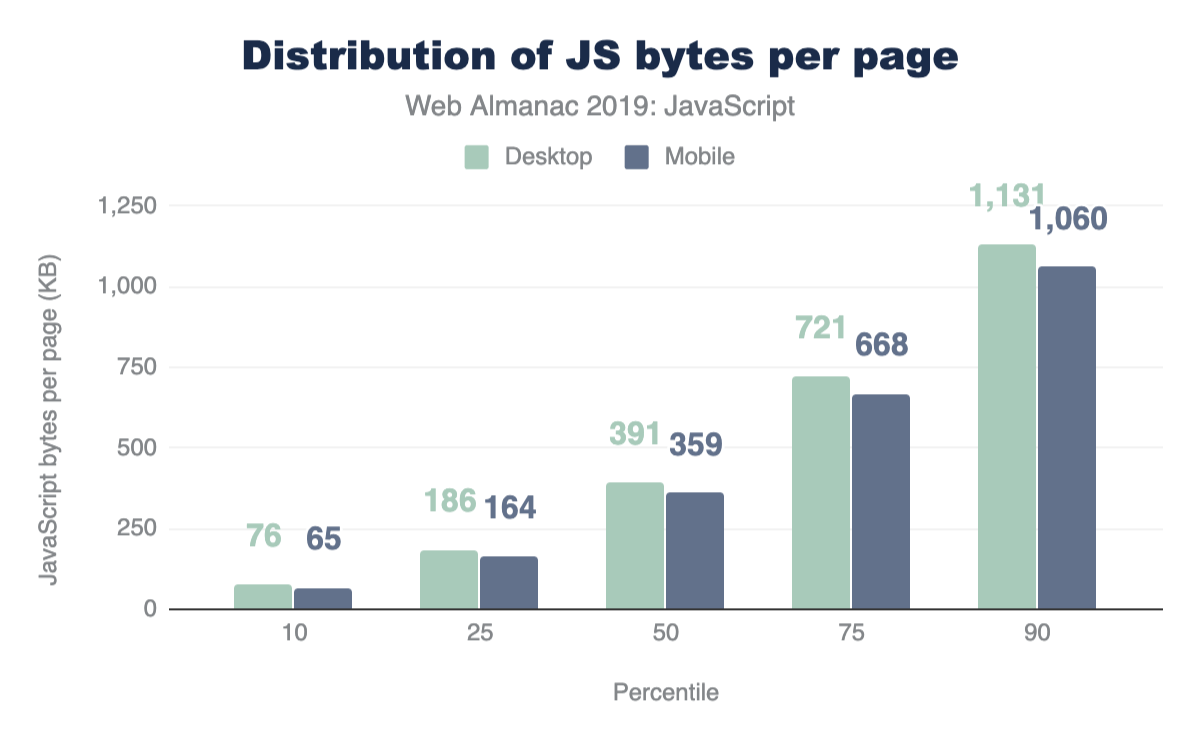

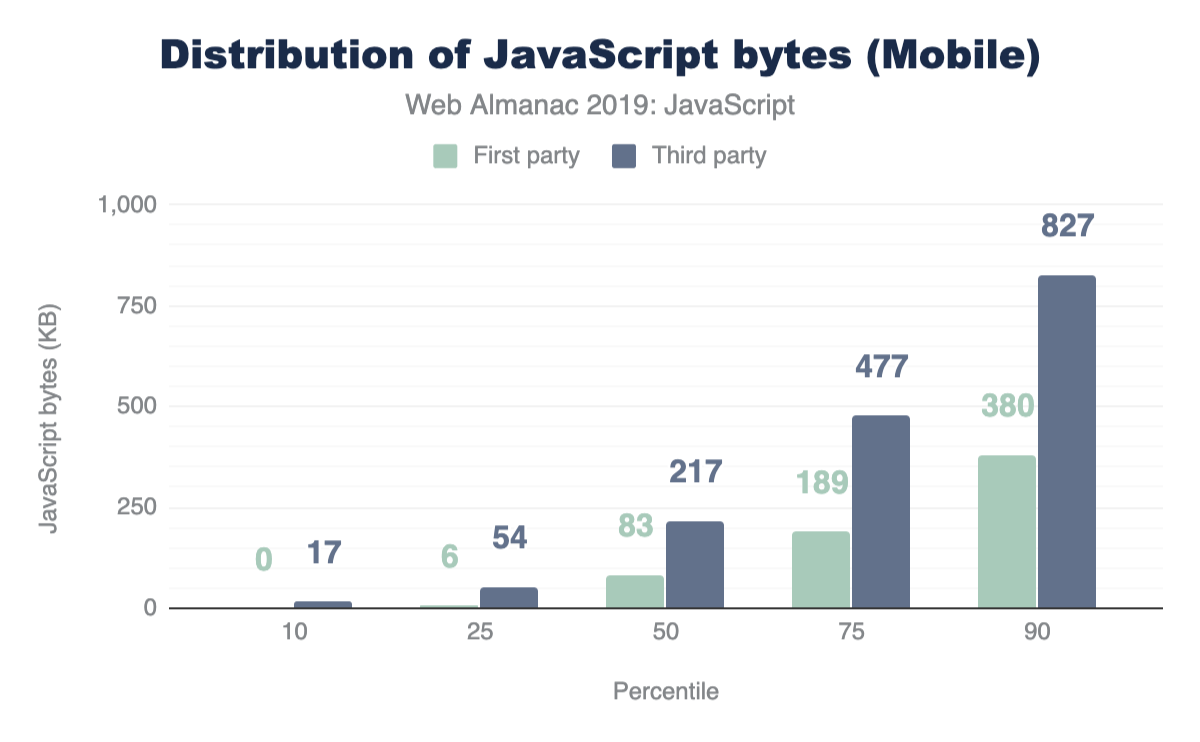

At every percentile, we’re sending slightly more JavaScript to desktop devices than we are to mobile.

Processing time

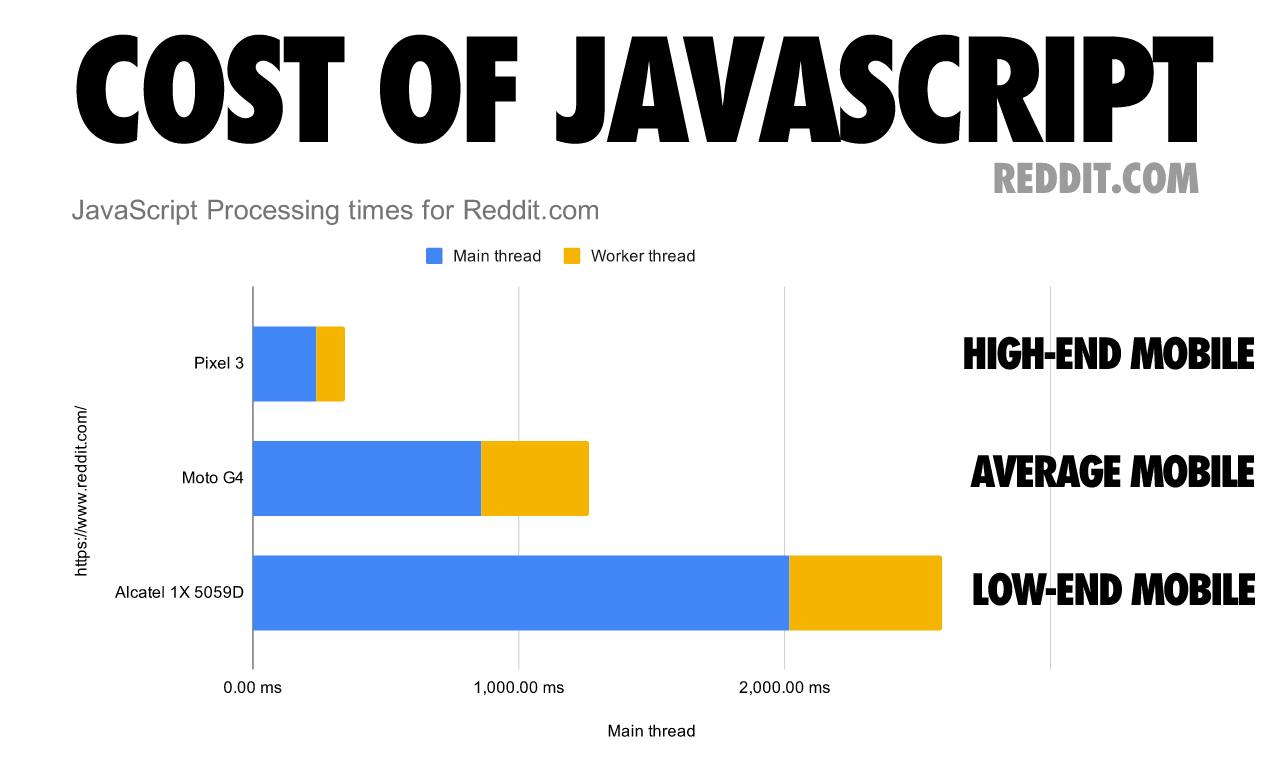

After being parsed and compiled, JavaScript fetched by the browser needs to be processed (or executed) before it can be utilized. Devices vary, and their computing power can significantly affect how fast JavaScript can be processed on a page. What are the current processing times on the web?

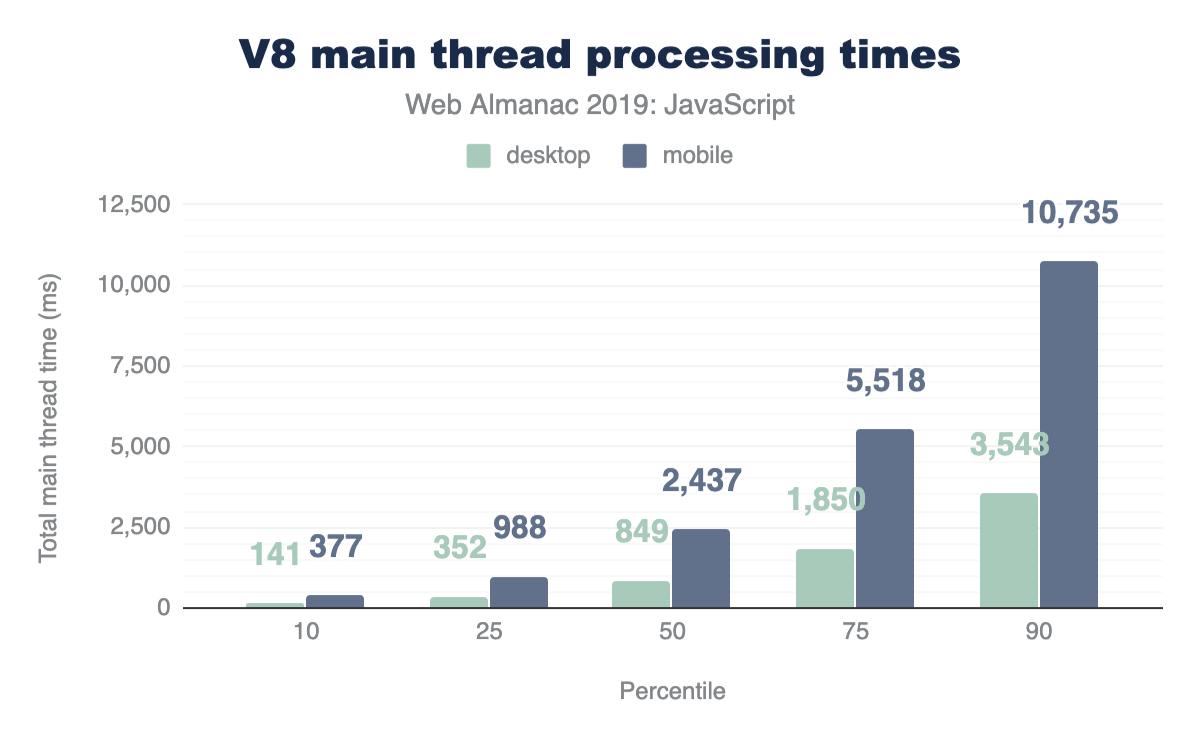

We can get an idea by analyzing main thread processing times for V8 at different percentiles:

At every percentile, processing times are longer for mobile web pages than on desktop. The median total main thread time on desktop is 849 ms, while mobile is at a larger number: 2,437 ms.

Although this data shows how much longer it can take for a mobile device to process JavaScript compared to a more powerful desktop machine, mobile devices also vary in terms of computing power. The following chart shows how processing times on a single web page can vary significantly depending on the mobile device class.

Number of requests

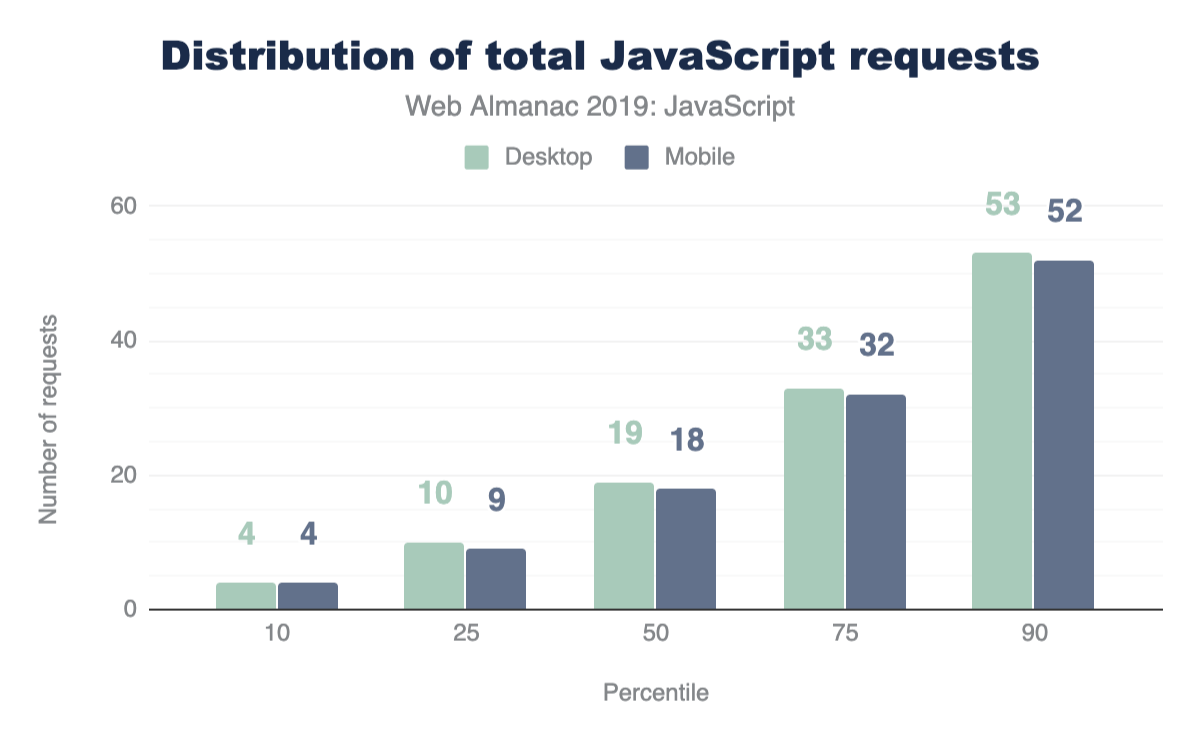

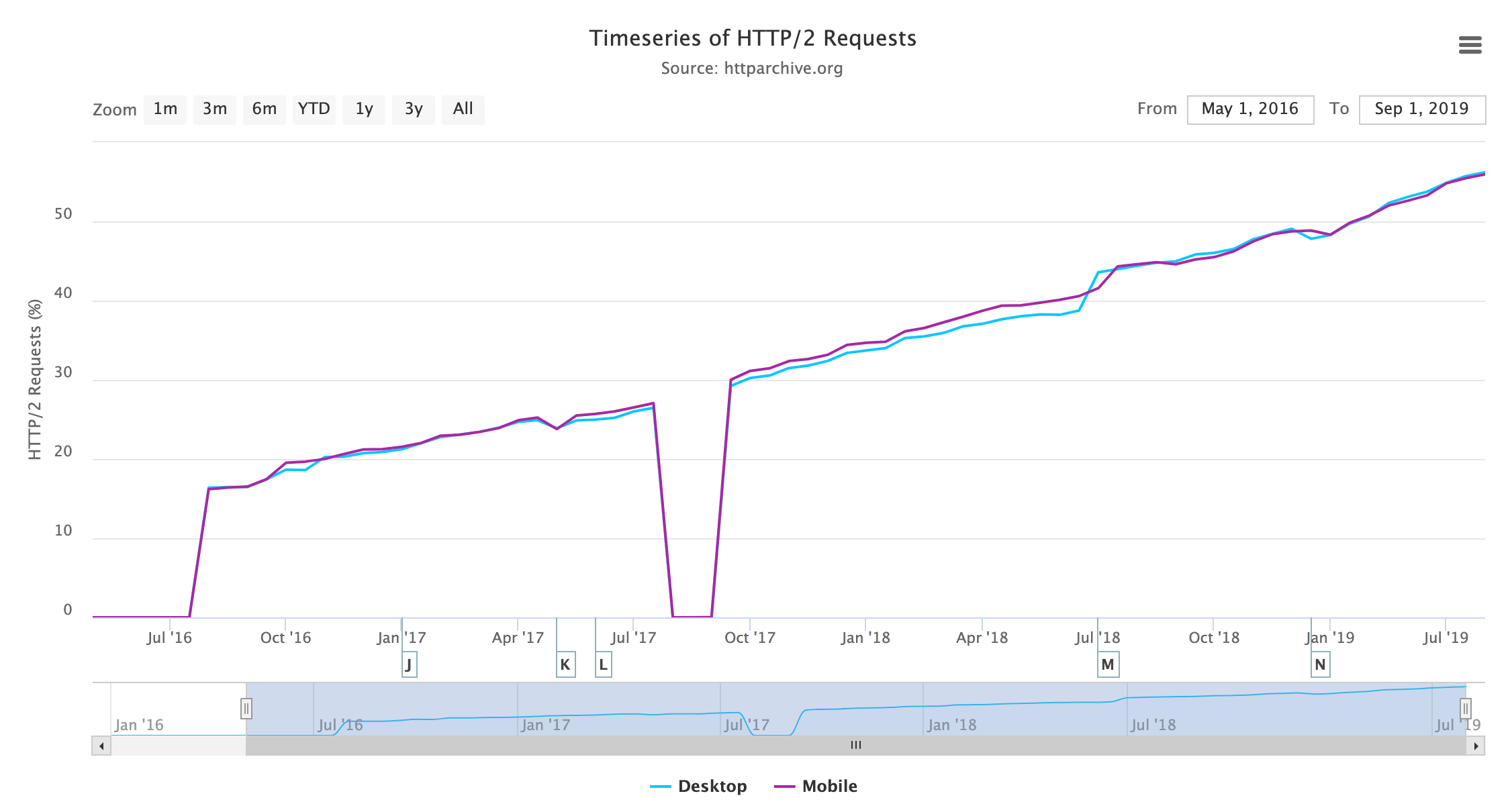

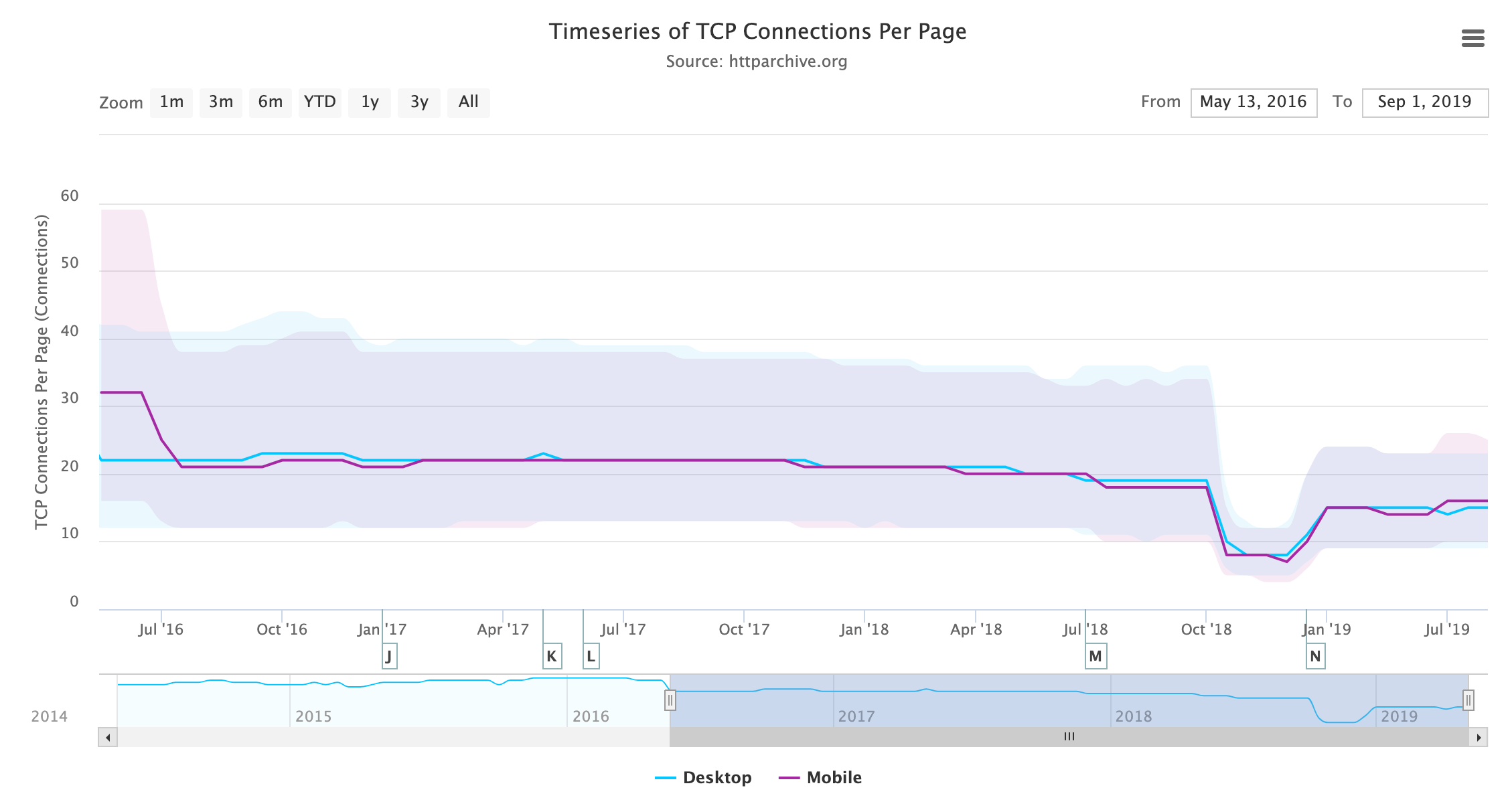

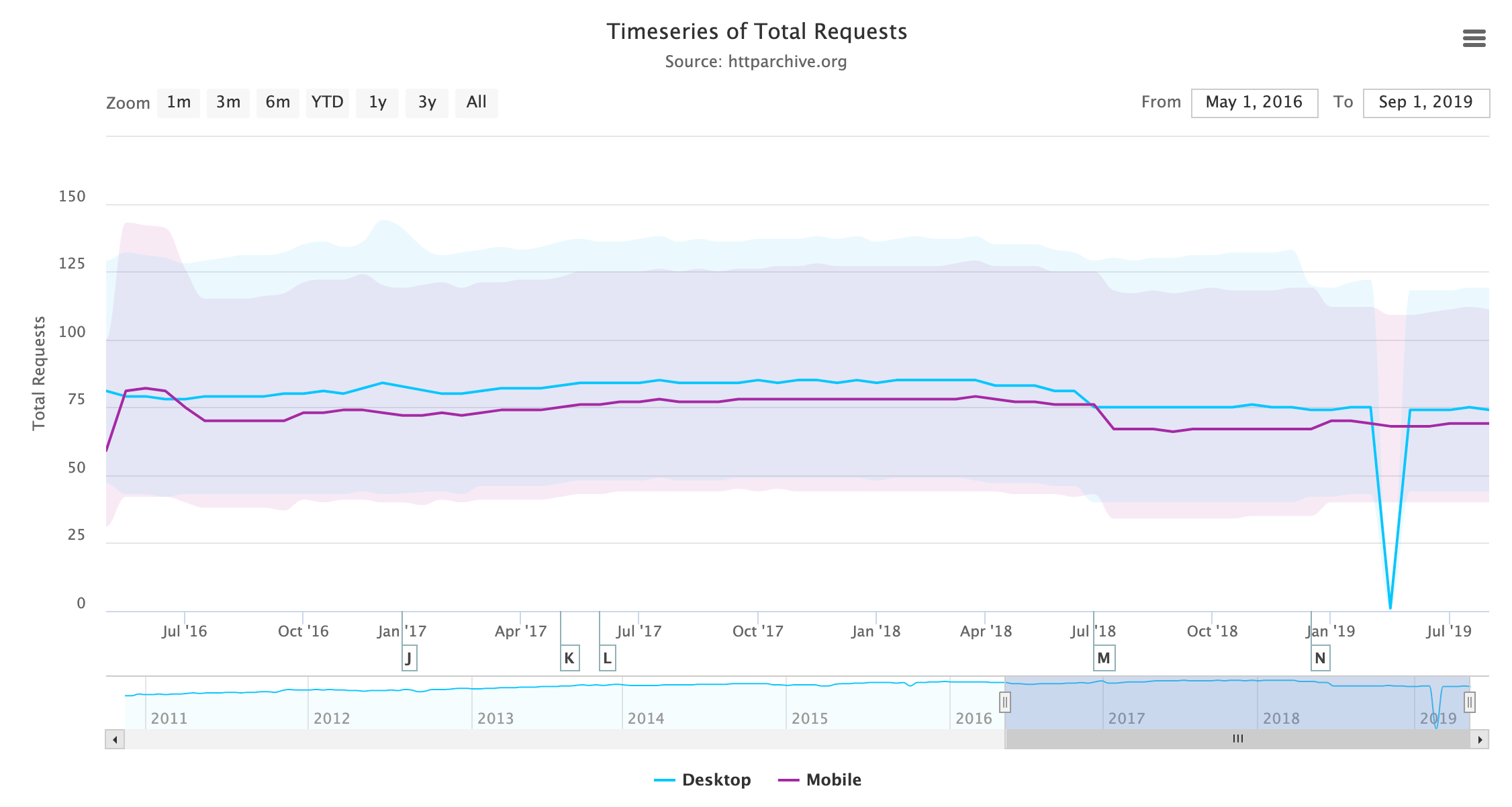

One avenue worth exploring when trying to analyze the amount of JavaScript used by web pages is the number of requests shipped. With HTTP/2, sending multiple smaller chunks can improve page load over sending a larger, monolithic bundle. If we also break it down by device client, how many requests are being fetched?

At the median, 19 requests are sent for desktop and 18 for mobile.

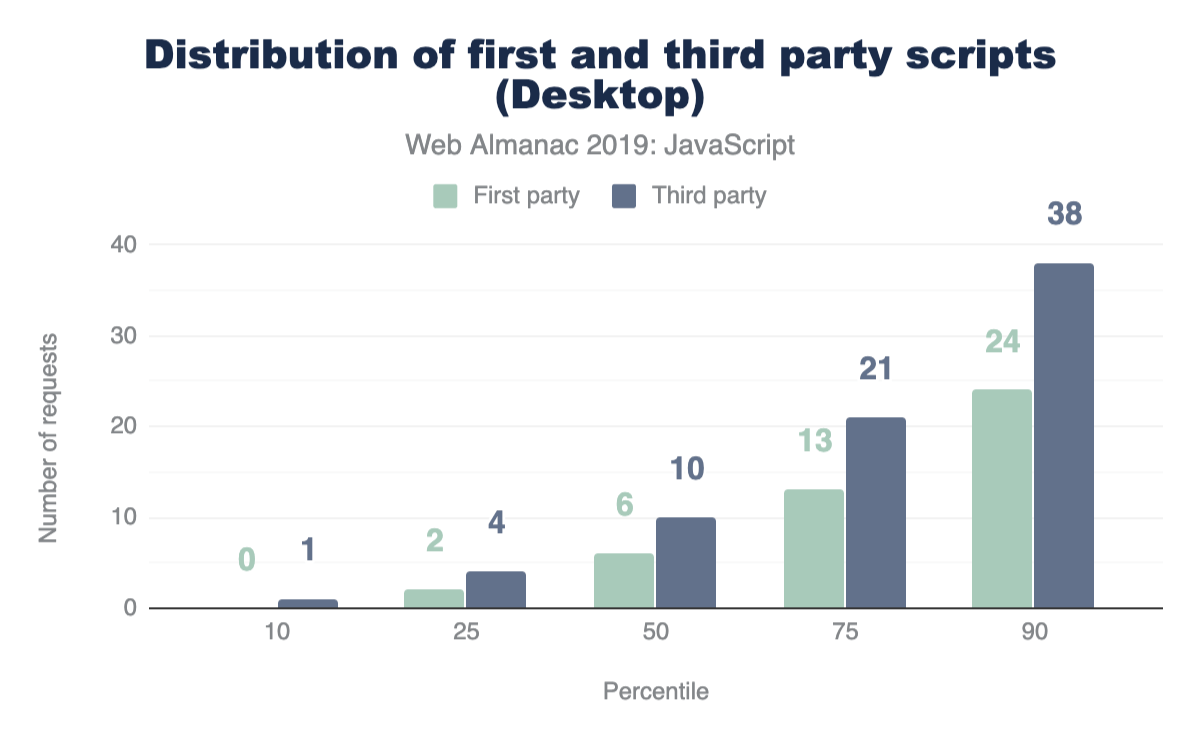

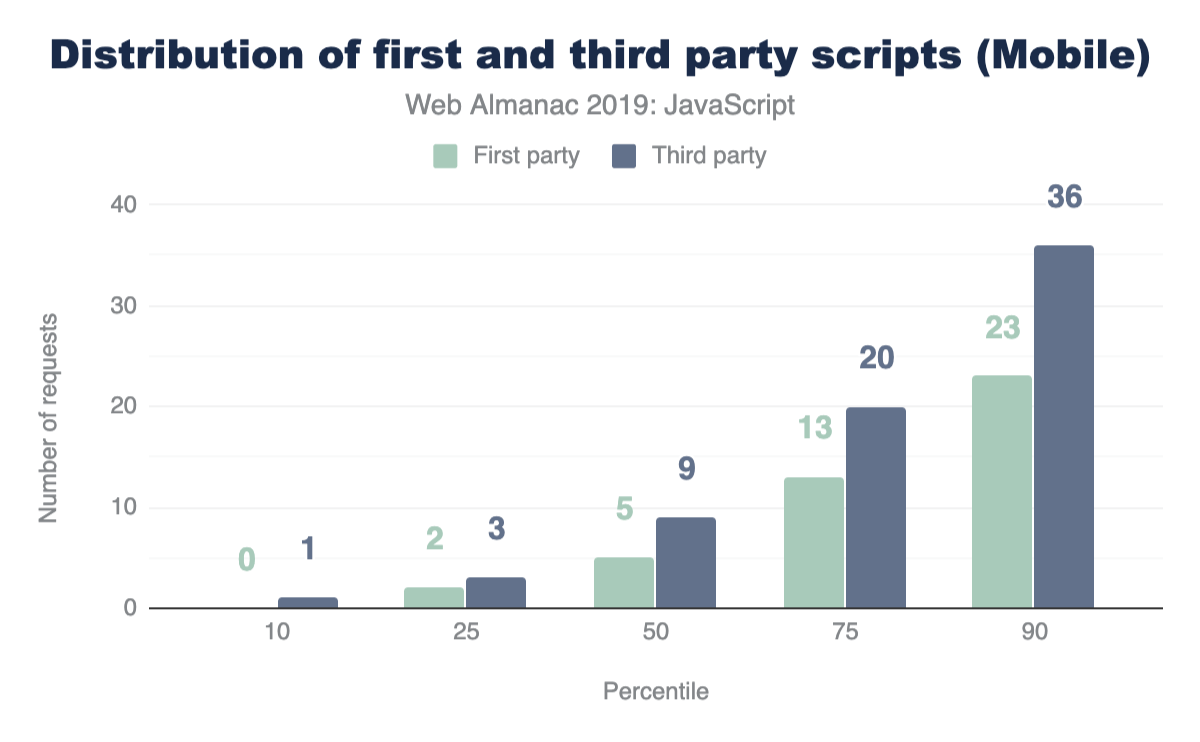

First-party vs. third-party

The results analyzed so far considered the total size and number of requests. On a majority of websites, however, a significant portion of the JavaScript code fetched and used comes from third-party sources.

Third-party JavaScript can come from any external, third-party source. Ads, analytics and social media embeds are all common use-cases for fetching third-party scripts. So naturally, this brings us to our next question: how many requests sent are third-party instead of first-party?

For both mobile and desktop clients, more third-party requests are sent than first-party at every percentile. If this seems surprising, let’s find out how much actual code shipped comes from third-party vendors.

At the median, 89% more third-party code is used than first-party code authored by the developer for both mobile and desktop. This clearly shows that third-party code can be one of the biggest contributors to bloat. For more information on the impact of third parties, refer to the “Third Parties” chapter.
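As an illustration of the first-party versus third-party split, the sketch below buckets script requests by hostname and compares the totals. The hostname rule is a simplifying assumption (a careful analysis would compare registrable domains), the function names are hypothetical, and the request list is invented so that the arithmetic works out to the same "89% more" relationship described above.

```javascript
// Treat a request as first-party when its hostname equals the page's
// hostname or is a subdomain of it. This is an assumption for the sketch.
function isFirstParty(pageUrl, requestUrl) {
  const pageHost = new URL(pageUrl).hostname;
  const requestHost = new URL(requestUrl).hostname;
  return requestHost === pageHost || requestHost.endsWith('.' + pageHost);
}

// Sum request counts and bytes per bucket.
function summarizeScripts(pageUrl, requests) {
  const totals = {
    firstParty: { count: 0, bytes: 0 },
    thirdParty: { count: 0, bytes: 0 },
  };
  for (const { url, bytes } of requests) {
    const bucket = isFirstParty(pageUrl, url) ? totals.firstParty : totals.thirdParty;
    bucket.count += 1;
    bucket.bytes += bytes;
  }
  return totals;
}

// Invented requests for a hypothetical page.
const summary = summarizeScripts('https://example.com/', [
  { url: 'https://example.com/app.js', bytes: 100000 },
  { url: 'https://static.example.com/vendor.js', bytes: 50000 },
  { url: 'https://analytics.example.net/tag.js', bytes: 189000 },
  { url: 'https://ads.example.org/loader.js', bytes: 94500 },
]);

// "X% more third-party code" means (third - first) / first.
const percentMore = Math.round(
  ((summary.thirdParty.bytes - summary.firstParty.bytes) / summary.firstParty.bytes) * 100
);
console.log(summary, percentMore); // percentMore is 89
```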

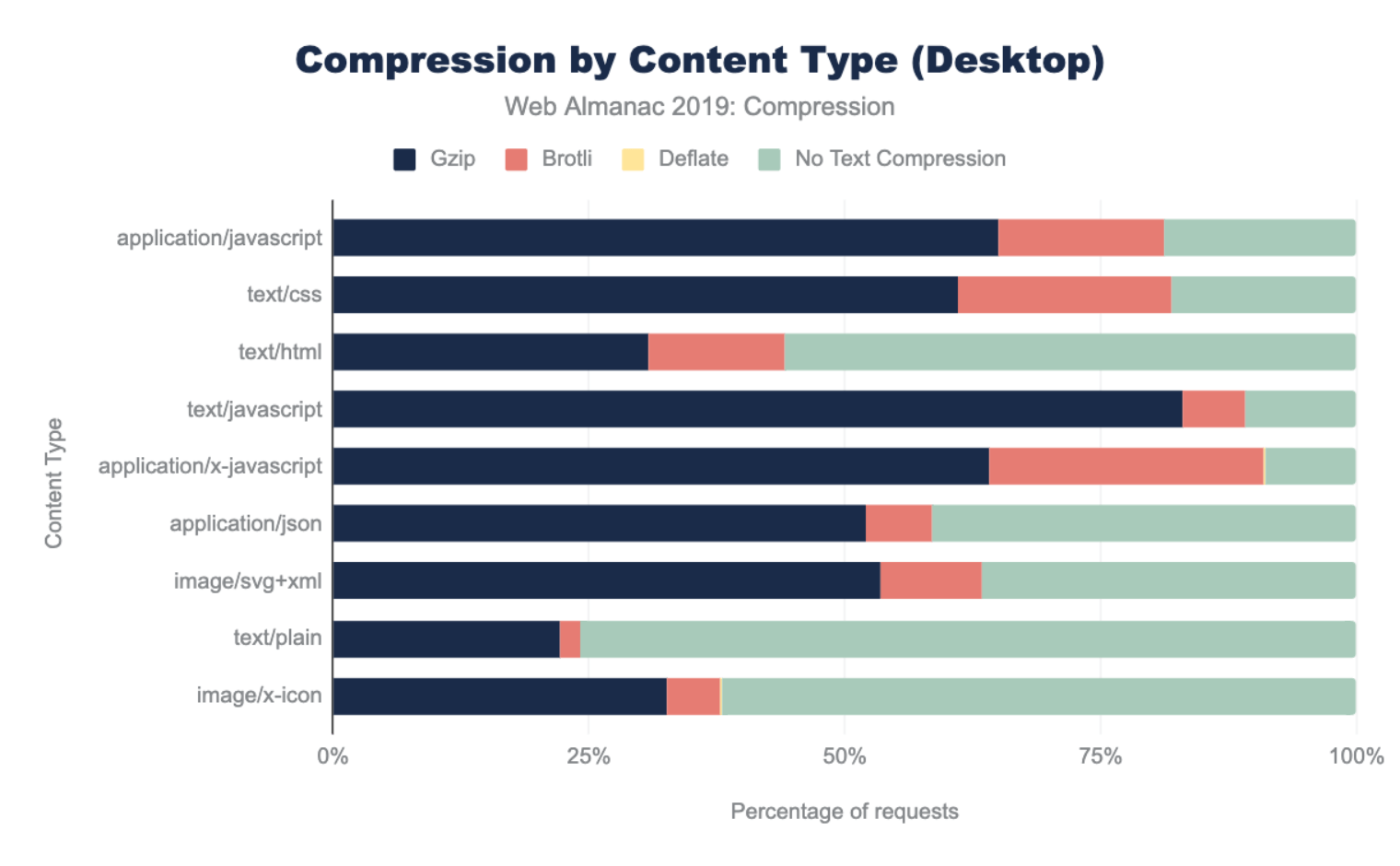

Resource compression

In the context of browser-server interactions, resource compression refers to code that has been modified using a data compression algorithm. Resources can be compressed statically ahead of time or on-the-fly as they are requested by the browser, and for either approach the transferred resource size is significantly reduced which improves page performance.

There are multiple text-compression algorithms, but only two are mostly used for the compression (and decompression) of HTTP network requests:

- [Gzip](https://www.gzip.org/) (gzip): The most widely used compression format for server and client interactions

- [Brotli](https://github.com/google/brotli) (br): A newer compression algorithm aiming to further improve compression ratios. [90% of browsers](https://caniuse.com/#feat=brotli) support Brotli encoding.

Compressed scripts always need to be decompressed by the browser once transferred. This means their content remains the same and execution times are not optimized whatsoever. Resource compression, however, will always improve download times, which is also one of the most expensive stages of JavaScript processing. Ensuring JavaScript files are compressed correctly can be one of the most significant factors in improving site performance.
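The point that compression shrinks the transfer without changing the content can be checked with a small Node.js sketch using the built-in zlib module. The repetitive sample string is a stand-in for a real bundle and is an assumption of this example, so the sizes it prints say nothing about typical production ratios.

```javascript
const zlib = require('zlib');

// A deliberately repetitive stand-in for a JavaScript bundle.
const source = Buffer.from(
  'function add(a, b) { return a + b; }\n'.repeat(500),
  'utf8'
);

// Compress the same bytes with both encodings discussed above.
const gzipped = zlib.gzipSync(source);
const brotli = zlib.brotliCompressSync(source);

// Decompression restores the exact original bytes, so the script the
// browser parses and executes is unchanged.
const gzipRoundTrip = zlib.gunzipSync(gzipped);
const brotliRoundTrip = zlib.brotliDecompressSync(brotli);

console.log({
  originalBytes: source.length,
  gzipBytes: gzipped.length,
  brotliBytes: brotli.length,
  identicalAfterGzip: gzipRoundTrip.equals(source),
  identicalAfterBrotli: brotliRoundTrip.equals(source),
});
```

On a real server this work is usually delegated to the web server or CDN, which picks an encoding based on the request's Accept-Encoding header.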

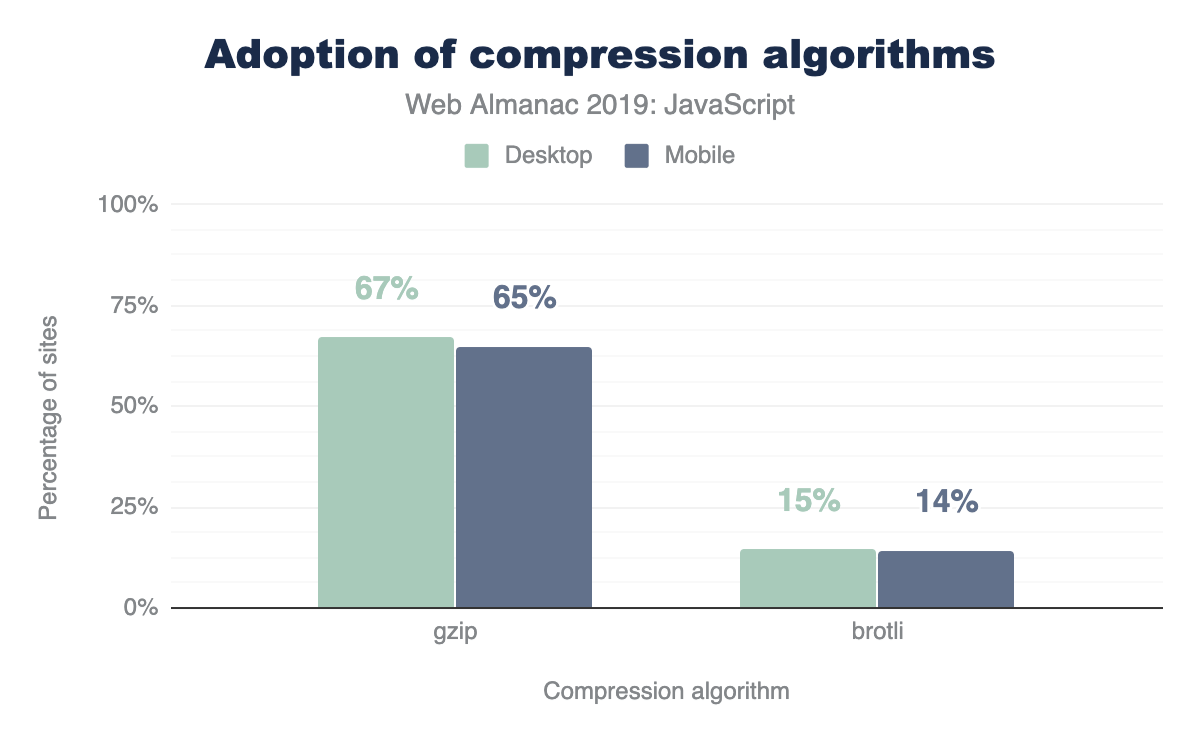

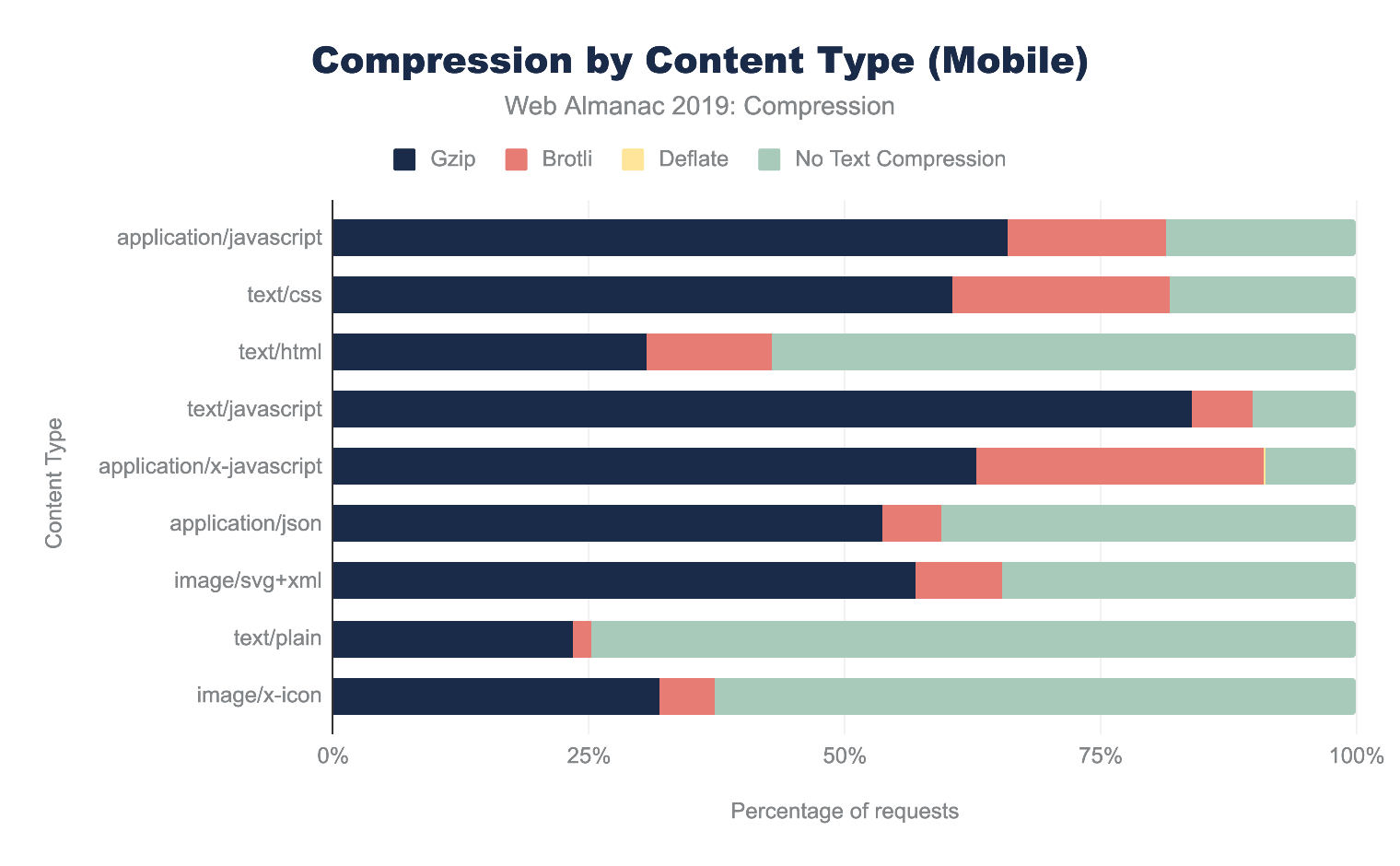

How many sites are compressing their JavaScript resources?

The majority of sites are compressing their JavaScript resources. Gzip encoding is used on ~64-67% of sites and Brotli on ~14%. These adoption rates are similar for both desktop and mobile.

For a deeper analysis on compression, refer to the “Compression” chapter.

Open source libraries and frameworks

Open source code is code with a permissive license that can be accessed, viewed and modified by anyone. From tiny libraries to entire browsers, such as [Chromium](https://www.chromium.org/Home) and [Firefox](https://www.openhub.net/p/firefox), open source code plays a crucial role in the world of web development. In the context of JavaScript, developers rely on open source tooling to include all types of functionality into their web page. Regardless of whether a developer decides to use a small utility library or a massive framework that dictates the architecture of their entire application, relying on open-source packages can make feature development easier and faster. So which JavaScript open-source libraries are used the most?

| Library | Desktop | Mobile |
|---|---|---|
| jQuery | 85.03% | 83.46% |
| jQuery Migrate | 31.26% | 31.68% |
| jQuery UI | 23.60% | 21.75% |
| Modernizr | 17.80% | 16.76% |
| FancyBox | 7.04% | 6.61% |
| Lightbox | 6.02% | 5.93% |
| Slick | 5.53% | 5.24% |
| Moment.js | 4.92% | 4.29% |
| Underscore.js | 4.20% | 3.82% |
| prettyPhoto | 2.89% | 3.09% |
| Select2 | 2.78% | 2.48% |
| Lodash | 2.65% | 2.68% |
| Hammer.js | 2.28% | 2.70% |
| YUI | 1.84% | 1.50% |
| Lazy.js | 1.26% | 1.56% |
| Fingerprintjs | 1.21% | 1.32% |
| script.aculo.us | 0.98% | 0.85% |
| Polyfill | 0.97% | 1.00% |
| Flickity | 0.83% | 0.92% |
| Zepto | 0.78% | 1.17% |
| Dojo | 0.70% | 0.62% |

[jQuery](https://jquery.com/), the most popular JavaScript library ever created, is used in 85.03% of desktop pages and 83.46% of mobile pages. The advent of many Browser APIs and methods, such as [Fetch](https://developer.mozilla.org/docs/Web/API/Fetch_API) and [querySelector](https://developer.mozilla.org/docs/Web/API/Document/querySelector), standardized much of the functionality provided by the library into a native form. Although the popularity of jQuery may seem to be declining, why is it still used in the vast majority of the web?

There are a number of possible reasons:

- [WordPress](https://wordpress.org/), which is used in more than 30% of sites, includes jQuery by default.

- Switching from jQuery to a newer client-side library can take time depending on how large an application is, and many sites may consist of jQuery in addition to newer client-side libraries.

Other top used JavaScript libraries include jQuery variants (jQuery Migrate, jQuery UI), [Modernizr](https://modernizr.com/), [Moment.js](https://momentjs.com/), [Underscore.js](https://underscorejs.org/) and so on.

Frameworks and UI libraries

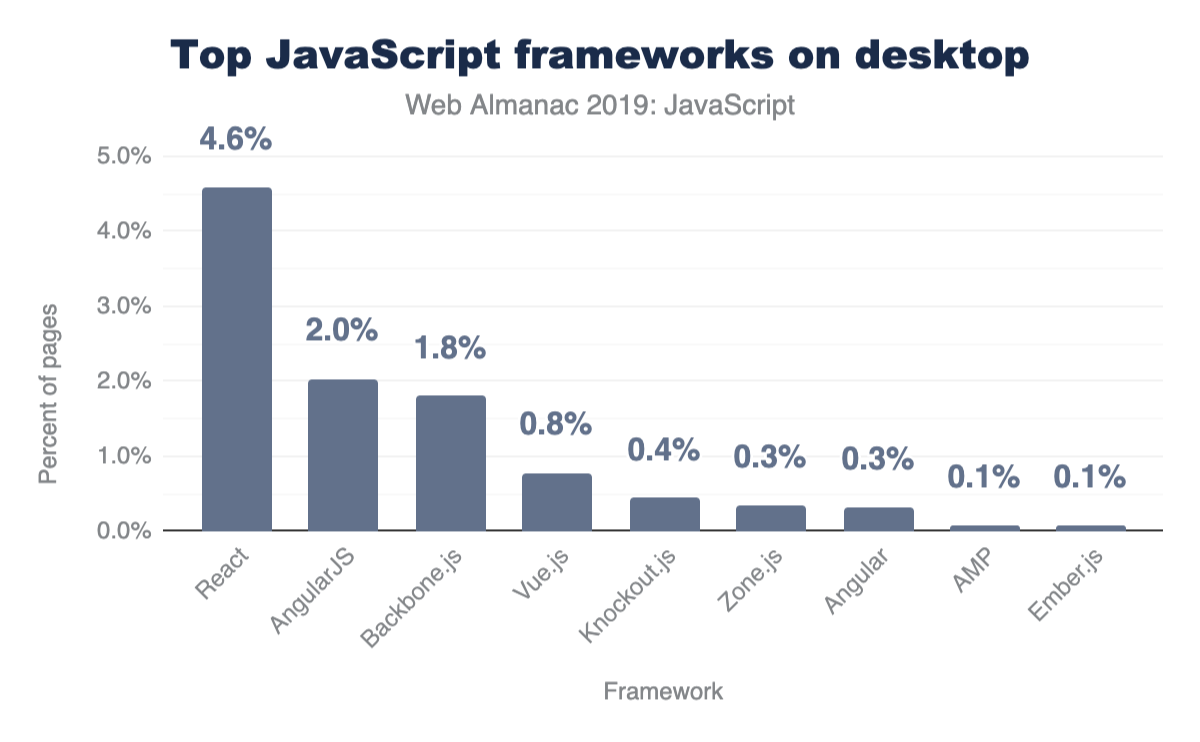

Over the past several years, the JavaScript ecosystem has seen a rise in open-source libraries and frameworks to make building single-page applications (SPAs) easier. A single-page application is characterized as a web page that loads a single HTML page and uses JavaScript to modify the page on user interaction instead of fetching new pages from the server. Although this remains the main premise of single-page applications, different server-rendering approaches can still be used to improve the experience of such sites. How many sites use these types of frameworks?

Only a subset of popular frameworks is analyzed here, but it’s important to note that all of them follow one of these two approaches:

- A [model-view-controller](https://developer.chrome.com/apps/app_frameworks) (or model-view-viewmodel) architecture

- A component-based architecture

Although there has been a shift towards a component-based model, many older frameworks that follow the MVC paradigm ([AngularJS](https://angularjs.org/), [Backbone.js](https://backbonejs.org/), [Ember](https://emberjs.com/)) are still being used in thousands of pages. However, [React](https://reactjs.org/), [Vue](https://vuejs.org/) and [Angular](https://angular.io/) are the most popular component-based frameworks ([Zone.js](https://github.com/angular/zone.js) is a package that is now part of Angular core).

Differential loading

[JavaScript modules](https://v8.dev/features/modules), or ES modules, are supported in [all major browsers](https://caniuse.com/#feat=es6-module). Modules provide the capability to create scripts that can import and export from other modules. This allows anyone to build their applications architected in a module pattern, importing and exporting wherever necessary, without relying on third-party module loaders.

To declare a script as a module, the script tag must get the type="module" attribute:

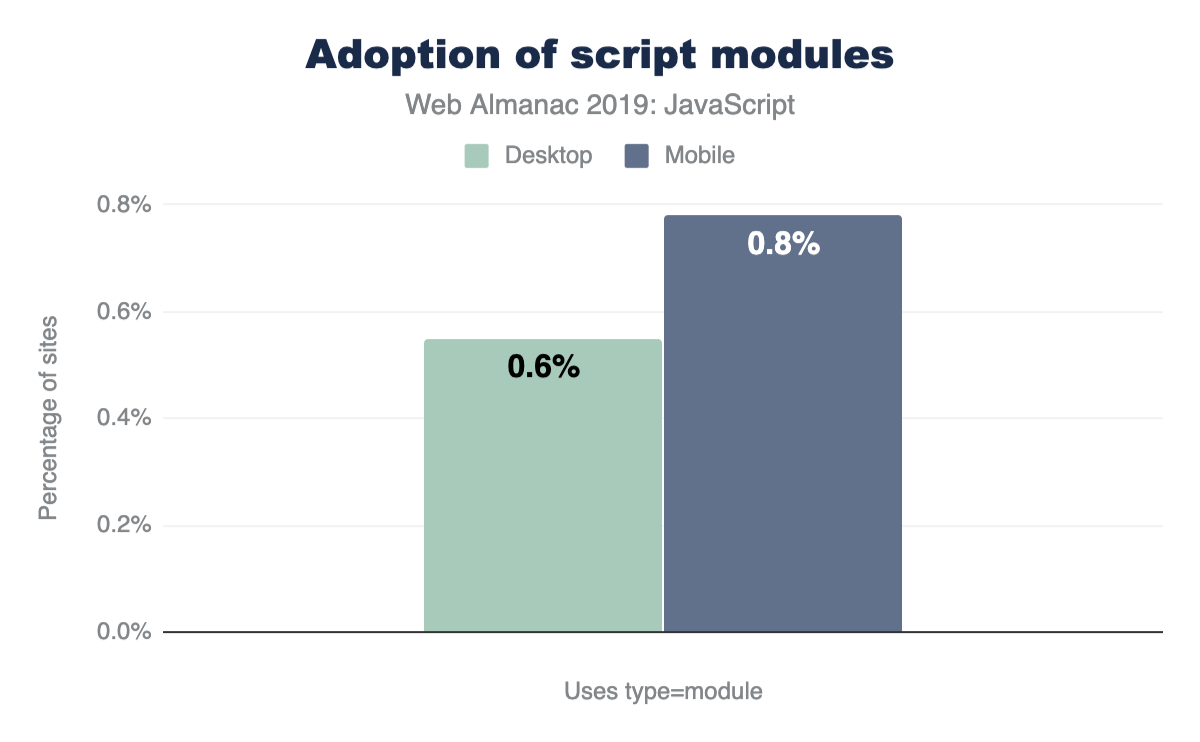

How many sites use type="module" for scripts on their page?

Browser-level support for modules is still relatively new, and the numbers here show that very few sites currently use type="module" for their scripts. Many sites are still relying on module loaders (2.37% of all desktop sites use [RequireJS](https://github.com/requirejs/requirejs) for example) and bundlers ([webpack](https://webpack.js.org/) for example) to define modules within their codebase.

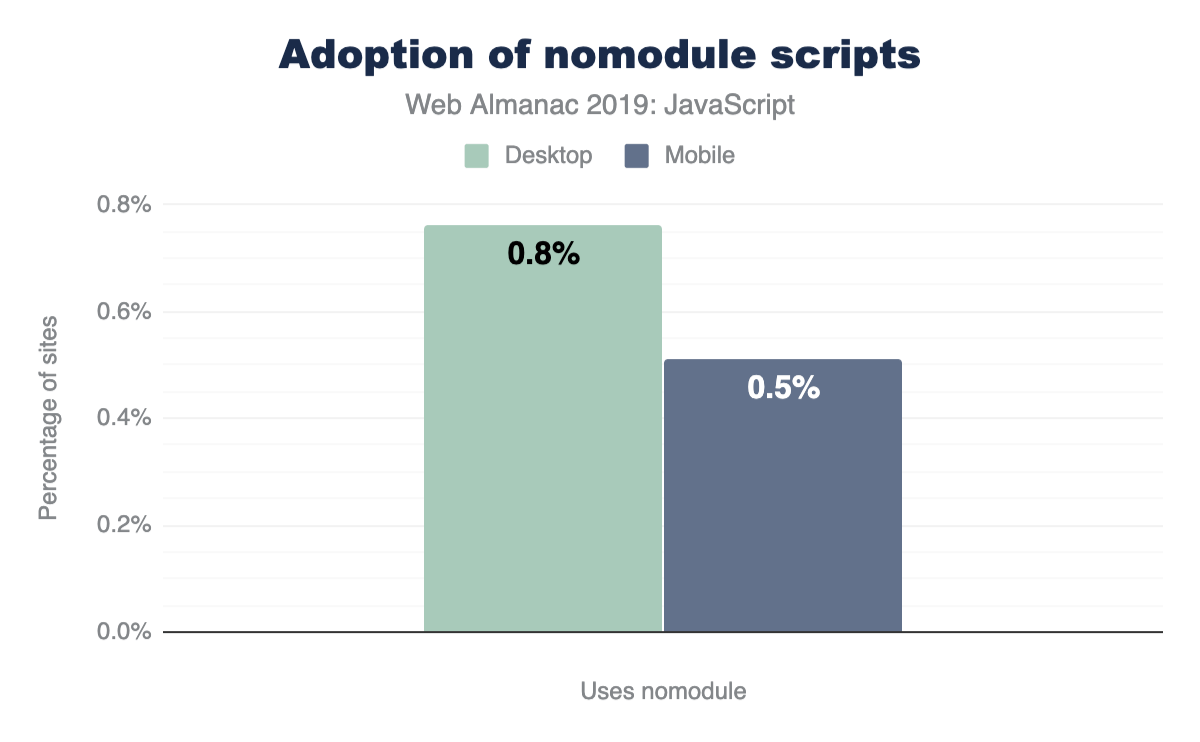

If native modules are used, it’s important to ensure that an appropriate fallback script is used for browsers that do not yet support modules. This can be done by including an additional script with a nomodule attribute.

When used together, browsers that support modules will completely ignore any scripts containing the nomodule attribute. On the other hand, browsers that do not yet support modules will not download any scripts with type="module". Since they do not recognize nomodule either, they will download scripts with the attribute normally. Using this approach can allow developers to send modern code to modern browsers for [faster page loads](https://web.dev/serve-modern-code-to-modern-browsers/). So, how many sites use nomodule for scripts on their page?
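The module/nomodule pairing described above can be sketched as a small build-time helper that emits both script tags. The function name and file names are hypothetical, and the helper assumes the paths are trusted values that need no HTML escaping.

```javascript
// Hypothetical build helper: returns the two script tags used for
// differential loading. Browsers with module support load the first tag
// and skip the second; browsers without it do the reverse.
function differentialScriptTags(modernSrc, legacySrc) {
  return [
    `<script type="module" src="${modernSrc}"></script>`,
    `<script nomodule src="${legacySrc}"></script>`,
  ].join('\n');
}

// Placeholder bundle names for illustration only.
const tags = differentialScriptTags('/js/main.mjs', '/js/main.legacy.js');
console.log(tags);
```

The printed markup is what would be placed in the page: one bundle with modern syntax and one transpiled fallback, with each browser fetching only the bundle it can run.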

Similarly, very few sites (0.50%-0.80%) use the nomodule attribute for any scripts.

Preload and prefetch

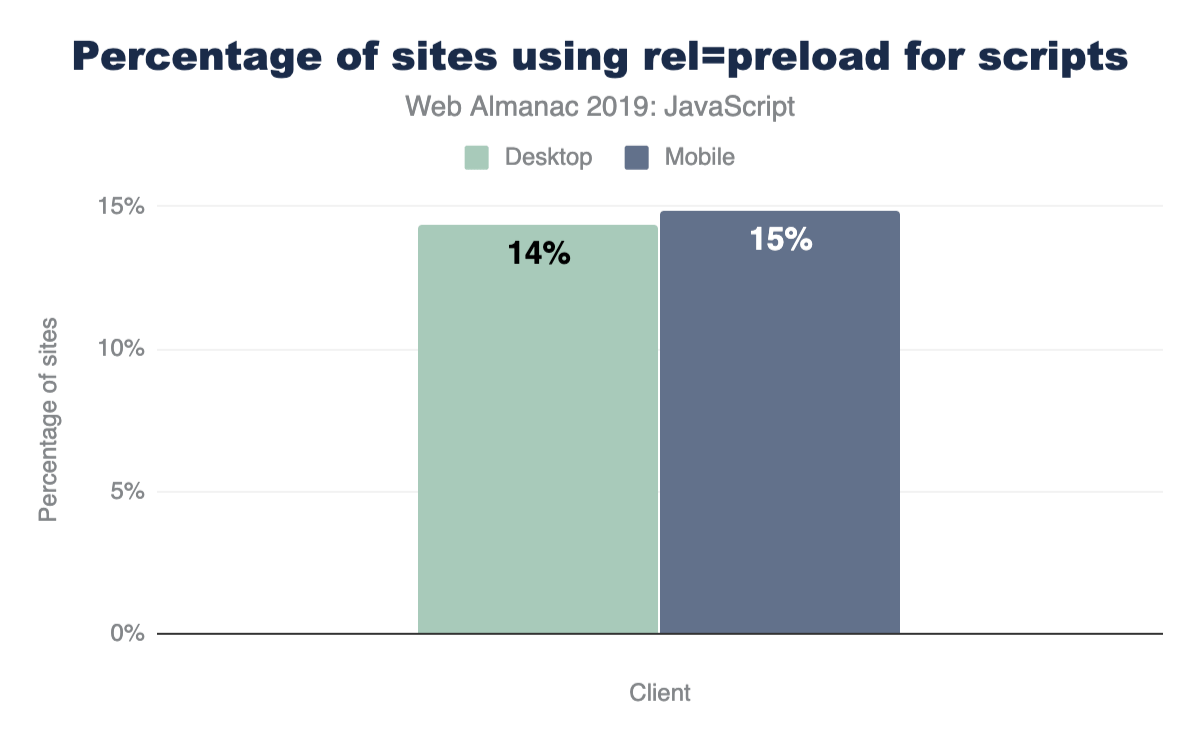

[Preload](https://developer.mozilla.org/docs/Web/HTML/Link_types/preload) and [prefetch](https://developer.mozilla.org/docs/Web/HTTP/Link_prefetching_FAQ) are resource hints which enable you to aid the browser in determining what resources need to be downloaded.

- Preloading a resource with <link rel="preload"> tells the browser to download this resource as soon as possible. This is especially helpful for critical resources which are discovered late in the page loading process (e.g., JavaScript located at the bottom of your HTML) and are otherwise downloaded last.

- Using <link rel="prefetch"> tells the browser to take advantage of any idle time it has to fetch these resources needed for future navigations.

So, how many sites use preload and prefetch directives?

For all sites measured in HTTP Archive, 14.33% of desktop sites and 14.84% of mobile sites use <link rel="preload"> for scripts on their page.

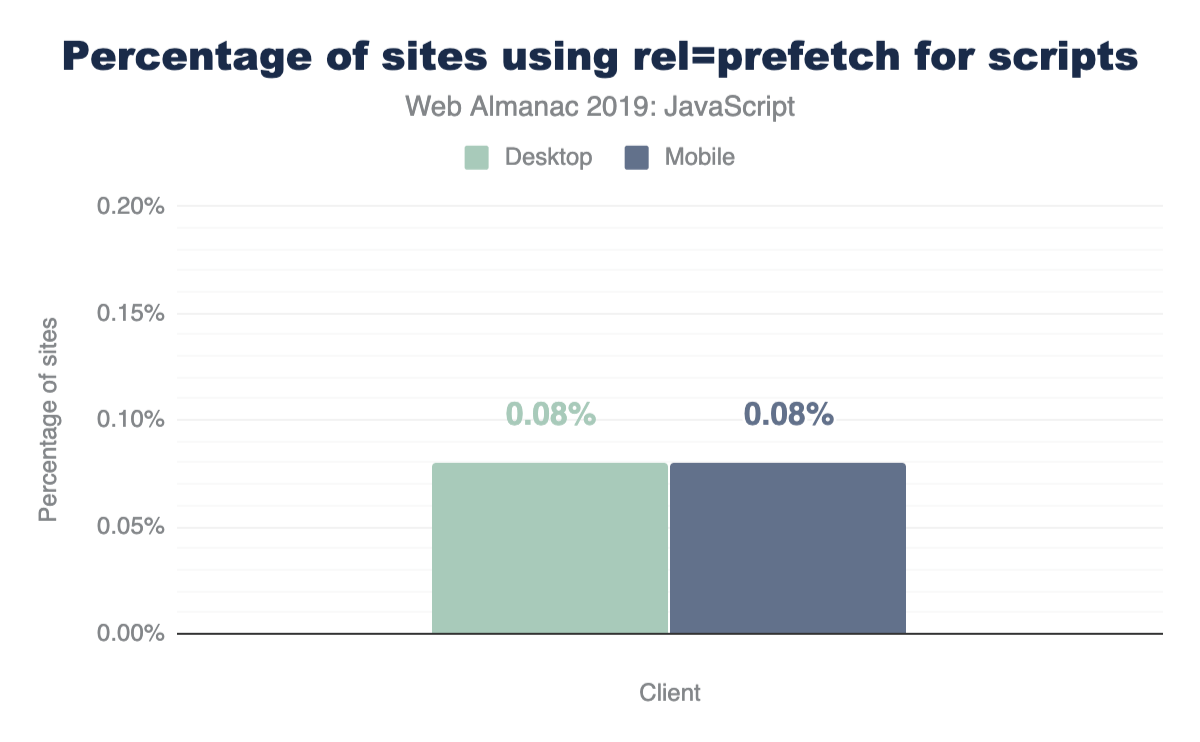

For prefetch, we have the following:

For both mobile and desktop, 0.08% of pages leverage prefetch for any of their scripts.
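Besides writing the link tags directly in HTML, resource hints for scripts can also be added from JavaScript. The following is a minimal sketch under stated assumptions: the function name and paths are hypothetical, and the document object is passed in as a parameter so the same code can run against a stub outside the browser.

```javascript
// Appends a <link> resource hint for a script to the given document's head.
function addScriptHint(doc, rel, href) {
  if (rel !== 'preload' && rel !== 'prefetch') {
    throw new RangeError(`Unsupported hint: ${rel}`);
  }
  const link = doc.createElement('link');
  link.rel = rel;
  link.href = href;
  // Preload needs the destination type so the browser can prioritize the
  // request and match it to the script element that later uses it.
  if (rel === 'preload') link.as = 'script';
  doc.head.appendChild(link);
  return link;
}

// In a browser (paths are placeholders):
//   addScriptHint(document, 'preload', '/js/critical.js');
//   addScriptHint(document, 'prefetch', '/js/next-page.js');
```

A hint added this way is only discovered once the script that adds it has run, so hints for resources needed during the initial load are usually better placed in the HTML itself.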

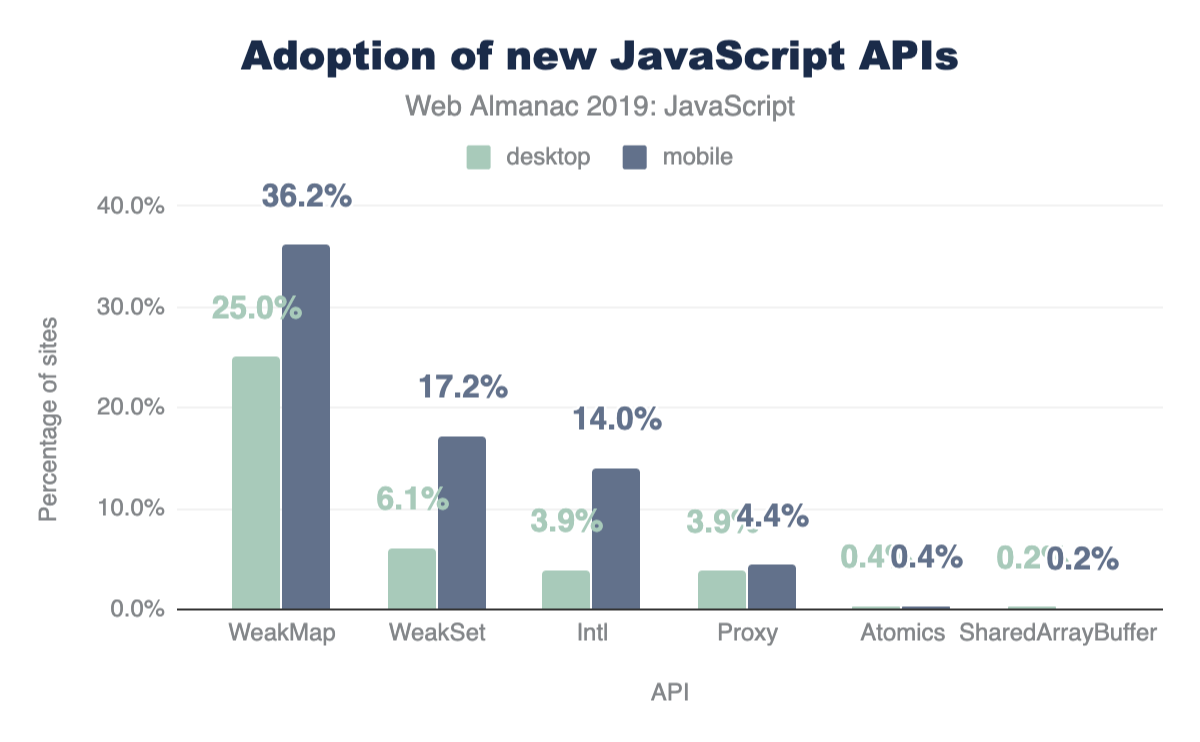

Newer APIs

JavaScript continues to evolve as a language. A new version of the language standard itself, known as ECMAScript, is released every year with new APIs and features passing proposal stages to become a part of the language itself.

With HTTP Archive, we can take a look at any newer API that is supported (or is about to be) and see how widespread its usage is. These APIs may already be used in browsers that support them or with an accompanying polyfill to make sure they still work for every user.

How many sites use the following APIs?

- [Atomics](https://developer.mozilla.org/docs/Web/JavaScript/Reference/Global_Objects/Atomics)

- [Intl](https://developer.mozilla.org/docs/Web/JavaScript/Reference/Global_Objects/Intl)

- [Proxy](https://developer.mozilla.org/docs/Web/JavaScript/Reference/Global_Objects/Proxy)

- [SharedArrayBuffer](https://developer.mozilla.org/docs/Web/JavaScript/Reference/Global_Objects/SharedArrayBuffer)

- [WeakMap](https://developer.mozilla.org/docs/Web/JavaScript/Reference/Global_Objects/WeakMap)

- [WeakSet](https://developer.mozilla.org/docs/Web/JavaScript/Reference/Global_Objects/WeakSet)

Atomics (0.38%) and SharedArrayBuffer (0.20%) are barely visible on this chart since they are used on so few pages.

It is important to note that the numbers here are approximations and they do not leverage [UseCounter](https://chromium.googlesource.com/chromium/src.git/+/master/docs/use_counter_wiki.md) to measure feature usage.
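Sites that rely on these APIs typically check for them at runtime before using them or loading a polyfill. The sketch below shows that kind of feature detection for the six globals listed above. It is only an illustration of how a page might guard its own usage, not the method used to produce the numbers in this chapter, and the function name is hypothetical.

```javascript
const apiNames = ['Atomics', 'Intl', 'Proxy', 'SharedArrayBuffer', 'WeakMap', 'WeakSet'];

// Reports which of the listed globals exist on the given scope
// (the current global object by default).
function detectApis(scope = globalThis) {
  const support = {};
  for (const name of apiNames) {
    support[name] = typeof scope[name] !== 'undefined';
  }
  return support;
}

const support = detectApis();
if (!support.WeakMap) {
  // A real page might load a polyfill or fall back to a plain Map here.
  console.log('WeakMap is unavailable in this environment');
}
console.log(support);
```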

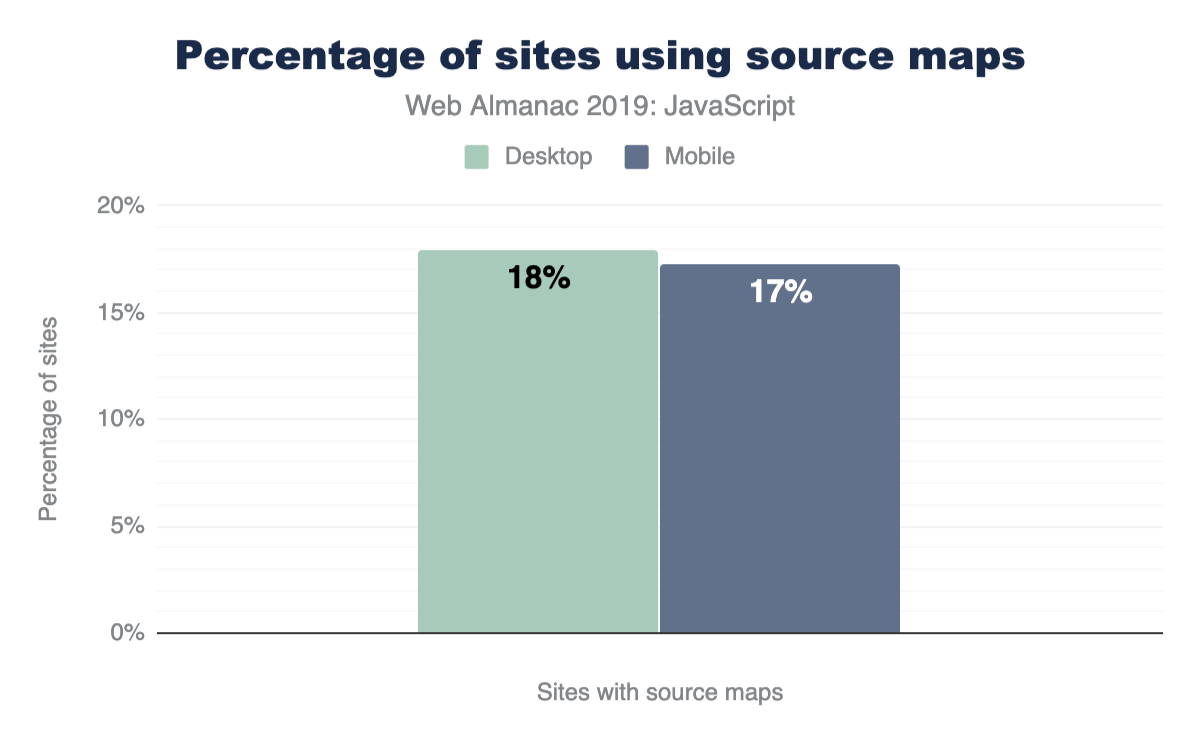

Source maps

In many build systems, JavaScript files undergo minification to minimize their size and transpilation to support newer language features that are not yet available in many browsers. Moreover, language supersets like [TypeScript](https://www.typescriptlang.org/) compile to an output that can look noticeably different from the original source code. For all these reasons, the final code served to the browser can be unreadable and hard to decipher.

A source map is an additional file accompanying a JavaScript file that allows a browser to map the final output to its original source. This can make debugging and analyzing production bundles much simpler.

Although useful, there are a number of reasons why many sites may not want to include source maps in their final production site, such as choosing not to expose complete source code to the public. So how many sites actually include source maps?

For both desktop and mobile pages, the results are about the same. 17-18% include a source map for at least one script on the page (detected as a first-party script with sourceMappingURL).
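A script usually points to its source map through a trailing sourceMappingURL comment. As a rough sketch of how such a reference can be spotted in a script's text, the helper below looks for that comment at the end of the file. The function name is hypothetical and this is not the query used for the figures in this chapter.

```javascript
// Matches a trailing comment of the form  //# sourceMappingURL=<url>
// (the older //@ prefix is accepted as well).
const SOURCE_MAP_COMMENT = /\/\/[#@]\s*sourceMappingURL=(\S+)\s*$/;

// Returns the referenced source map URL, or null when none is present.
function findSourceMapUrl(scriptText) {
  const match = SOURCE_MAP_COMMENT.exec(scriptText.trimEnd());
  return match ? match[1] : null;
}

const bundled = 'console.log("hi");\n//# sourceMappingURL=app.min.js.map\n';
console.log(findSourceMapUrl(bundled)); // app.min.js.map
console.log(findSourceMapUrl('console.log("hi");')); // null
```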

Conclusion

The JavaScript ecosystem continues to change and evolve every year. Newer APIs, improved browser engines, and fresh libraries and frameworks are all things we can expect to happen indefinitely. HTTP Archive provides us with valuable insight on how sites in the wild use the language.

Without JavaScript, the web would not be where it is today, and all the data gathered for this article only proves this.

CSS

Introduction

Cascading Style Sheets (CSS) are used to paint, format, and lay out web pages. Their capabilities span concepts as simple as text color to 3D perspective. CSS also has hooks to empower developers to handle varying screen sizes, viewing contexts, and printing. CSS helps developers wrangle content and ensure it adapts properly to the user.

When describing CSS to those not familiar with web technology, it can be helpful to think of it as the language to paint the walls of the house, describing the size and position of windows and doors, as well as flourishing decorations such as wallpaper or plant life. The fun twist to that story is that depending on the user walking through the house, a developer can adapt the house to that specific user’s preferences or contexts!

In this chapter, we’ll be inspecting, tallying, and extracting data about how CSS is used across the web. Our goal is to holistically understand what features are being used, how they’re used, and how CSS is growing and being adopted.

Ready to dig into the fascinating data?! Many of the following numbers may be small, but don’t mistake them as insignificant! It can take many years for new things to saturate the web.

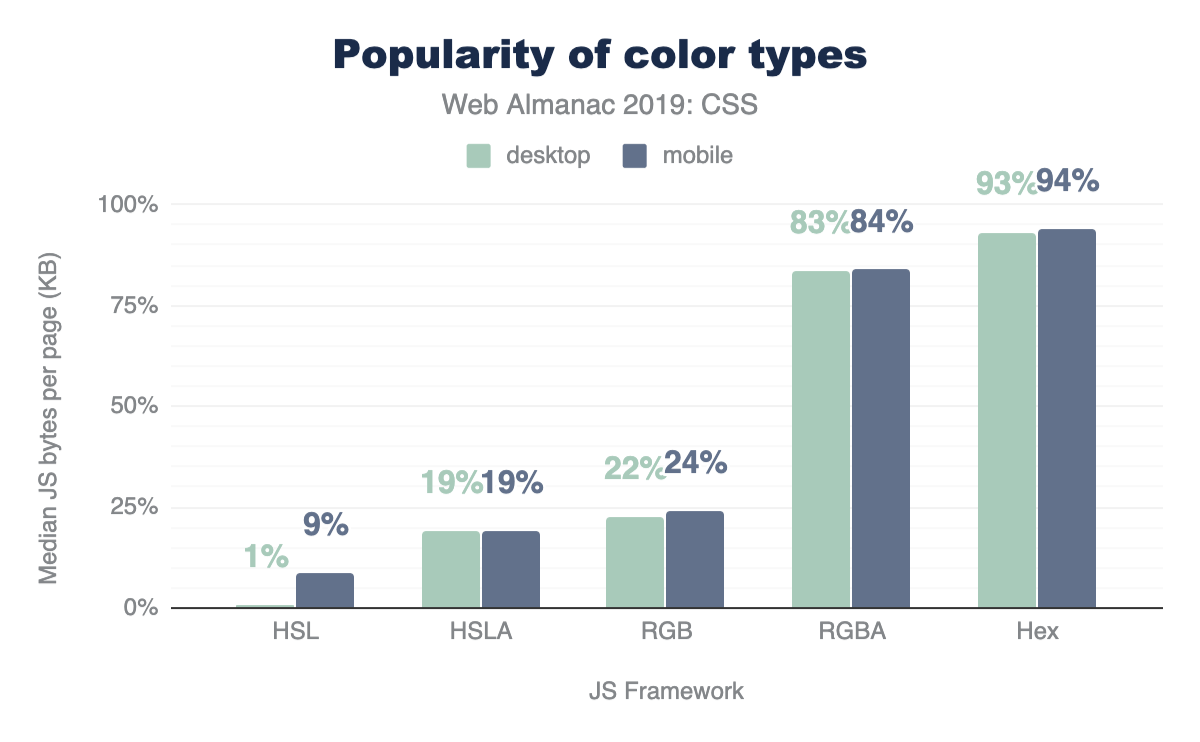

Color

Color is an integral part of theming and styling on the web. Let’s take a look at how websites tend to use color.

Color types

Hex is the most popular way to describe color by far, with 93% usage, followed by RGB, and then HSL. Interestingly, developers are taking full advantage of the alpha-transparency argument when it comes to these color types: HSLA and RGBA are far more popular than HSL and RGB, with almost double the usage! Even though the alpha-transparency was added later to the web spec, HSLA and RGBA are supported as far back as [IE9](https://caniuse.com/#feat=css3-colors), so you can go ahead and use them, too!
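To show what separates these color types syntactically, here is a rough sketch that buckets a CSS color value by its notation. The function name is hypothetical, named colors and anything unrecognized are lumped into one bucket for brevity, and this is not the classification logic behind the numbers above.

```javascript
// Buckets a CSS color value by notation: hex, rgb, rgba, hsl, hsla, or other.
function colorType(value) {
  const v = value.trim().toLowerCase();
  // Hex colors have 3, 4, 6, or 8 hexadecimal digits after the hash.
  if (/^#([0-9a-f]{3,4}|[0-9a-f]{6}|[0-9a-f]{8})$/.test(v)) return 'hex';
  // Functional notations are identified by the function name.
  const fn = /^(rgba?|hsla?)\(/.exec(v);
  if (fn) return fn[1];
  return 'other';
}

console.log(colorType('#FFF')); // hex
console.log(colorType('rgba(0, 0, 0, 0.5)')); // rgba
console.log(colorType('hsl(120, 50%, 50%)')); // hsl
console.log(colorType('rebeccapurple')); // other
```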

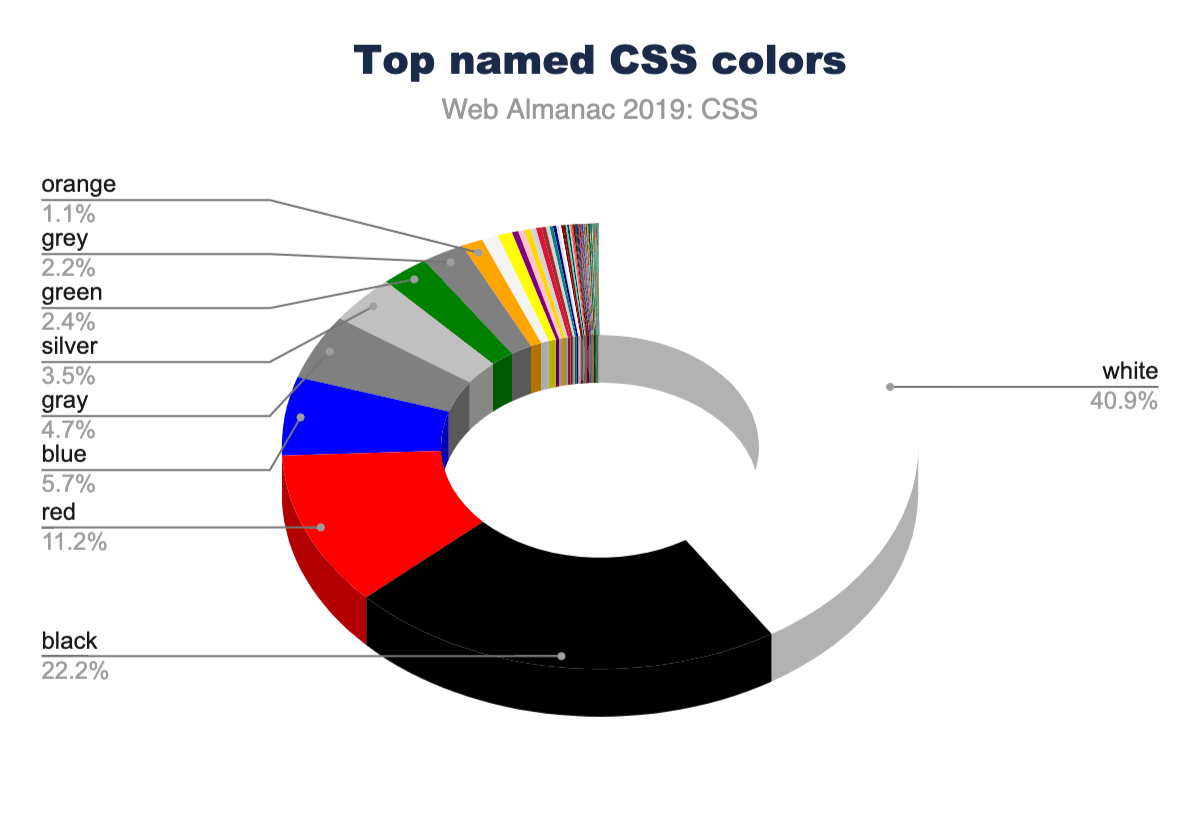

Color selection

There are [148 named CSS colors](https://www.w3.org/TR/css-color-4/#named-colors), not including the special values transparent and currentcolor. You can use these by their string name for more readable styling. The most popular named colors are black and white, unsurprisingly, followed by red and blue.

Language is interestingly inferred via color as well. There are more instances of the American-style “gray” than the British-style “grey”. Almost every instance of [gray colors](https://www.rapidtables.com/web/color/gray-color.html) (gray, lightgray, darkgray, slategray, etc.) had nearly double the usage when spelled with an “a” instead of an “e”. If gr[a/e]ys were combined, they would rank higher than blue, solidifying themselves in the #4 spot. This could be why silver is ranked higher than grey with an “e” in the charts!

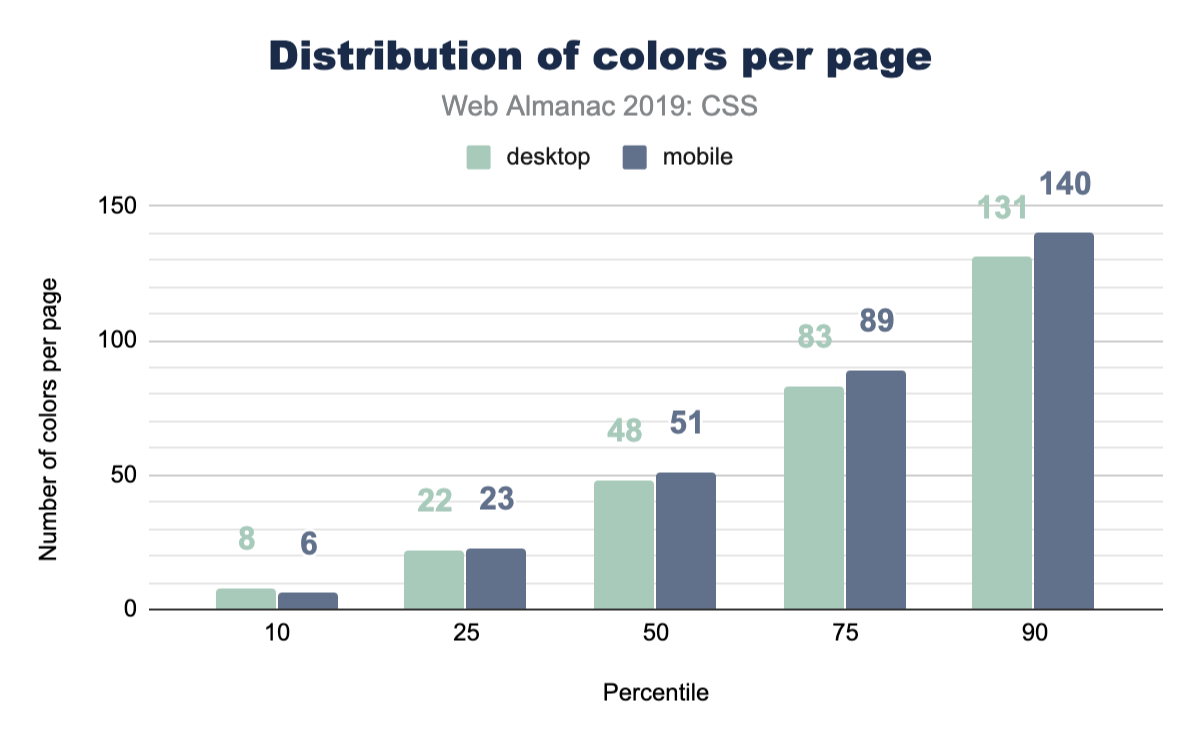

Color count

How many different font colors are used across the web? Note that this isn’t the total number of unique colors; rather, it’s how many different colors are used just for text. The numbers in this chart are quite high, and from experience, we know that without CSS variables, spacing, sizes and colors can quickly get away from you and fragment into lots of tiny values across your styles. These numbers reflect a difficulty of style management, and we hope this helps create some perspective for you to bring back to your teams or projects. How can you reduce this number into a manageable and reasonable amount?

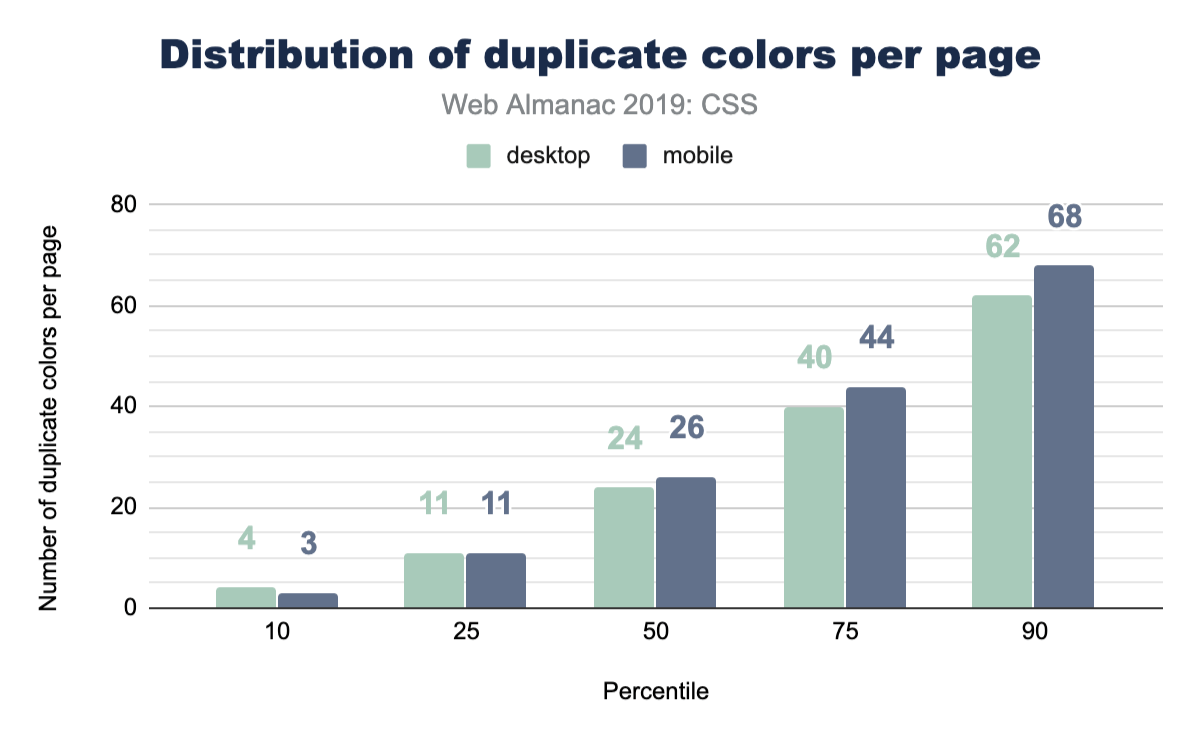

Color duplication

Well, we got curious here and wanted to inspect how many duplicate colors are present on a page. Without a tightly managed reusable class CSS system, duplicates are quite easy to create. It turns out that the median has enough duplicates that it could be worth doing a pass to unify them with custom properties.

Units

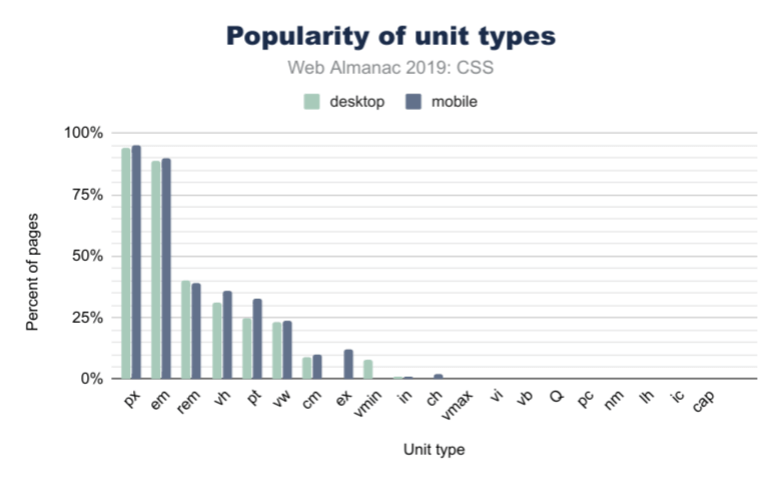

In CSS, there are many different ways to achieve the same visual result using different unit types: rem, px, em, ch, or even cm! So which unit types are most popular?

Length and sizing

Unsurprisingly, in Figure 2.5 above, px is the most used unit type, with about 95% of web pages using pixels in some form or another (this could be element sizing, font size, and so on). However, the em unit is almost as popular, with about 90% usage. This is over 2x more popular than the rem unit, which has only 40% frequency in web pages. If you’re wondering what the difference is, em is relative to the font size of the element it’s used on (or the parent’s font size when it sets font-size itself), while rem is relative to the root font size of the page. Unlike em, rem doesn’t change per component, and thus allows all spacing to be adjusted evenly.
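The difference between em and rem comes down to which font size the unit is multiplied by. The following sketch resolves the three units to pixels under stated assumptions: the function and parameter names are hypothetical, only px, em, and rem are handled, and the font sizes are example values.

```javascript
// Resolves a px, em, or rem length to pixels.
// contextFontSize: the font size (in px) that em resolves against in the
//   surrounding component.
// rootFontSize: the font size (in px) of the root element, which rem
//   always resolves against.
function toPixels(length, { contextFontSize, rootFontSize }) {
  const match = /^(-?\d*\.?\d+)(px|em|rem)$/.exec(length);
  if (!match) throw new SyntaxError(`Unsupported length: ${length}`);
  const amount = Number(match[1]);
  switch (match[2]) {
    case 'px':
      return amount;
    case 'em':
      return amount * contextFontSize;
    default:
      return amount * rootFontSize; // rem
  }
}

// A component with a 20px font size on a page with a 16px root font size.
const sizes = { contextFontSize: 20, rootFontSize: 16 };
console.log(toPixels('1.5em', sizes)); // 30
console.log(toPixels('1.5rem', sizes)); // 24
console.log(toPixels('12px', sizes)); // 12
```

The same 1.5em padding grows or shrinks with each component's font size, while 1.5rem stays tied to the page-level setting.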

When it comes to units based on physical space, the cm (or centimeter) unit is the most popular by far, followed by in (inches), and then Q. We know these types of units are specifically useful for print stylesheets, but we didn’t even know the Q unit existed until this survey! Did you?

Viewport-based units

We saw larger differences in unit types when it comes to mobile and desktop usage for viewport-based units. 36.8% of mobile sites use vh (viewport height), while only 31% of desktop sites do. We also found that vh is more common than vw (viewport width) by about 11%. vmin (viewport minimum) is more popular than vmax (viewport maximum), with about 8% usage of vmin on mobile while vmax is only used by 1% of websites.

Custom properties

Custom properties are what many call CSS variables. They’re more dynamic than a typical static variable though! They’re very powerful and as a community we’re still discovering their potential.

We felt like this was exciting information, since it shows healthy growth of one of our favorite CSS additions. Custom properties have been available in all major browsers since 2016 or 2017, so it’s fair to say they’re fairly new. Many folks are still transitioning from their CSS preprocessor variables to CSS custom properties. We estimate it’ll be a few more years until custom properties are the norm.

Selectors

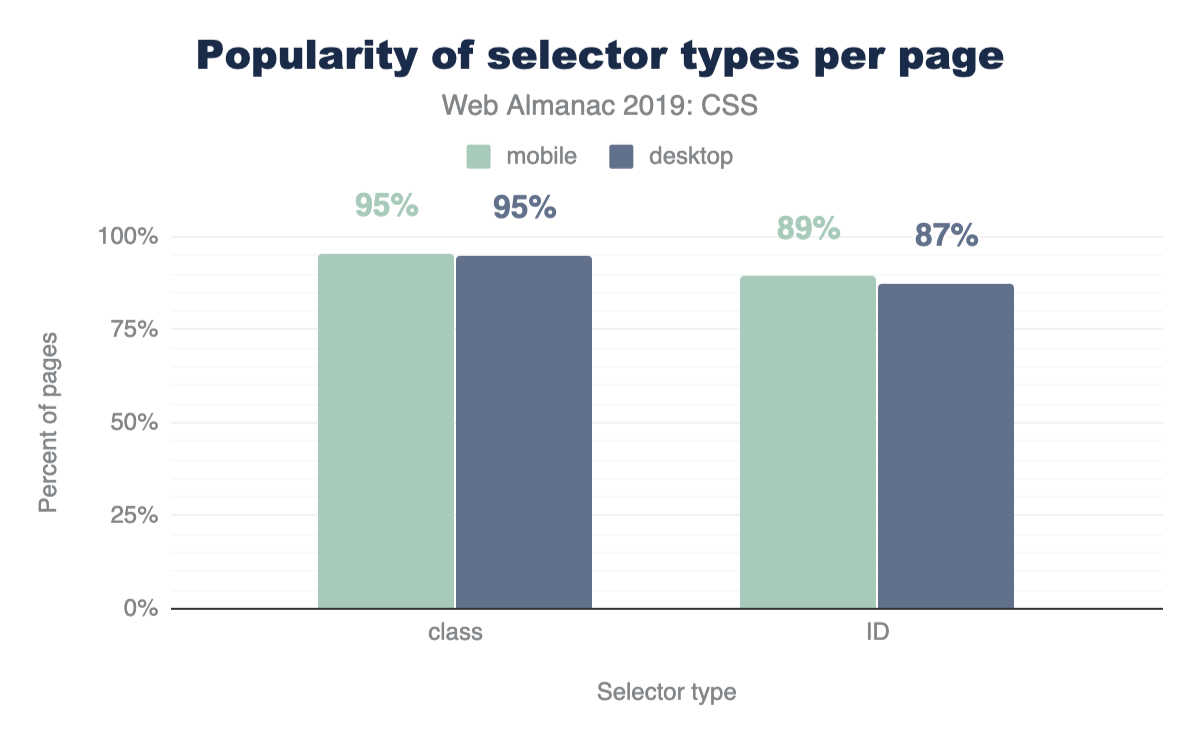

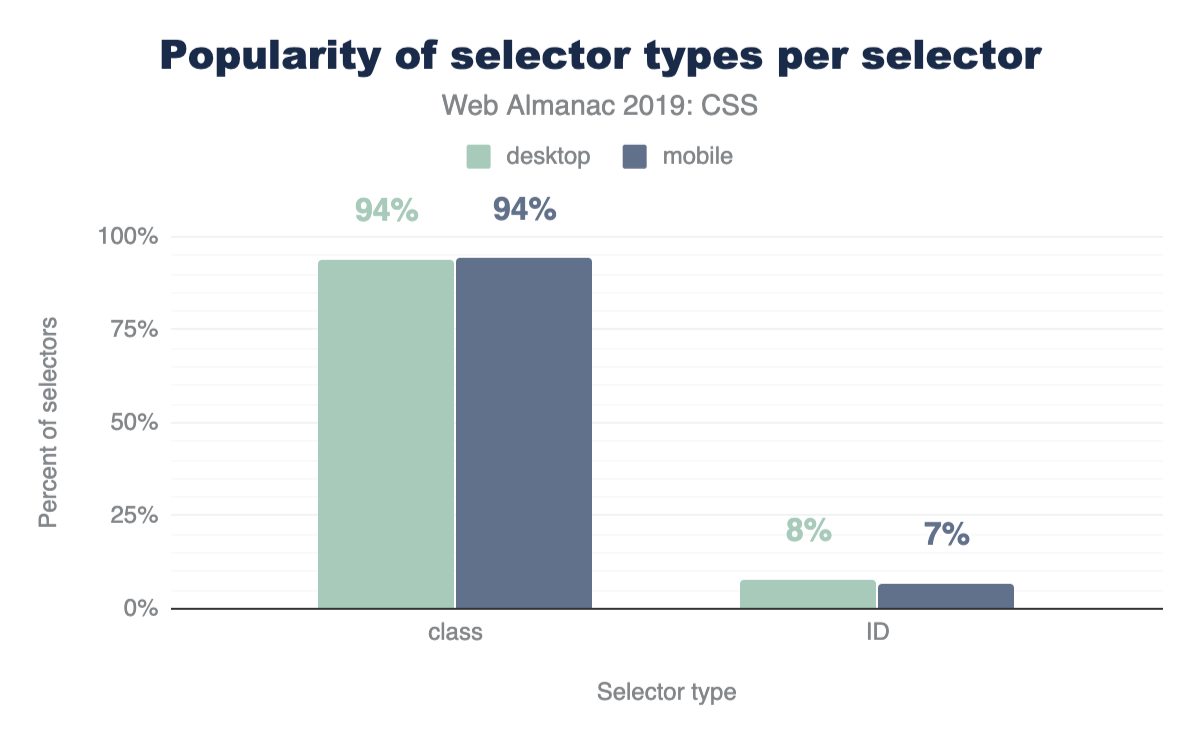

ID vs class selectors

CSS has a few ways to find elements on the page for styling, so let’s put IDs and classes against each other to see which is more prevalent! The results shouldn’t be too surprising: classes are more popular!

A nice follow up chart is this one, showing that classes take up 93% of the selectors found in a stylesheet.

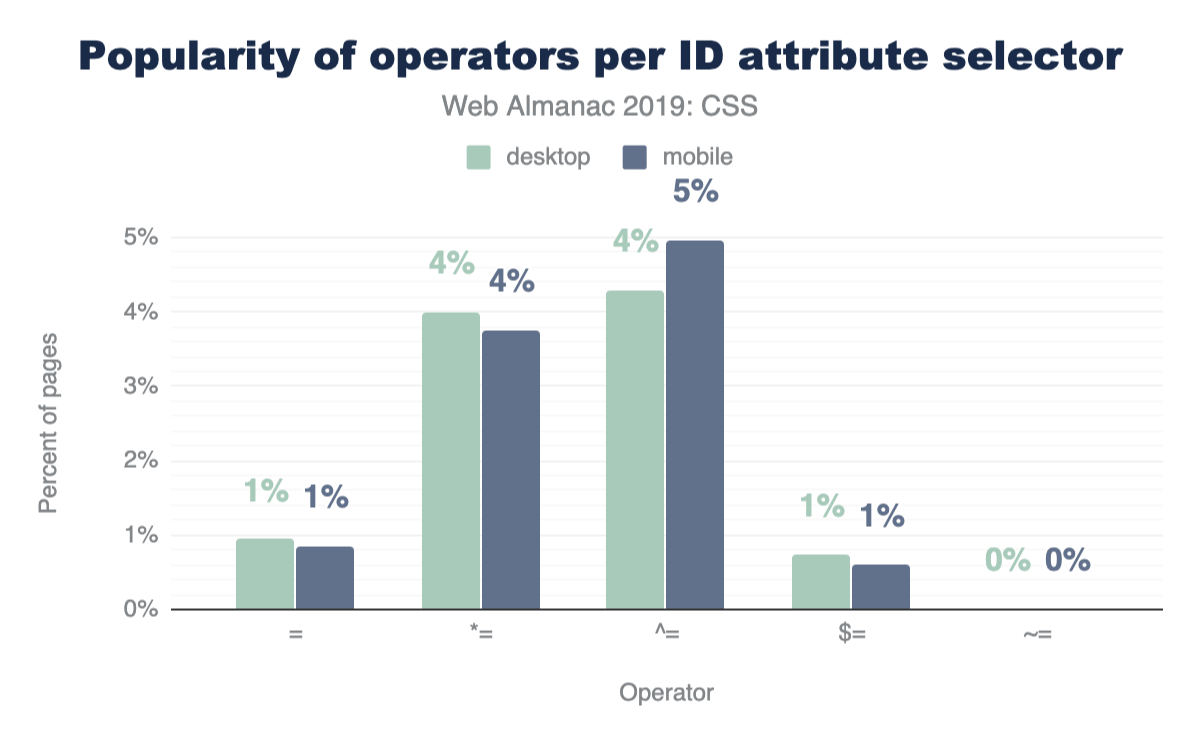

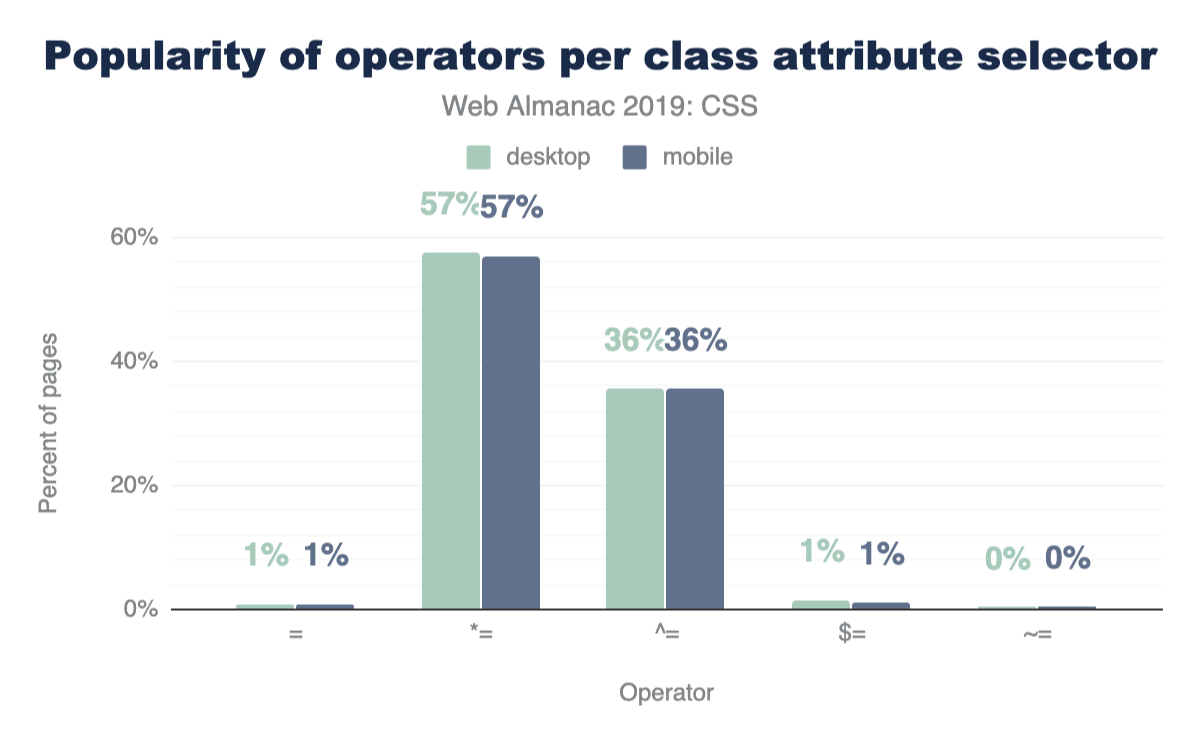

Attribute selectors

CSS has some very powerful comparison selectors. These are selectors like [target="_blank"], [attribute^="value"], [title~="rad"], [attribute$="-rad"] or [attribute*="value"]. Do you use them? Think they’re used a lot? Let’s compare how those are used with IDs and classes across the web.

These operators are much more popular with class selectors than IDs, which feels natural since a stylesheet usually has fewer ID selectors than class selectors, but still neat to see the uses of all these combinations.

Classes per element

With the rise of OOCSS, atomic, and functional CSS strategies which can compose 10 or more classes on an element to achieve a design look, perhaps we’d see some interesting results. The query came back quite unexciting, with the median on mobile and desktop being 1 class per element.

Layout

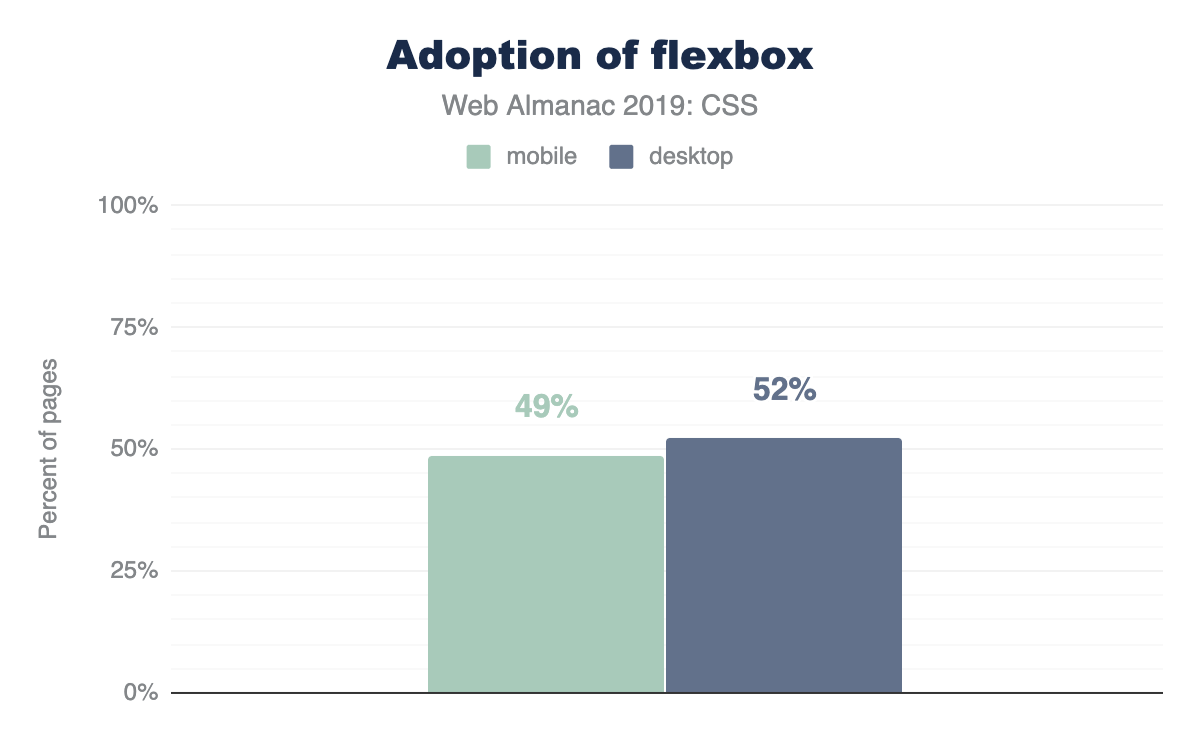

Flexbox

[Flexbox](https://developer.mozilla.org/docs/Web/CSS/CSS_Flexible_Box_Layout/Basic_Concepts_of_Flexbox) is a container style that directs and aligns its children; that is, it helps with layout in a constraint-based way. It had a quite rocky beginning on the web, as its specification went through two or three quite drastic changes between 2010 and 2013. Fortunately, it settled and was implemented across all browsers by 2014. Given that history, it had a slow adoption rate, but it’s been a few years since then! It’s quite popular now and has many articles about it and how to leverage it, but it’s still new in comparison to other layout tactics.

Quite the success story shown here, as nearly 50% of the web has flexbox usage in its stylesheets.

Grid

Like flexbox, [grid](https://developer.mozilla.org/docs/Web/CSS/CSS_Grid_Layout) too went through a few spec alterations early on in its lifespan, but without changing implementations in publicly-deployed browsers. Microsoft had grid in the first versions of Windows 8, as the primary layout engine for its horizontally scrolling design style. It was vetted there first, transitioned to the web, and then hardened by the other browsers until its final release in 2017. It had a very successful launch in that nearly all browsers released their implementations at the same time, so web developers just woke up one day to superb grid support. Today, at the end of 2019, grid still feels like a new kid on the block, as folks are still awakening to its power and capabilities.

This shows just how little the web development community has exercised and explored their latest layout tool. We look forward to the eventual takeover of grid as the primary layout engine folks lean on when building a site. For us authors, we love writing grid: we typically reach for it first, then dial our complexity back as we realize and iterate on layout. It remains to be seen what the rest of the world will do with this powerful CSS feature over the next few years.

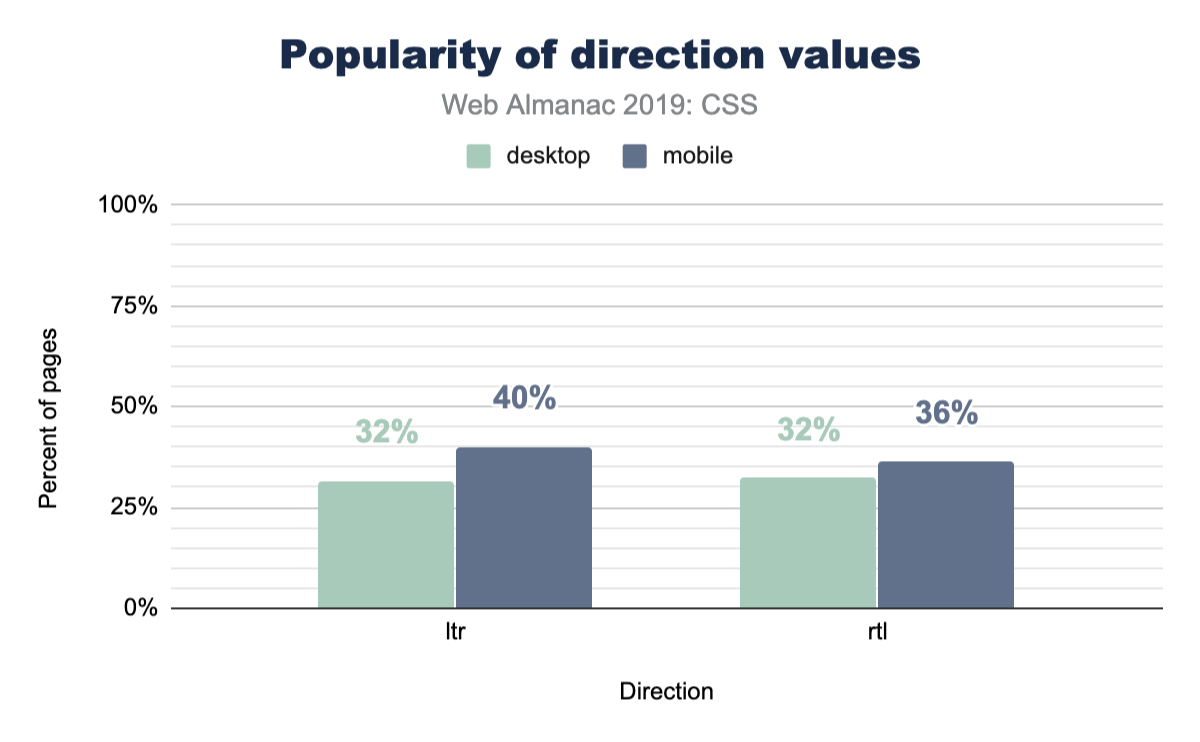

Writing modes

The web and CSS are international platform features, and writing modes offer a way for HTML and CSS to indicate a user’s preferred reading and writing direction within our elements.

Typography

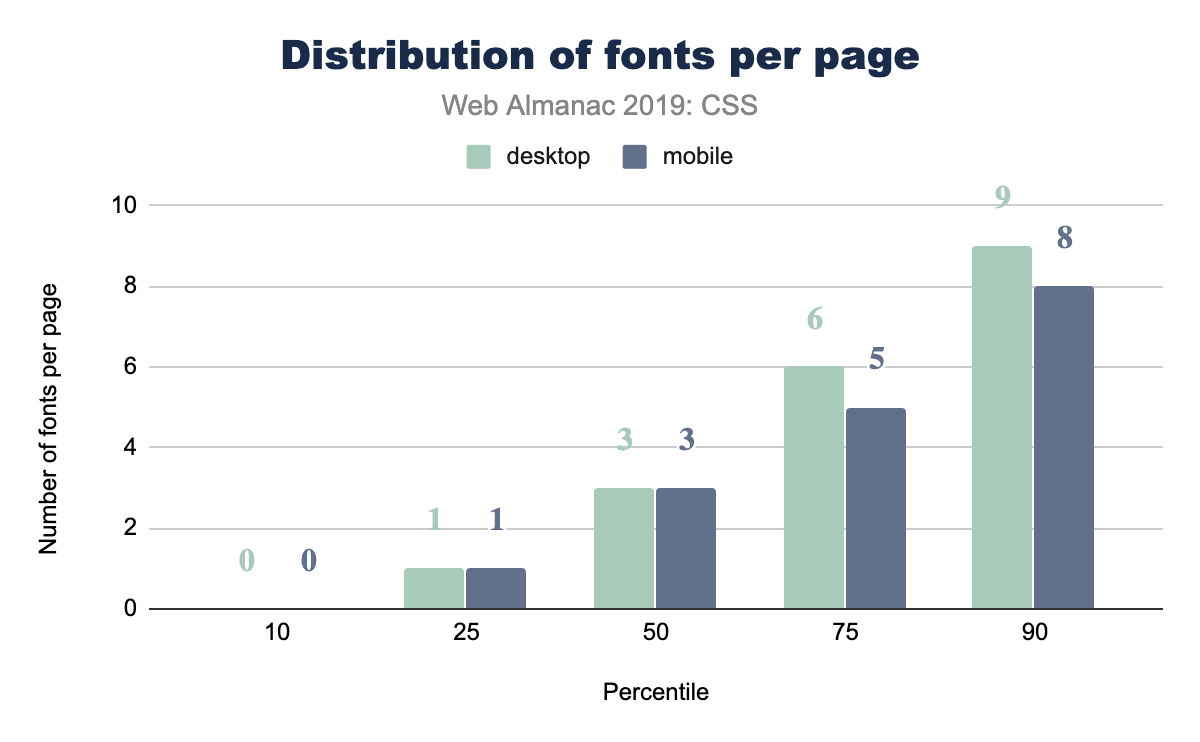

Web fonts per page

How many web fonts are you loading on your web page: 0? 10? The median number of web fonts per page is 3!

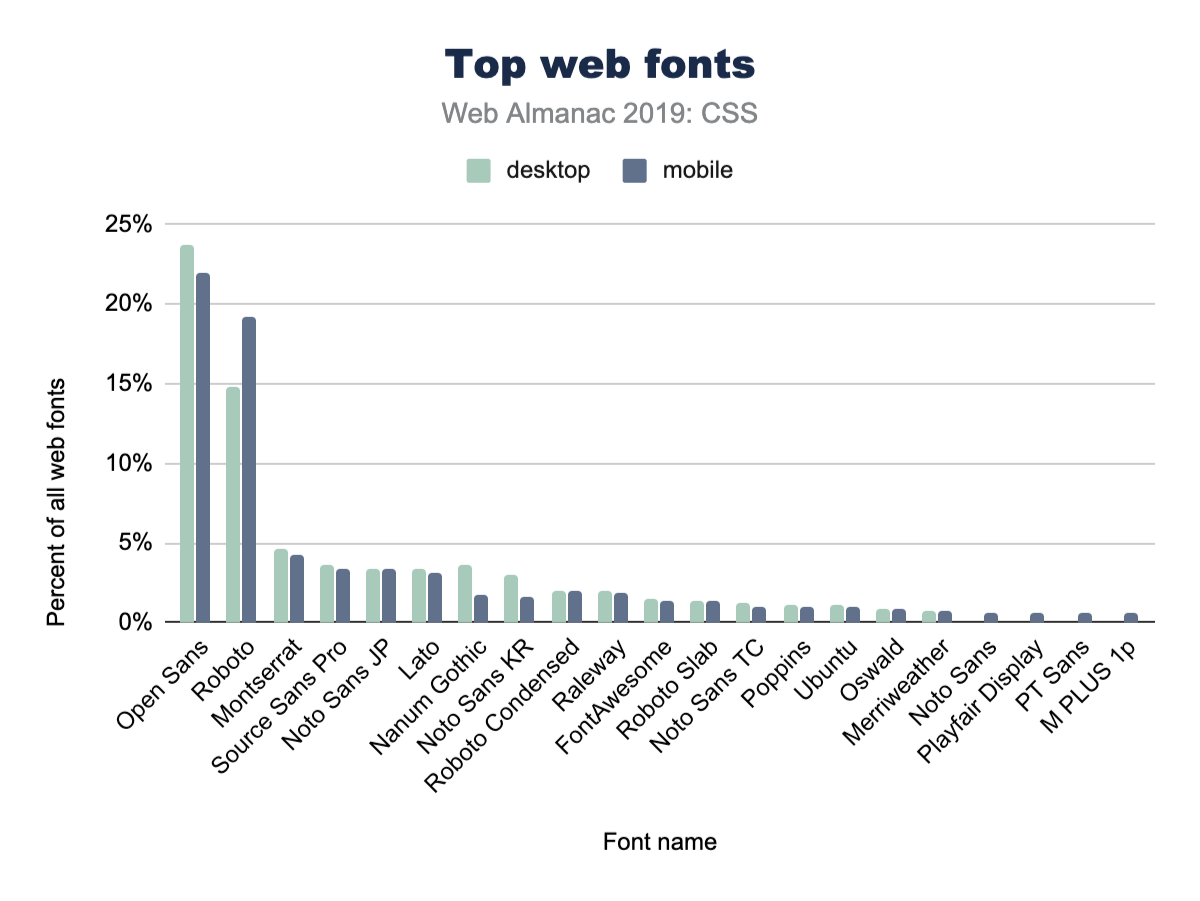

Popular font families

A natural follow up to the inquiry of total number of fonts per page, is: what fonts are they?! Designers, tune in, because you’ll now get to see if your choices are in line with what’s popular or not.

Open Sans is a huge winner here, with nearly 1 in 4 CSS font-family declarations specifying it. We’ve definitely used Open Sans in projects at agencies.

It’s also interesting to note the differences between desktop and mobile adoption. For example, mobile pages use Open Sans slightly less often than desktop. Meanwhile, they also use Roboto slightly more often.

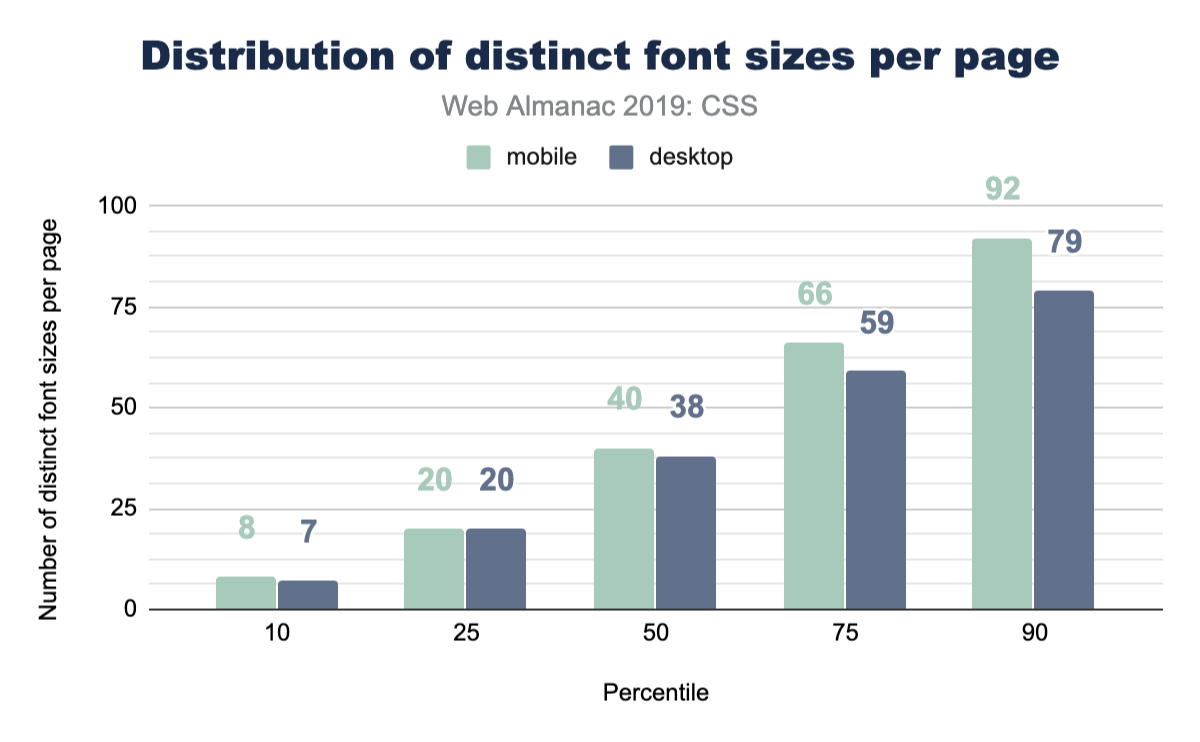

Font sizes

This is a fun one, because if you asked a user how many font sizes they feel are on a page, they’d generally return a number of 5 or definitely less than 10. Is that reality though? Even in a design system, how many font sizes are there? We queried the web and found the median to be 40 on mobile and 38 on desktop. Might be time to really think hard about custom properties or creating some reusable classes to help you distribute your type ramp.

Spacing

Margins

A margin is the space outside of elements, like the space you demand when you push your arms out from yourself. This often looks like the spacing between elements, but is not limited to that effect. In a website or app, spacing plays a huge role in UX and design. Let’s see how much margin spacing code goes into a stylesheet, shall we?

Quite a lot, it seems! The median desktop page has 96 distinct margin values and 104 on mobile. That makes for a lot of unique spacing moments in your design. Curious how many margins you have in your site? How can we make all this whitespace more manageable?

Logical properties

We estimate that the hegemony of margin-left and padding-top is of limited duration, soon to be supplemented by their writing-direction-agnostic successors: logical properties. While we’re optimistic, current usage is quite low, at 0.67% of desktop pages. To us, this feels like a habit change we’ll need to develop as an industry, while hopefully training new developers to use the new syntax.

z-index

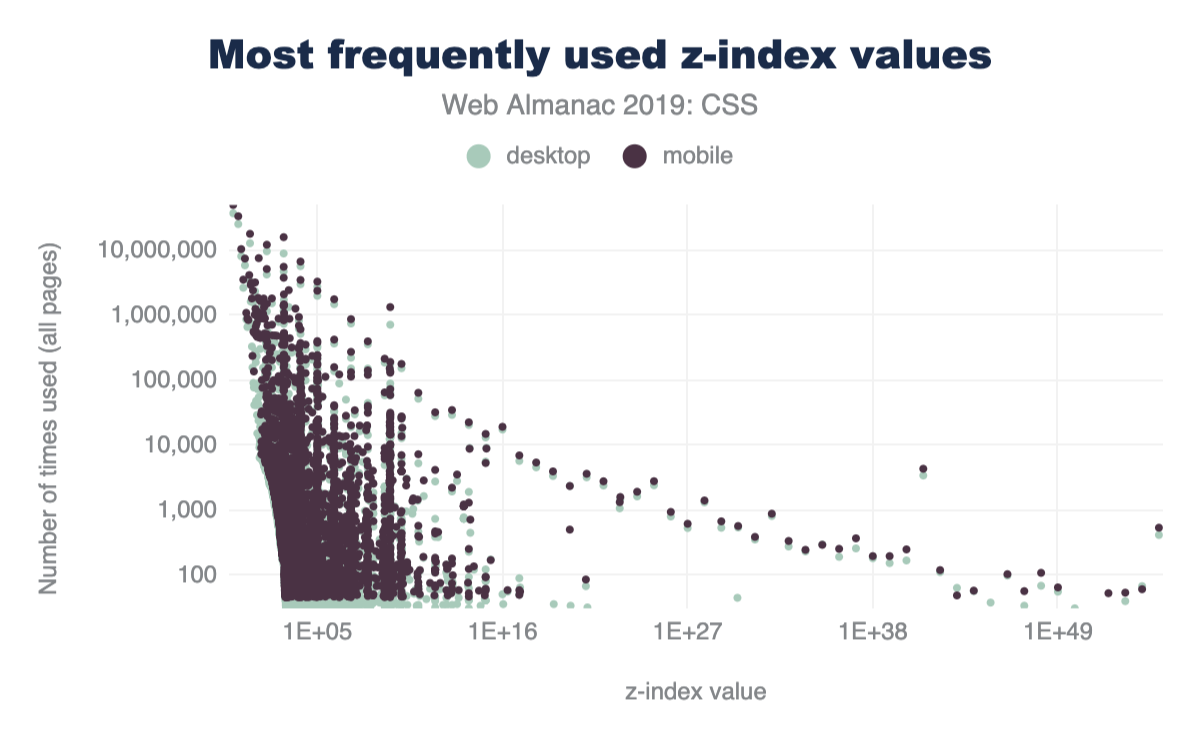

Vertical layering, or stacking, can be managed with z-index in CSS. We were curious how many different values folks use in their sites. The range of what z-index accepts is theoretically infinite, bounded only by a browser’s variable size limitations. Are all those stack positions used? Let’s see!


Popular z-index values

From our work experience, any number of 9’s seemed to be the most popular choice. Even though we taught ourselves to use the lowest number possible, that’s not the communal norm. So what is then?! If folks need things on top, what are the most popular z-index numbers to pass in? Put your drink down; this one is funny enough you might lose it.


Decoration

Filters

Filters are a fun and great way to modify the pixels the browser intends to draw to the screen. They are a post-processing effect applied against a flat version of the element, node, or layer they target. Photoshop made them easy to use, then Instagram made them accessible to the masses through bespoke, stylized combinations. They’ve been around since about 2012, there are 10 of them, and they can be combined to create unique effects.


We were excited to see that 78% of stylesheets contain the filter property! That number was also so high it seemed a little fishy, so we dug in and sought to explain the high number. Because let’s be honest, filters are neat, but they don’t make it into all of our applications and projects. Unless!

Upon further investigation, we discovered that the stylesheet for FontAwesome (https://fontawesome.com) comes with some filter usage, as does a YouTube (https://youtube.com) embed. Therefore, we believe filter snuck in the back door by piggybacking onto a couple very popular stylesheets. We also believe that -ms-filter presence could have been included as well, contributing to the high percent of use.

Blend modes

Blend modes are similar to filters in that they are a post-processing effect run against a flat version of their target elements, but they are unique in that they are concerned with pixel convergence. Said another way, blend modes define how two pixels should impact each other when they overlap. Whichever element is on the top or the bottom will affect the way that the blend mode manipulates the pixels. There are 16 blend modes; let’s see which ones are the most popular.

*-blend-mode property.

Overall, usage of blend modes is much lower than of filters, but is still enough to be considered moderately used.

In a future edition of the Web Almanac, it would be great to drill down into blend mode usage to get an idea of the exact modes developers are using, like multiply, screen, color-burn, lighten, etc.

Animation

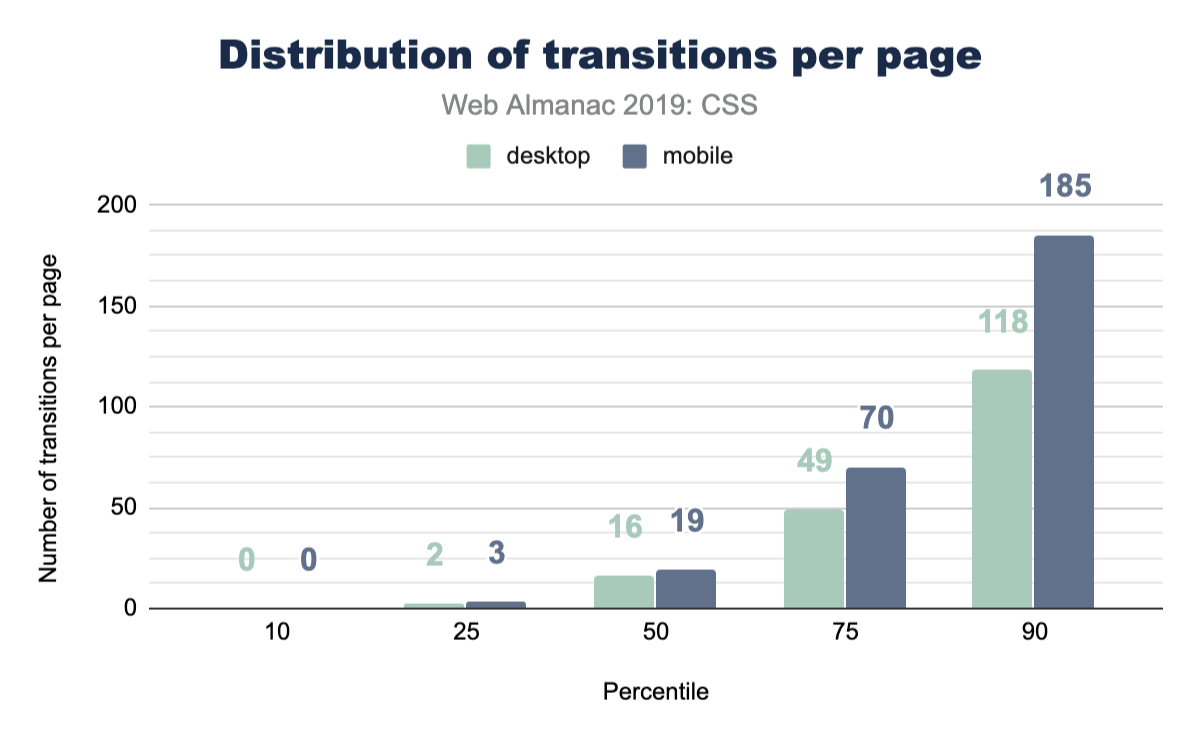

Transitions

CSS has this awesome interpolation power that can be simply used by just writing a single rule on how to transition those values. If you’re using CSS to manage states in your app, how often are you employing transitions to do the task? Let’s query the web!

That’s pretty good! We did see animate.css as a popular library to include, which brings in a ton of transition animations, but it’s still nice to see folks are considering transitioning their UIs.

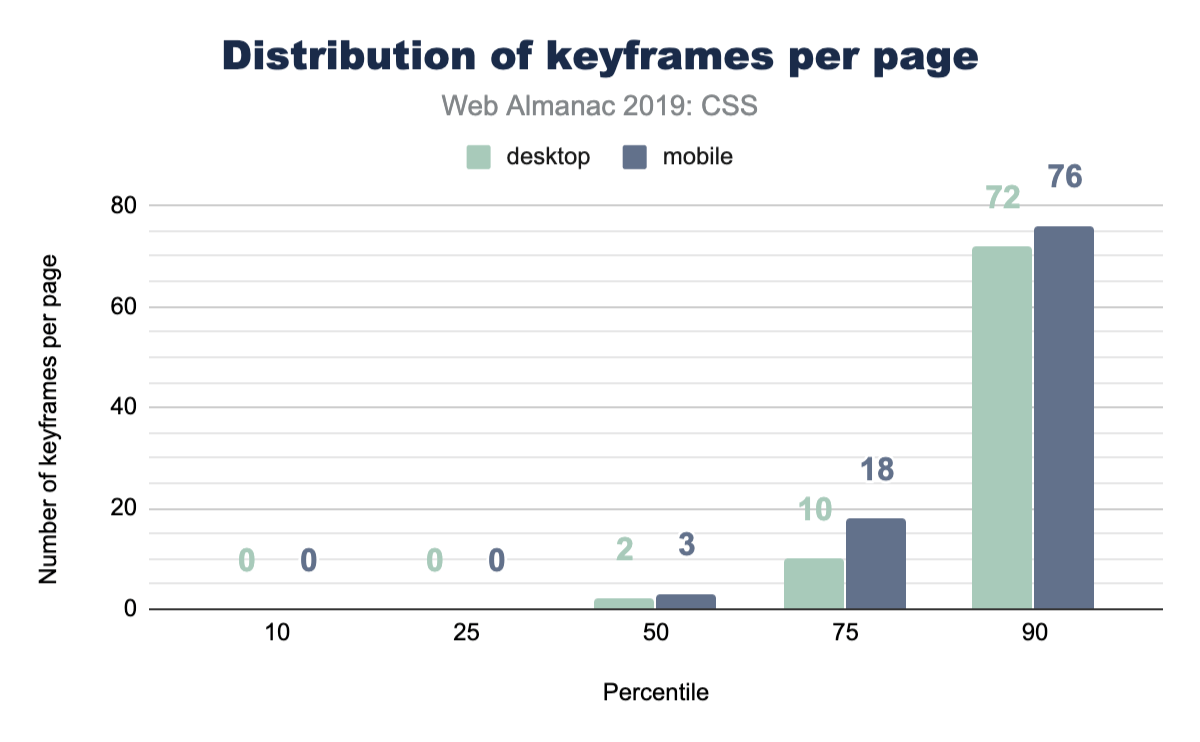

Keyframe animations

CSS keyframe animations are a great solution for your more complex animations or transitions. They allow you to be more explicit, which provides greater control over the effects. They can be small, like one keyframe effect, or large, with many keyframe effects composed into a robust animation. The median number of keyframe animations per page is much lower than CSS transitions.

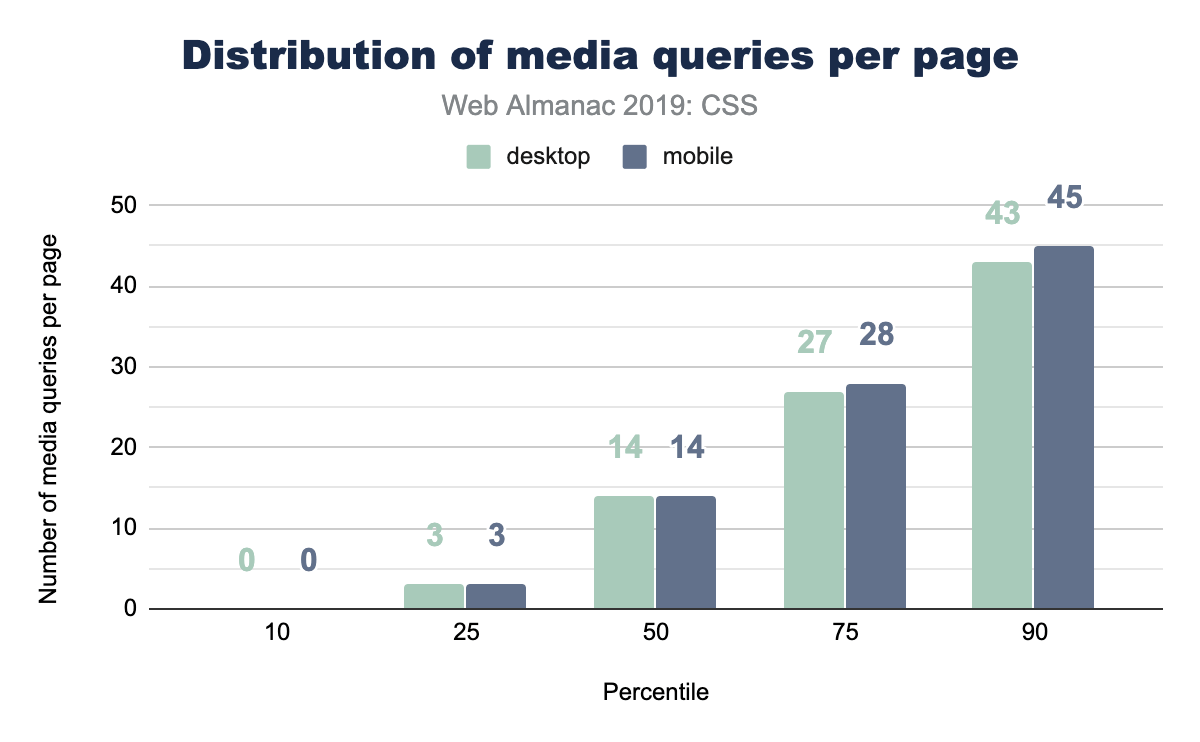

Media queries

Media queries let CSS hook into various system-level variables in order to adapt appropriately for the visiting user. Some of these queries could handle print styles, projector screen styles, and viewport/screen size. For a long time, media queries were primarily leveraged for their viewport knowledge. Designers and developers could adapt their layouts for small screens, large screens, and so forth. Later, the web started bringing more and more capabilities and queries, meaning media queries can now manage accessibility features on top of viewport features.

A good place to start with media queries is how many are used per page. How many different moments or contexts does the typical page want to respond to?

Popular media query breakpoint sizes

For viewport media queries, any type of CSS unit can be passed into the query expression for evaluation. In earlier days, folks would pass em and px into the query, but more units were added over time, making us very curious about what types of sizes were commonly found across the web. We assume most media queries will follow popular device sizes, but instead of assuming, let’s look at the data!

Figure 2.28 above shows that part of our assumptions were correct: there’s certainly a high amount of phone specific sizes in there, but there’s also some that aren’t. It’s interesting also how it’s very pixel dominant, with a few trickling entries using em beyond the scope of this chart.

Portrait vs landscape usage

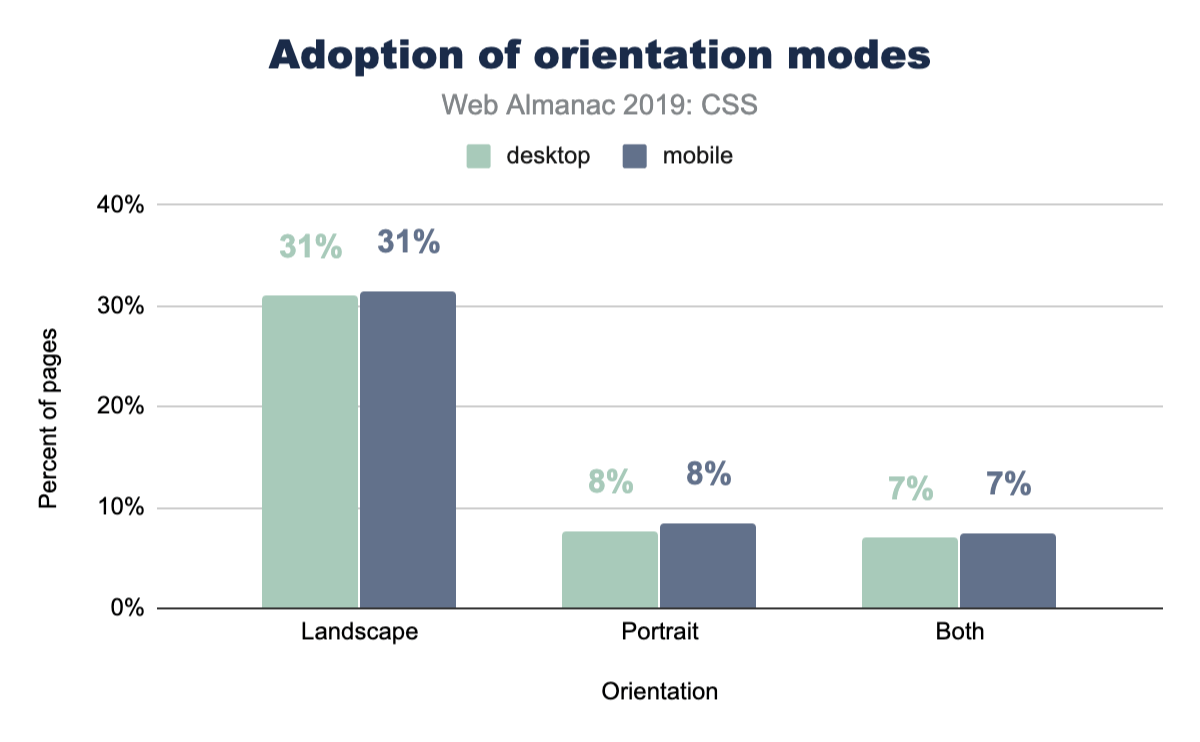

The most popular query value from the popular breakpoint sizes looks to be 768px, which made us curious. Was this value primarily used to switch to a portrait layout, since it could be based on an assumption that 768px represents the typical mobile portrait viewport? So we ran a follow up query to see the popularity of using the portrait and landscape modes:

Interestingly, portrait isn’t used very much, whereas landscape is used much more. We can only assume that 768px has been reliable enough as the portrait layout case that it’s reached for much less. We also assume that folks on a desktop computer, testing their work, can’t trigger portrait to see their mobile layout as easily as they can just squish the browser. Hard to tell, but the data is fascinating.

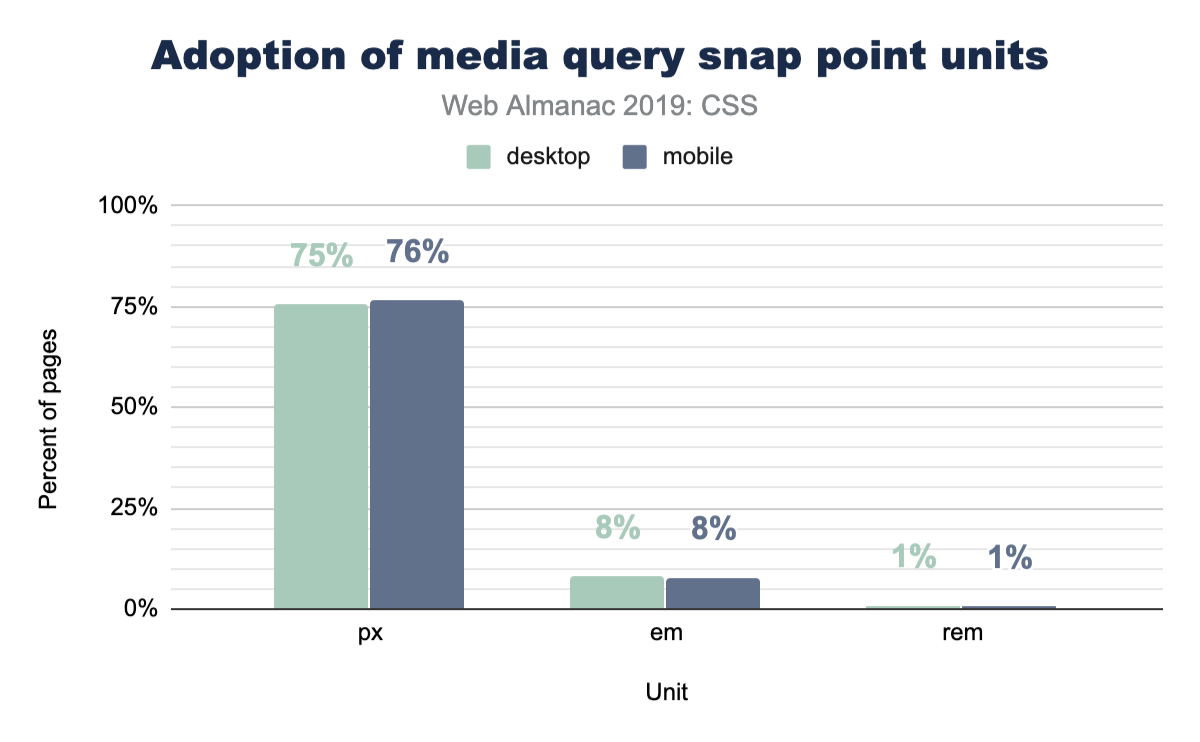

Most popular unit types

In the width and height media queries we’ve seen so far, pixels look like the dominant unit of choice for developers looking to adapt their UI to viewports. We wanted to exclusively query this though, and really take a look at the types of units folks use. Here’s what we found.

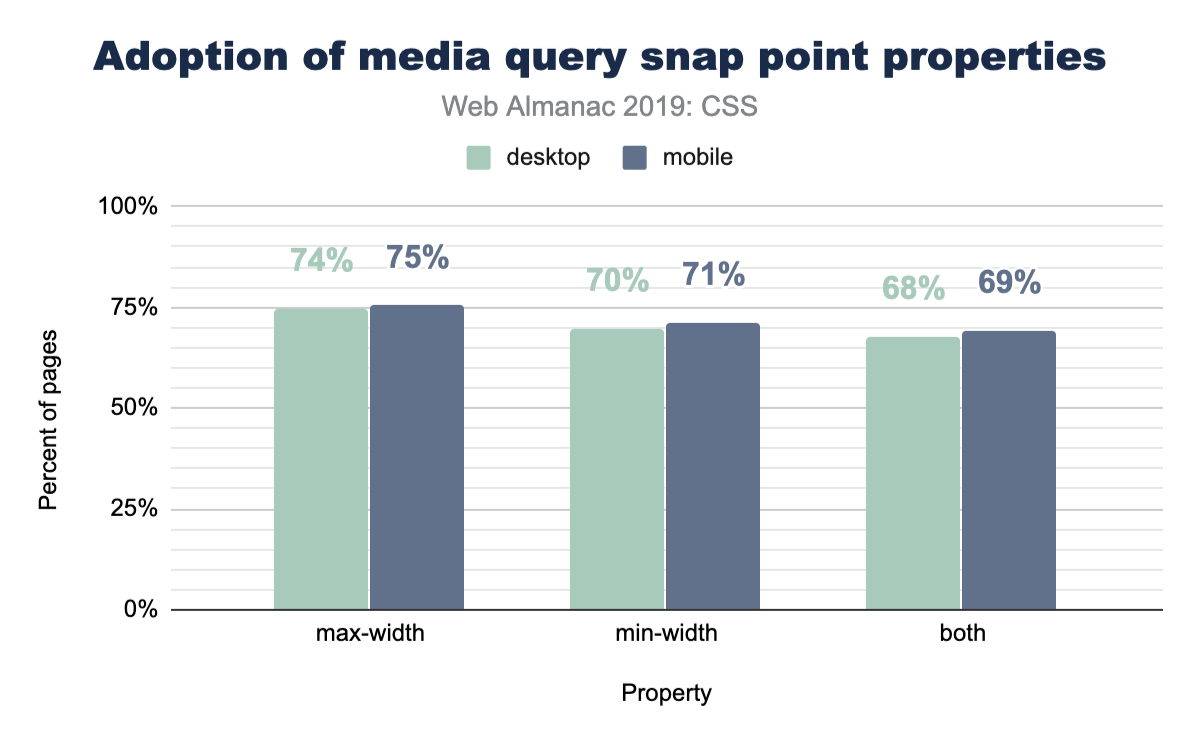

min-width vs max-width

When folks write a media query, are they typically checking for a viewport that’s over or under a specific range, or both, checking if it’s between a range of sizes? Let’s ask the web!

No clear winners here; max-width and min-width are nearly equally used.

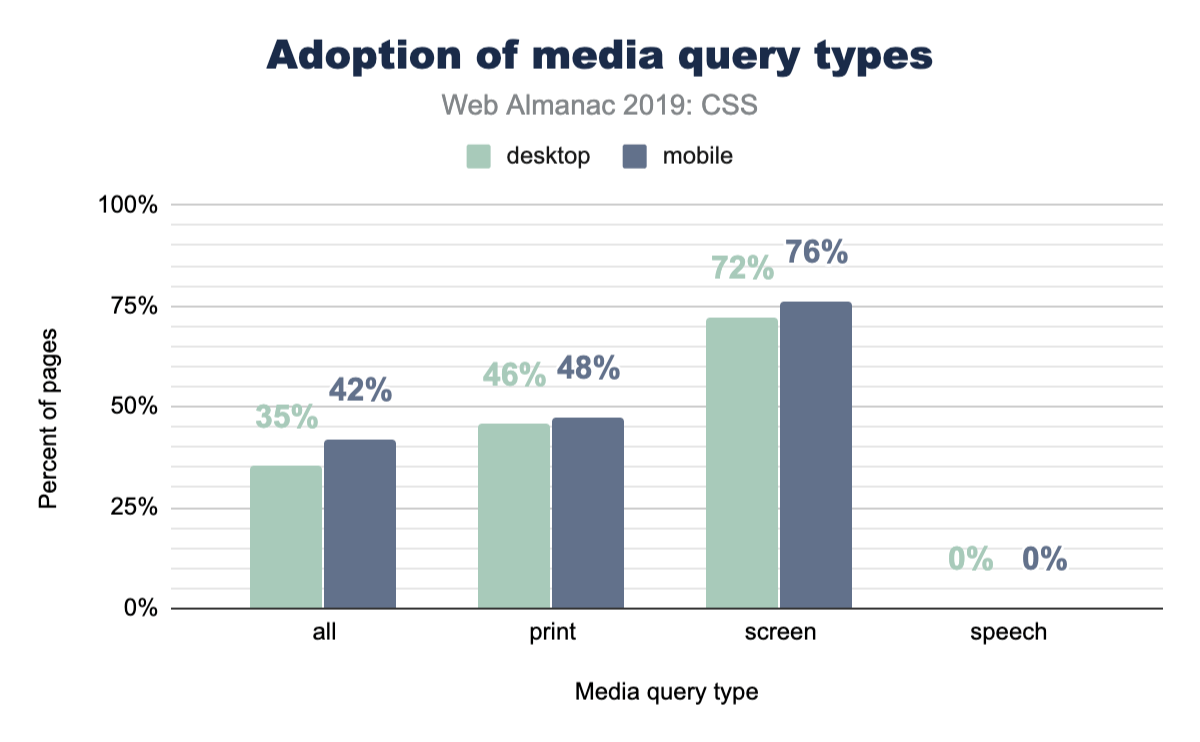

Print and speech

Websites feel like digital paper, right? As users, it’s generally known that you can just hit print from your browser and turn that digital content into physical content. A website isn’t required to change itself for that use case, but it can if it wants to! Lesser known is the ability to adjust your website in the use case of it being read by a tool or robot. So just how often are these features taken advantage of?

Page-level stats

Stylesheets

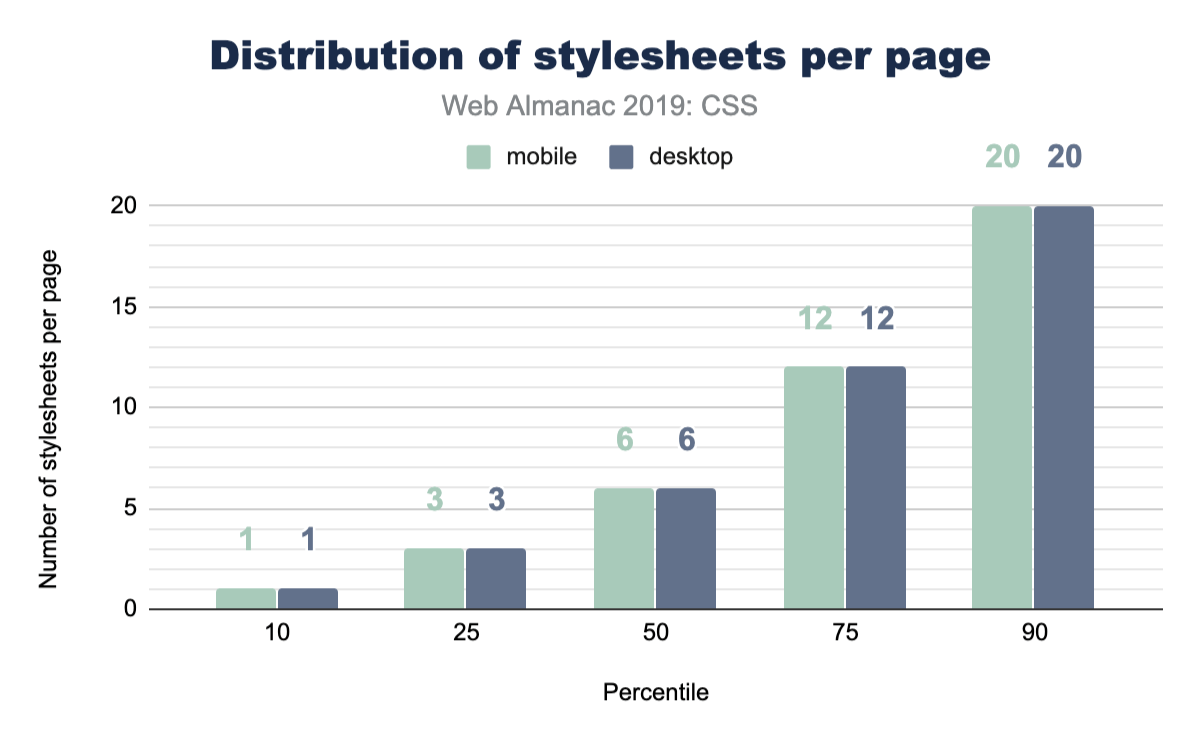

How many stylesheets do you reference from your home page? How many from your apps? Do you serve more or less to mobile vs desktop? Here’s a chart of everyone else!

Stylesheet names

What do you name your stylesheets? Have you been consistent throughout your career? Have you slowly converged or consistently diverged? This chart shows a small glimpse into library popularity, but also a large glimpse into popular names of CSS files.

| Stylesheet name | Desktop | Mobile |
|---|---|---|
| style.css | 2.43% | 2.55% |
| font-awesome.min.css | 1.86% | 1.92% |
| bootstrap.min.css | 1.09% | 1.11% |
| BfWyFJ2Rl5s.css | 0.67% | 0.66% |
| style.min.css?ver=5.2.2 | 0.64% | 0.67% |
| styles.css | 0.54% | 0.55% |
| style.css?ver=5.2.2 | 0.41% | 0.43% |
| main.css | 0.43% | 0.39% |
| bootstrap.css | 0.40% | 0.42% |
| font-awesome.css | 0.37% | 0.38% |
| style.min.css | 0.37% | 0.37% |
| styles__ltr.css | 0.38% | 0.35% |
| default.css | 0.36% | 0.36% |
| reset.css | 0.33% | 0.37% |
| styles.css?ver=5.1.3 | 0.32% | 0.35% |
| custom.css | 0.32% | 0.33% |
| print.css | 0.32% | 0.28% |
| responsive.css | 0.28% | 0.31% |

Look at all those creative file names! style, styles, main, default, all. One stood out though, do you see it? BfWyFJ2Rl5s.css takes the number four spot for most popular. We went researching it a bit and our best guess is that it’s related to Facebook “like” buttons. Do you know what that file is? Leave a comment, because we’d love to hear the story.

Stylesheet size

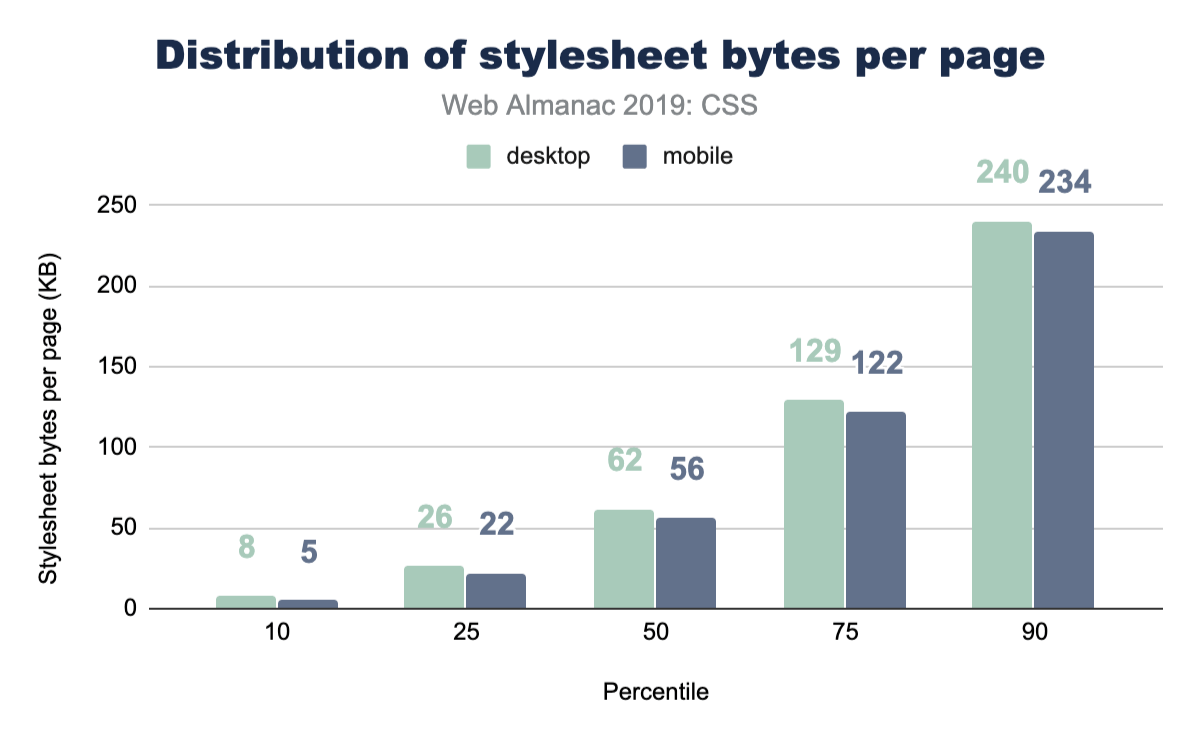

How big are these stylesheets? Is our CSS size something to worry about? Judging by this data, our CSS is not a main offender for page bloat.

See the Page Weight chapter for a more in-depth look at the number of bytes websites are loading for each content type.

Libraries

It’s common, popular, convenient, and powerful to reach for a CSS library to kick start a new project. While you may not be one to reach for a library, we’ve queried the web in 2019 to see which are leading the pack. If the results astound you, like they did us, it’s an interesting clue to just how small a developer bubble we can live in. Things can feel massively popular, but when the web is queried, reality is a bit different.

| Library | Desktop | Mobile |
|---|---|---|
| Bootstrap | 27.8% | 26.9% |
| animate.css | 6.1% | 6.4% |
| ZURB Foundation | 2.5% | 2.6% |
| UIKit | 0.5% | 0.6% |
| Material Design Lite | 0.3% | 0.3% |
| Materialize CSS | 0.2% | 0.2% |
| Pure CSS | 0.1% | 0.1% |
| Angular Material | 0.1% | 0.1% |
| Semantic-ui | 0.1% | 0.1% |
| Bulma | 0.0% | 0.0% |
| Ant Design | 0.0% | 0.0% |
| tailwindcss | 0.0% | 0.0% |
| Milligram | 0.0% | 0.0% |
| Clarity | 0.0% | 0.0% |

This chart suggests that Bootstrap (https://getbootstrap.com/) is a valuable library to know to assist with getting a job. Look at all the opportunity there is to help! It’s also worth noting that this is a positive signal chart only: the math doesn’t add up to 100% because not all sites are using a CSS framework. A little bit over half of all sites are not using a known CSS framework. Very interesting, no?!

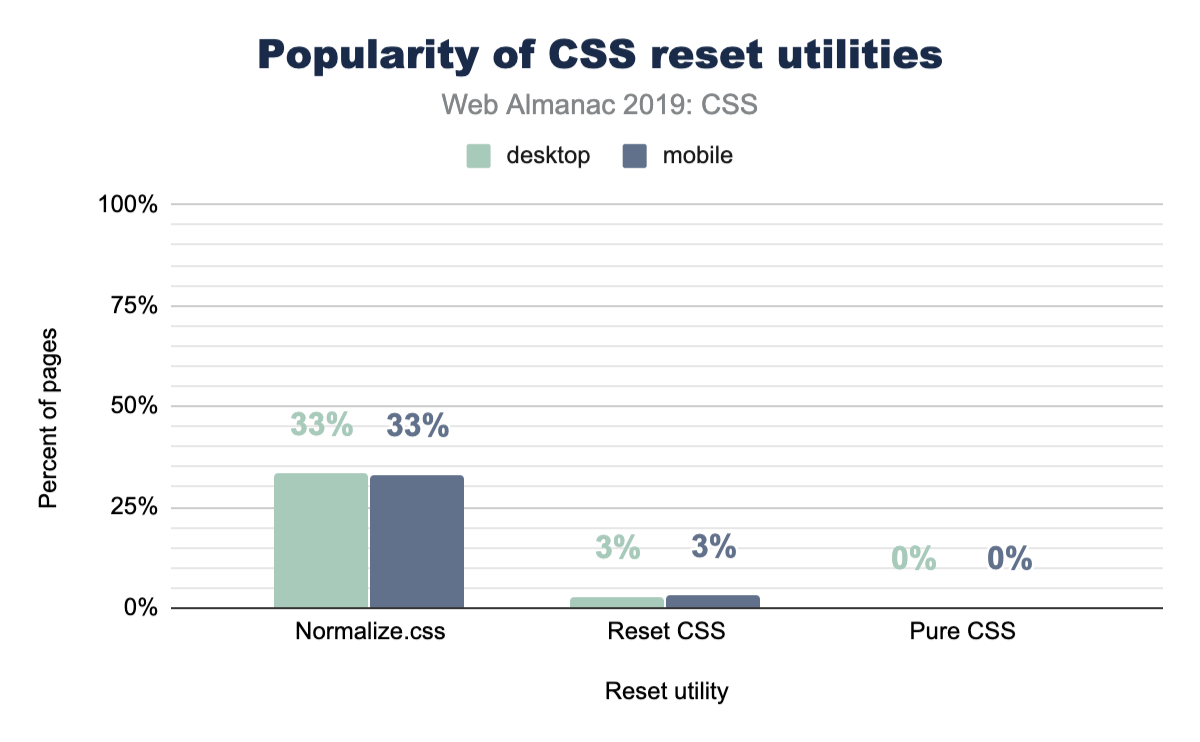

Reset utilities

CSS reset utilities intend to normalize or create a baseline for native web elements. In case you didn’t know, each browser serves its own stylesheet for all HTML elements, and each browser gets to make their own unique decisions about how those elements look or behave. Reset utilities have looked at these files, found their common ground (or not), and ironed out any differences so you as a developer can style in one browser and have reasonable confidence it will look the same in another.

So let’s take a peek at how many sites are using one! Their existence seems quite reasonable, so how many folks agree with their tactics and use them in their sites?

Turns out that about one-third of the web is using normalize.css, which could be considered a more gentle approach to the task than a reset is. We looked a little deeper, and it turns out that Bootstrap includes normalize.css, which likely accounts for a massive amount of its usage. It’s worth noting as well that normalize.css has more adoption than Bootstrap, so there are plenty of folks using it on its own.

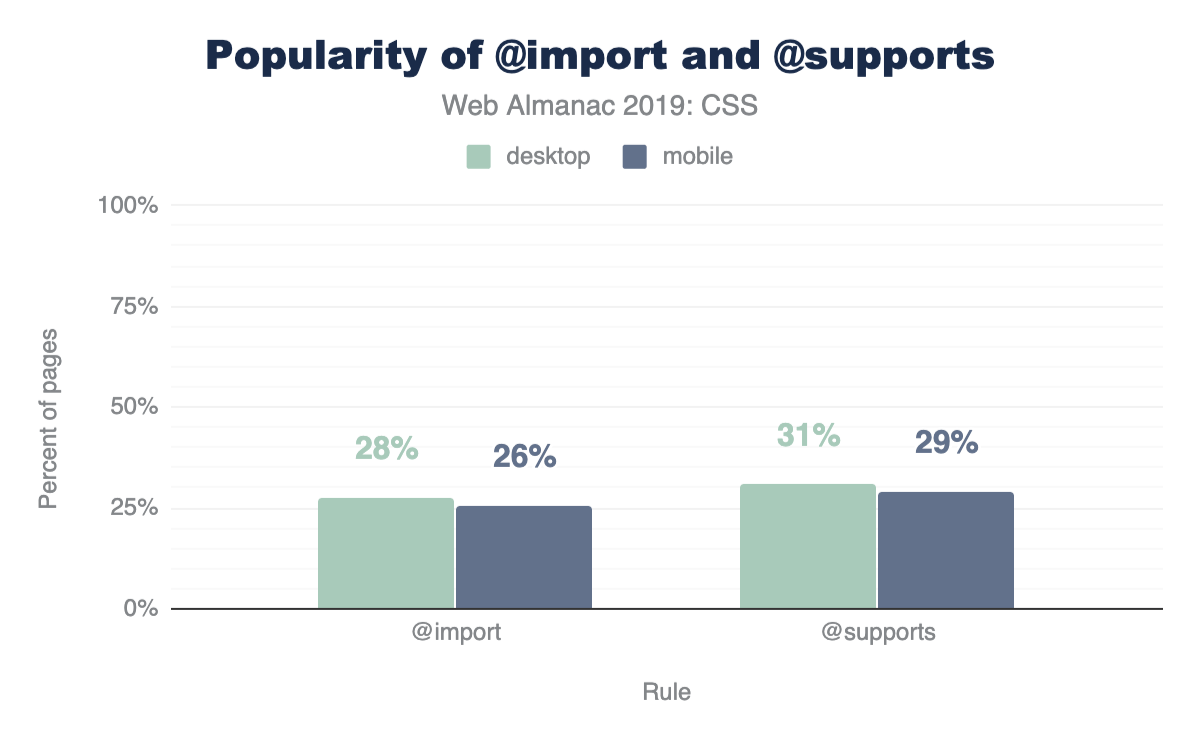

@supports and @import

CSS @supports is a way for the browser to check whether a particular property-value combination is parsed as valid, and then apply styles if the check returns as true.

Considering @supports was implemented across most browsers in 2013, it’s not too surprising to see a high amount of usage and adoption. We’re impressed at the mindfulness of developers here. This is considerate coding! 30% of all websites are checking for some display related support before using it.

An interesting follow up to this is that there’s more usage of @supports than @import! We did not expect that! @import has been in browsers since 1994.

Conclusion

There is so much more here to datamine! Many of the results surprised us, and we can only hope that they’ve surprised you as well. This surprising data set made the summarizing very fun, and left us with lots of clues and trails to investigate if we want to hunt down the reasons why some of the results are the way they are.

Which results did you find the most alarming? Which results make you head to your codebase for a quick query?

We felt the biggest takeaway from these results is that custom properties offer the most bang for your buck in terms of performance, DRYness, and scalability of your stylesheets. We look forward to scrubbing the internet’s stylesheets again, hunting for new datums and provocative chart treats. Reach out to @una or @argyleink in the comments with your queries, questions, and assertions. We’d love to hear them!

Markup

Introduction

In 2005, Ian “Hixie” Hickson posted some analysis of markup data (https://web.archive.org/web/20060203035414/http://code.google.com/webstats/index.html) building upon various previous work. Much of this work aimed to investigate class names to see if there were common informal semantics that were being adopted by developers which it might make sense to standardize upon. Some of this research helped inform new elements in HTML5.

14 years later, it’s time to take a fresh look. Since then, we’ve also had the introduction of Custom Elements (https://developer.mozilla.org/docs/Web/Web_Components/Using_custom_elements) and the Extensible Web Manifesto (https://extensiblewebmanifesto.org/) encouraging that we find better ways to pave the cowpaths by allowing developers to explore the space of elements themselves and allow standards bodies to act more like dictionary editors (https://bkardell.com/blog/Dropping-The-F-Bomb-On-Standards.html). Unlike CSS class names, which might be used for anything, we can be far more certain that authors who used a non-standard element really intended this to be an element.

As of July 2019, the HTTP Archive has begun collecting all used element names in the DOM for about 4.4 million desktop home pages, and about 5.3 million mobile home pages which we can now begin to research and dissect. (Learn more about our Methodology.)

This crawl encountered over 5,000 distinct non-standard element names in these pages, so we capped the total distinct number of elements that we count to the ’top’ (explained below) 5,048.

Methodology

Names of elements on each page were collected from the DOM itself, after the initial run of JavaScript.

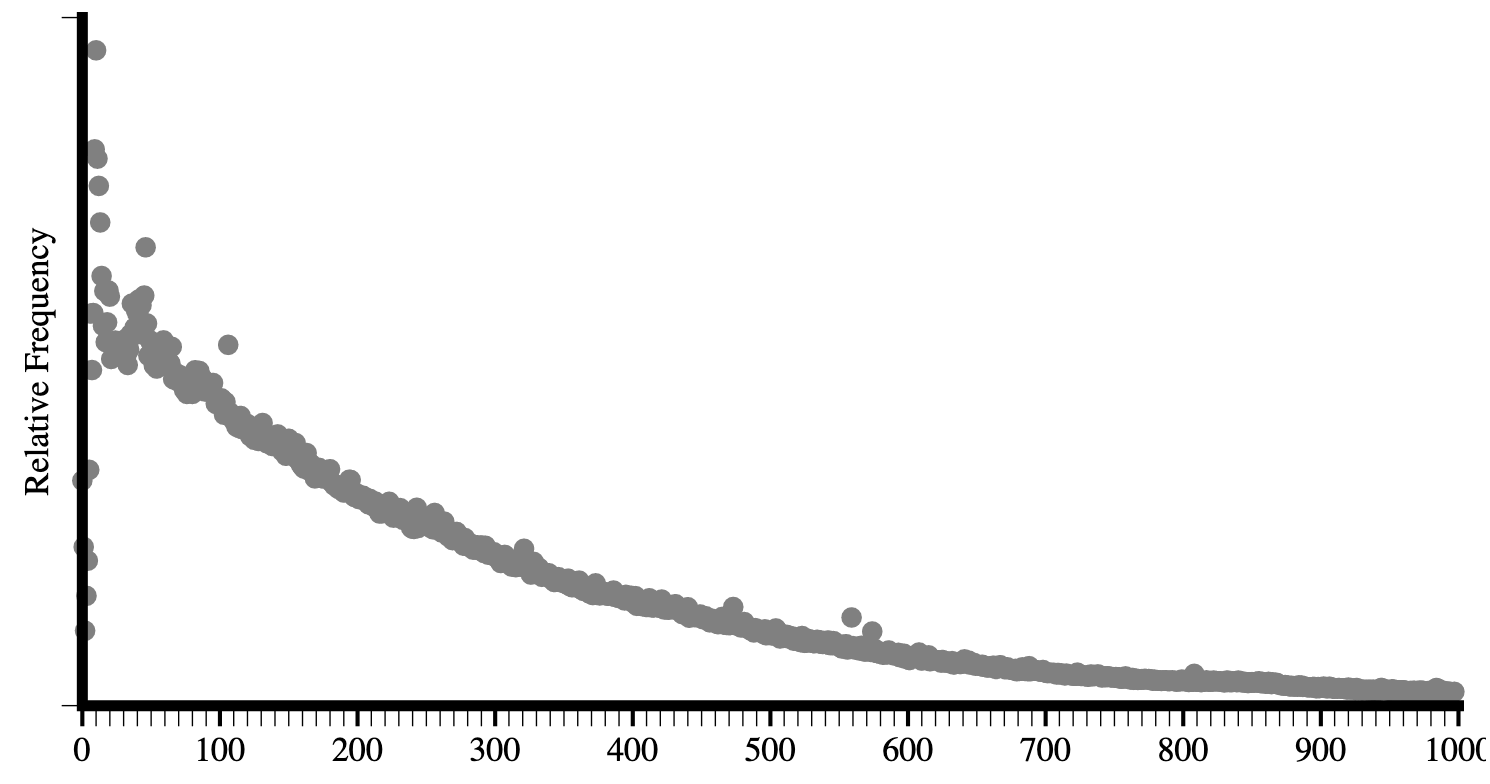

Looking at a raw frequency count isn’t especially helpful, even for standard elements: About 25% of all elements encountered are <div>. About 17% are <a>, about 11% are <span>, and those are the only elements that account for more than 10% of occurrences. Languages are generally like this (https://www.youtube.com/watch?v=fCn8zs912OE); a small number of terms are used astoundingly often by comparison. Further, when we start looking at non-standard elements for uptake, this would be very misleading as one site could use a certain element a thousand times and thus make it look artificially very popular.

Instead, as in Hixie’s original study, what we will look at is how many sites include each element at least once in their home page.
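To make the difference between the two counting methods concrete, here is a minimal sketch. The `pages` data and the `my-widget` element are hypothetical, invented purely for illustration; this is not the query that was run against the HTTP Archive.

```python
from collections import Counter

# Hypothetical pages: each is the list of element names found in its DOM.
pages = [
    ["html", "head", "body", "div", "div", "div", "a"],
    ["html", "head", "body", "p", "a", "a"],
    ["html", "head", "body"] + ["my-widget"] * 1000,
]

def raw_frequency(pages):
    """Count every occurrence of every element across all pages."""
    counts = Counter()
    for elements in pages:
        counts.update(elements)
    return counts

def page_presence(pages):
    """Count the number of pages on which each element appears at least once."""
    counts = Counter()
    for elements in pages:
        counts.update(set(elements))  # de-duplicate within a page
    return counts

frequency = raw_frequency(pages)
presence = page_presence(pages)
print(frequency["my-widget"], presence["my-widget"])
```

In this toy data, a single page pushes `my-widget` to the top of the raw frequency count, while the per-page count shows that it appears on only one page.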

Top elements and general info

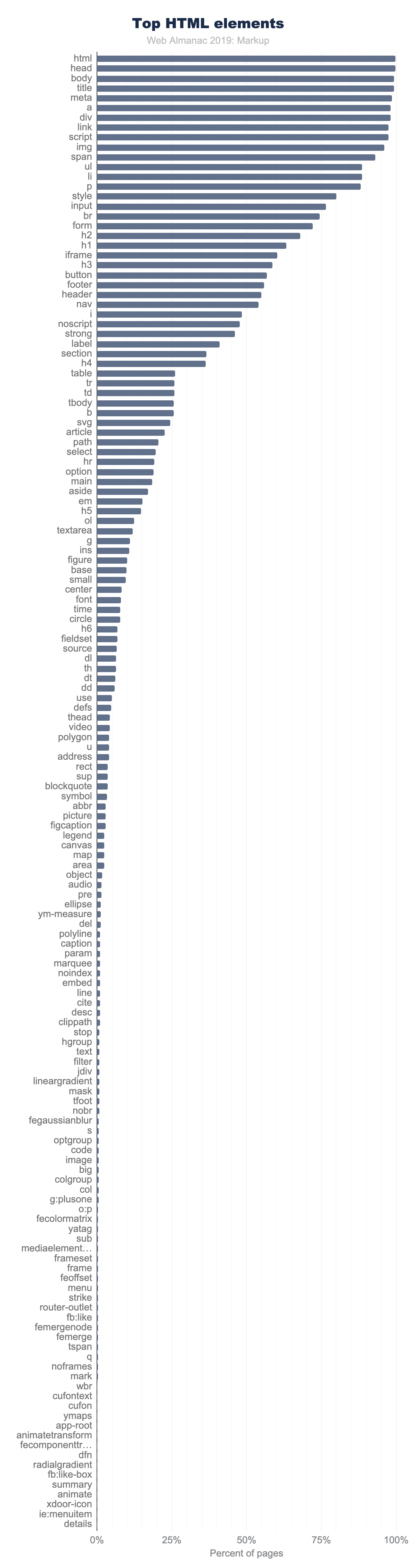

In 2005, Hixie’s survey listed the top few most commonly used elements on pages. The top 3 were html, head and body which he noted as interesting because they are optional and created by the parser if omitted. Given that we use the post-parsed DOM, they’ll show up universally in our data. Thus, we’ll begin with the 4th most used element. Below is a comparison of the data from then to now (I’ve included the frequency comparison here as well just for fun).

| 2005 (per site) | 2019 (per site) | 2019 (frequency) |
|---|---|---|
| title | title | div |
| a | meta | a |
| img | a | span |
| meta | div | li |
| br | link | img |
| table | script | script |
| td | img | p |
| tr | span | option |

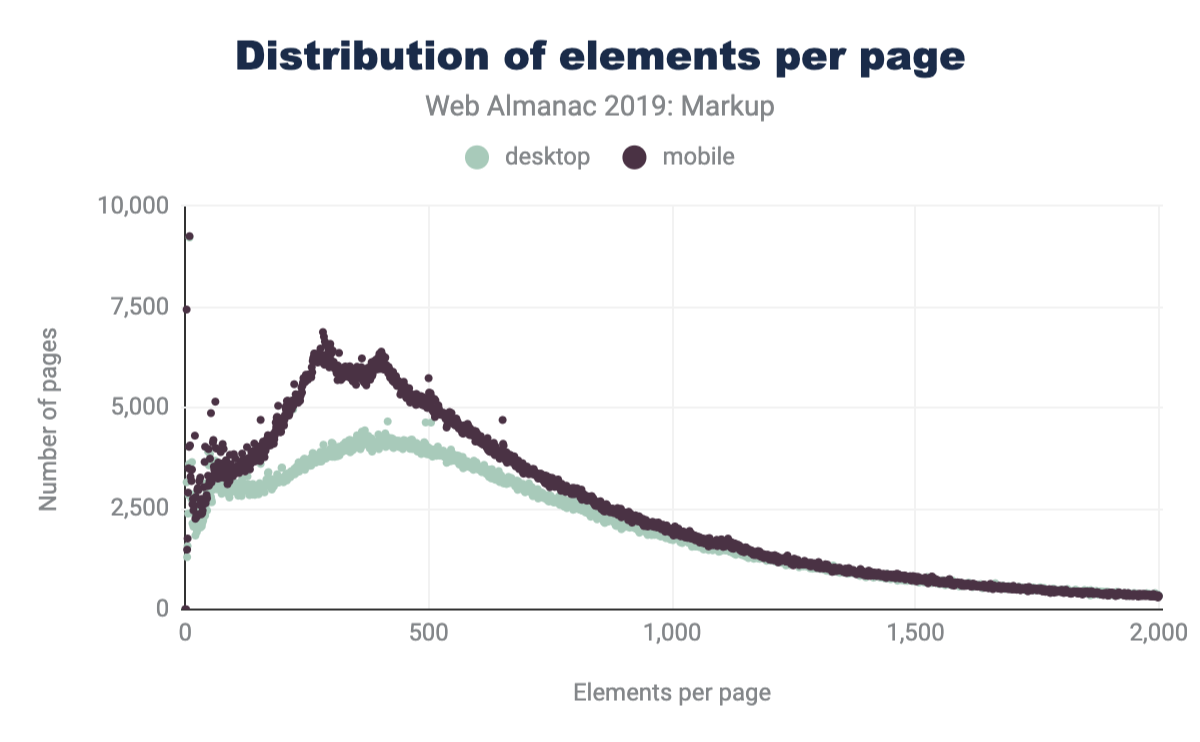

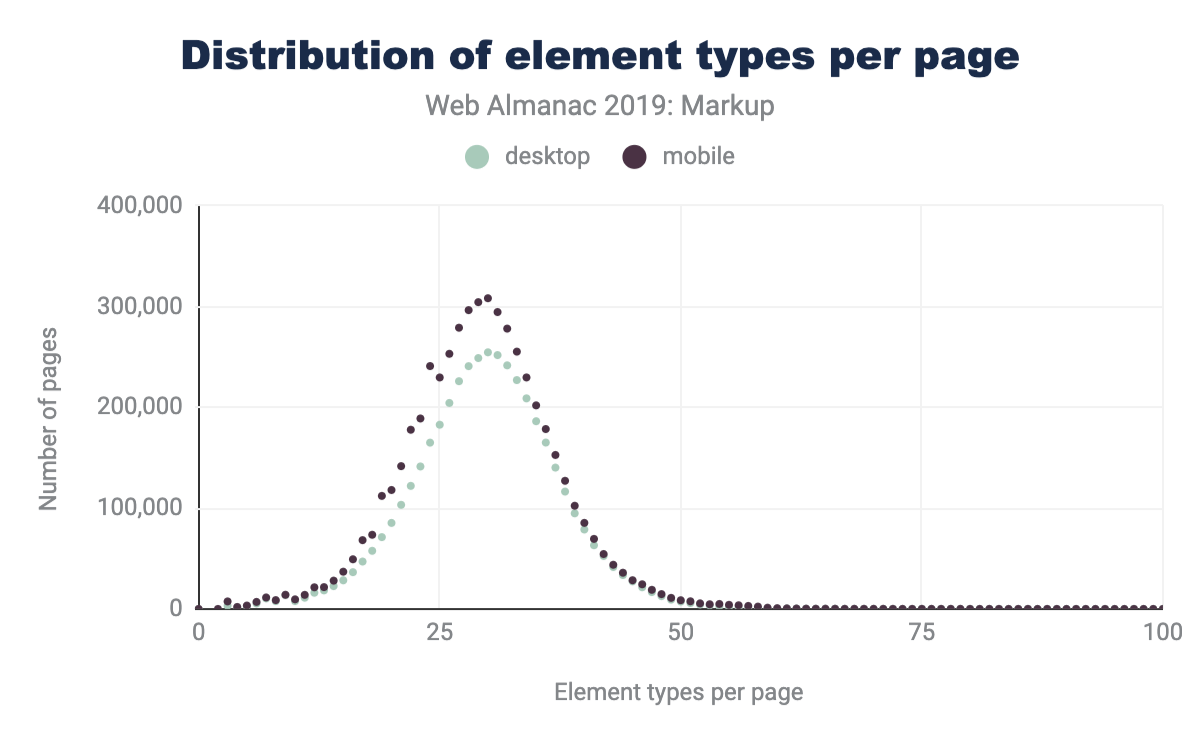

Elements per page

Comparing the latest data in Figure 3.3 to that of Hixie’s report from 2005 in Figure 3.2, we can see that the average size of DOM trees has gotten bigger.

We can see that both the average number of element types per page and the maximum number of unique elements that we encounter have increased.

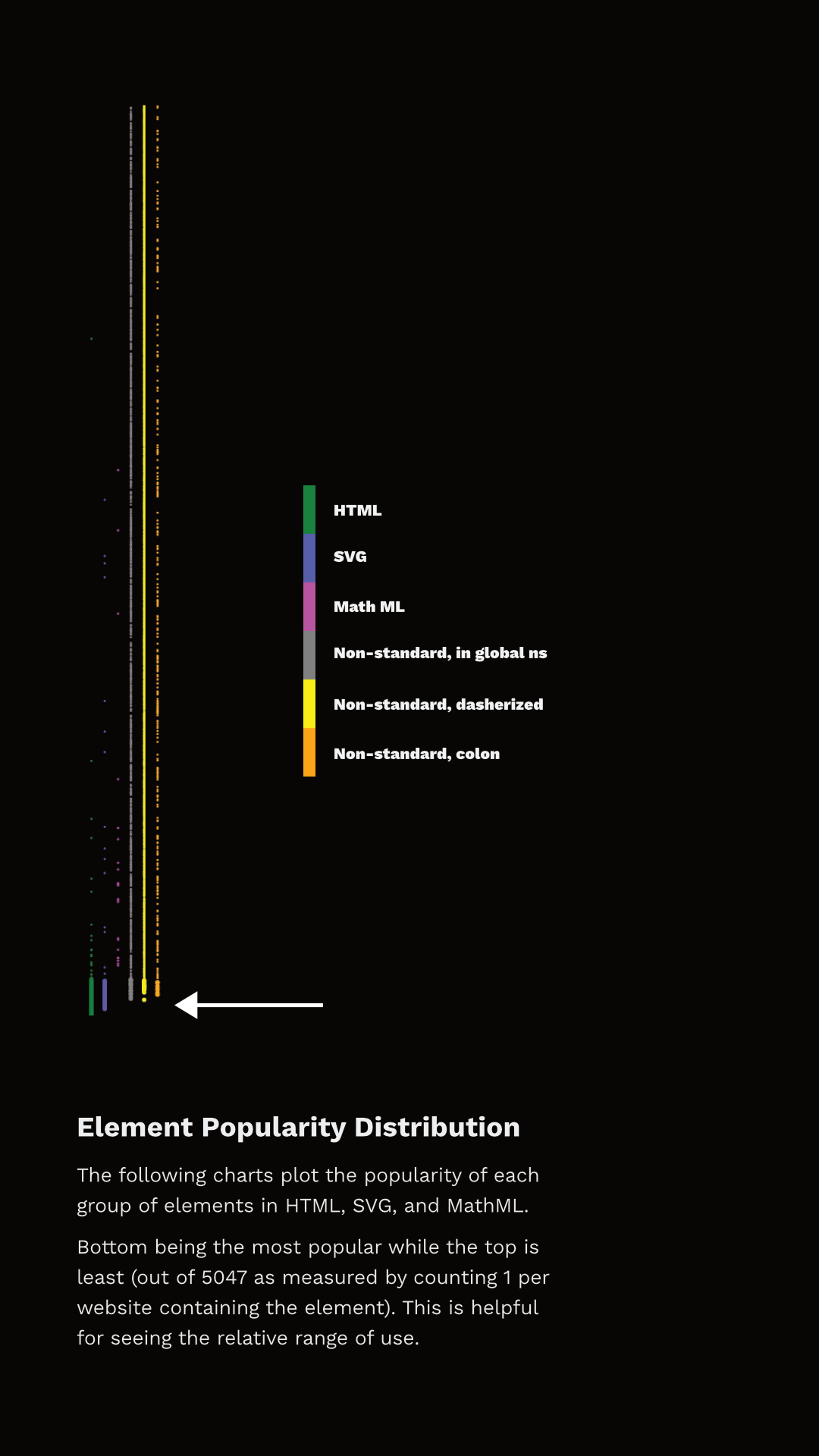

Custom elements

Most of the elements we recorded are custom (as in simply ’not standard’), but discussing which elements are and are not custom can get a little challenging. Written down in some spec or proposal somewhere are, actually, quite a few elements. For purposes here, we considered 244 elements as standard (though, some of them are deprecated or unsupported):

- 145 Elements from HTML

- 68 Elements from SVG

- 31 Elements from MathML

In practice, we encountered only 214 of these:

- 137 from HTML

- 54 from SVG

- 23 from MathML

In the desktop dataset we collected data for the top 4,834 non-standard elements that we encountered. Of these:

- 155 (3%) are identifiable as very probable markup or escaping errors (they contain characters in the parsed tag name which imply that the markup is broken)

- 341 (7%) use XML-style colon namespacing (though, as HTML, they don’t use actual XML namespaces)

- 3,207 (66%) are valid custom element names

- 1,211 (25%) are in the global namespace (non-standard, having neither dash, nor colon)

  - 216 of these we have flagged as possible typos as they are longer than 2 characters and have a Levenshtein distance of 1 from some standard element name, like <cript>, <spsn> or <artice>. Some of these (like <jdiv>), however, are certainly intentional.
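The typo check described above can be sketched roughly as follows. This is an illustration under stated assumptions rather than the code used for the analysis: `STANDARD_SAMPLE` is a tiny hand-picked subset of standard names (not the 244 considered in the study), and `possible_typo_of` is a hypothetical helper name.

```python
def levenshtein(a: str, b: str) -> int:
    """Classic dynamic-programming edit distance (insert, delete, substitute)."""
    previous = list(range(len(b) + 1))
    for i, char_a in enumerate(a, start=1):
        current = [i]
        for j, char_b in enumerate(b, start=1):
            cost = 0 if char_a == char_b else 1
            current.append(min(
                previous[j] + 1,         # deletion
                current[j - 1] + 1,      # insertion
                previous[j - 1] + cost,  # substitution
            ))
        previous = current
    return previous[-1]

# Illustrative subset only; an assumption made for this example.
STANDARD_SAMPLE = {"script", "span", "article", "div", "a", "p"}

def possible_typo_of(name: str, standard=STANDARD_SAMPLE):
    """Return standard names exactly one edit away, for names longer than 2 characters."""
    if len(name) <= 2 or name in standard:
        return []
    return sorted(s for s in standard if levenshtein(name, s) == 1)

for candidate in ["cript", "spsn", "artice", "jdiv"]:
    print(candidate, possible_typo_of(candidate))
```

Note that `jdiv` is flagged as one edit away from `div`, which shows why a distance check alone cannot separate genuine typos from intentional names.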

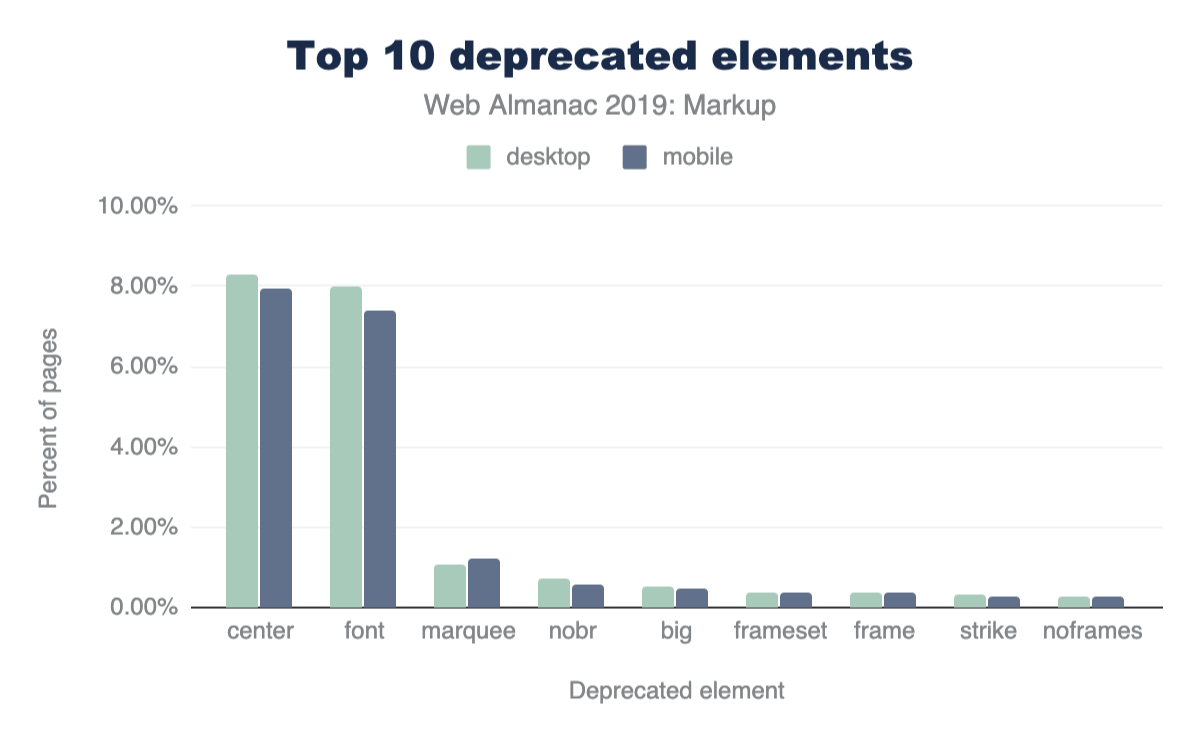

Additionally, 15% of desktop pages and 16% of mobile pages contain deprecated elements.

Figure 3.6 above shows the top 10 most frequently used deprecated elements. Most of these can seem like very small numbers, but perspective matters.

Perspective on value and usage

In order to discuss numbers about the use of elements (standard, deprecated or custom), we first need to establish some perspective.

In Figure 3.7 above, the top 150 element names, counting the number of pages where they appear, are shown. Note how quickly use drops off.

Only 11 elements are used on more than 90% of pages:

<html>, <head>, <body>, <title>, <meta>, <a>, <div>, <link>, <script>, <img>, <span>

There are only 15 other elements that occur on more than 50% of pages:

<ul>, <li>, <p>, <style>, <input>, <br>, <form>, <h2>, <h1>, <iframe>, <h3>, <button>, <footer>, <header>, <nav>

And there are only 40 other elements that occur on more than 5% of pages.

Even <video>, for example, doesn’t make that cut. It appears on only 4% of desktop pages in the dataset (3% on mobile). While these numbers sound very low, 4% is actually quite popular by comparison. In fact, only 98 elements occur on more than 1% of pages.

It’s interesting, then, to see what the distribution of these elements looks like and which ones have more than 1% use.

Figure 3.8 shows the rank of each element and which category they fall into. I’ve separated the data points into discrete sets simply so that they can be viewed (otherwise there just aren’t enough pixels to capture all that data), but they represent a single ’line’ of popularity; the bottom-most being the most common, the top-most being the least common. The arrow points to the end of elements that appear in more than 1% of the pages.

You can observe two things here. First, the set of elements that have more than 1% use are not exclusively HTML. In fact, 27 of the most popular 100 elements aren’t even HTML - they are SVG! And there are non-standard tags at or very near that cutoff too! Second, note that a whole lot of HTML elements are used by less than 1% of pages.

So, are all of those elements used by less than 1% of pages “useless”? Definitely not. This is why establishing perspective matters. There are around two billion web sites on the web (https://www.websitehostingrating.com/internet-statistics-facts/). If something appears on 0.1% of all websites in our dataset, we can extrapolate that this represents perhaps two million web sites in the whole web. Even 0.01% extrapolates to two hundred thousand sites. This is also why removing support for elements, even very old ones which we think aren’t great ideas, is a very rare occurrence. Breaking hundreds of thousands or millions of sites just isn’t a thing that browser vendors can do lightly.
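The extrapolation above is simple arithmetic, sketched here for anyone who wants to plug in other percentages. The two-billion figure is the estimate cited above, and `extrapolate_sites` is a hypothetical helper name; the result is only as good as the assumption that the dataset is representative of the whole web.

```python
TOTAL_SITES_ESTIMATE = 2_000_000_000  # "around two billion web sites", as cited above

def extrapolate_sites(percent_of_dataset: float,
                      total_sites: int = TOTAL_SITES_ESTIMATE) -> int:
    """Scale a percentage observed in the dataset up to an estimated site count."""
    return round(total_sites * percent_of_dataset / 100)

print(extrapolate_sites(0.1))   # 0.1% of sites
print(extrapolate_sites(0.01))  # 0.01% of sites
```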

Many elements, even the native ones, appear on fewer than 1% of pages and are still very important and successful. <code>, for example, is an element that I both use and encounter a lot. It’s definitely useful and important, and yet it is used on only 0.57% of these pages. Part of this is skewed based on what we are measuring; home pages are generally less likely to include certain kinds of things (like <code> for example). Such elements serve a less general purpose than, for example, headings, paragraphs, links and lists. However, the data is generally useful.

We also collected information about which pages contained an author-defined (not native) .shadowRoot. About 0.22% of desktop pages and 0.15% of mobile pages had a shadow root. This might not sound like a lot, but it is roughly 6.5k sites in the mobile dataset and 10k sites on the desktop and is more than several HTML elements. <summary> for example, has about equivalent use on the desktop and it is the 146th most popular element. <datalist> appears on 0.04% of home pages and it’s the 201st most popular element.

In fact, over 15% of elements we’re counting as defined by HTML are outside the top 200 in the desktop dataset. <meter> is the least popular “HTML5 era” element, which we can define as 2004-2011, before HTML moved to a Living Standard model. It is around the 1,000th most popular element. <slot>, the most recently introduced element (April 2016), is only around the 1,400th most popular element.

Lots of data: real DOM on the real web

With this perspective in mind about what use of native/standard features looks like in the dataset, let’s talk about the non-standard stuff.

You might expect that many of the elements we measured are used only on a single web page, but in fact all of the 5,048 elements appear on more than one page. The fewest pages an element in our dataset appears on is 15. About a fifth of them occur on more than 100 pages. About 7% occur on more than 1,000 pages.

To help analyze the data, I hacked together a little tool with Glitch (https://rainy-periwinkle.glitch.me). You can use this tool yourself, and please share a permalink back with the @HTTPArchive along with your observations. (Tommy Hodgins has also built a similar CLI tool (https://github.com/tomhodgins/hade) which you can use to explore.)

Let’s look at some data.

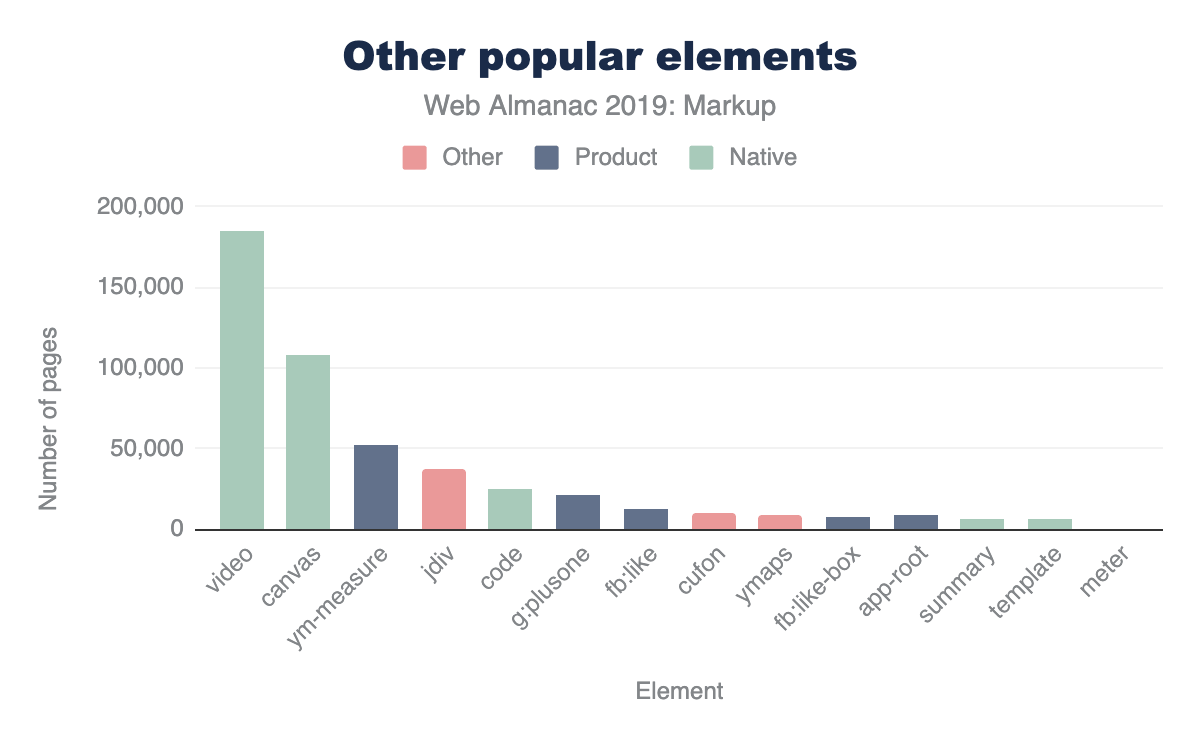

Products (and libraries) and their custom markup

For several non-standard elements, their prevalence may have more to do with their inclusion in popular third-party tools than first-party adoption. For example, the <fb:like> element is found on 0.3% of pages not because site owners are explicitly writing it out but because they include the Facebook widget. Many of the elements Hixie mentioned 14 years ago (https://web.archive.org/web/20060203031245/http://code.google.com/webstats/2005-12/editors.html) seem to have dwindled, but others are still pretty huge:

- Popular elements created by Claris Home Page (https://en.wikipedia.org/wiki/Claris_Home_Page), whose last stable release was 21 years ago, still appear on over 100 pages. <x-claris-window>, for example, appears on 130 pages.

- Some of the <actinic:*> elements from British ecommerce provider Oxatis (https://www.oxatis.co.uk) appear on even more pages. For example, <actinic:basehref> still shows up on 154 pages in the desktop data.

- Macromedia’s elements seem to have largely disappeared. Only one element, <mm:endlock>, appears on our list and on only 22 pages.

- Adobe Go-Live’s <csscriptdict> still appears on 640 pages in the desktop dataset.

- Microsoft Office’s <o:p> element still appears on 0.5% of desktop pages, over 20k pages.

But there are plenty of newcomers that weren’t in Hixie’s original report too, and with even bigger numbers.

- <ym-measure> is a tag injected by Yandex’s Metrica analytics package (https://www.npmjs.com/package/yandex-metrica-watch). It’s used on more than 1% of desktop and mobile pages, solidifying its place in the top 100 most used elements. That’s huge!

- <g:plusone> from the now-defunct Google Plus occurs on over 21k pages.

- Facebook’s <fb:like> occurs on 14k mobile pages.

- Similarly, <fb:like-box> occurs on 7.8k mobile pages.

- <app-root>, which is generally included in frameworks like Angular, appears on 8.2k mobile pages.

Let’s compare these to a few of the native HTML elements that are below the 5% bar, for perspective.

You could discover interesting insights like these all day long.

Here’s one that’s a little different: popular elements could be caused by outright errors in products. For example, <pclass="ddc-font-size-large"> occurs on over 1,000 sites. This was thanks to a missing space in a popular “as-a-service” kind of product. Happily, we reported this error during our research and it was quickly fixed.

In his original paper, Hixie mentions that:

The good thing, if we can be forgiven for trying to remain optimistic in the face of all this non-standard markup, is that at least these elements are all clearly using vendor-specific names. This massively reduces the likelihood that standards bodies will invent elements and attributes that clash with any of them.

However, as mentioned above, this is not universal. Over 25% of the non-standard elements that we captured don’t use any kind of namespacing strategy to avoid polluting the global namespace. For example, here is a list of 1157 elements like that from the mobile dataset (https://rainy-periwinkle.glitch.me/permalink/53567ec94b328de965eb821010b8b5935b0e0ba316e833267dc04f1fb3b53bd5.html). Many of those, as you can see, are likely to be non-problematic as they have obscure names, misspellings and so on. But at least a few probably present some challenges. You’ll note, for example, that <toast> (which Googlers recently tried to propose as <std-toast>) appears in this list.
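As a rough illustration of how non-standard names can be bucketed by namespacing strategy, consider the sketch below. It is an approximation written for this example, not the classifier used in the study: the regular expression covers only ASCII names (the HTML standard allows many more characters and reserves a few dashed names), and the set of "suspicious" characters is an assumption.

```python
import re

# Simplified approximation of a valid custom element name: ASCII only, starts
# with a lowercase letter and contains at least one dash.
CUSTOM_ELEMENT_RE = re.compile(r"^[a-z][a-z0-9._]*-[a-z0-9._-]*$")

# Characters assumed here to signal broken markup or bad escaping.
SUSPICIOUS_CHARS = set("\"'=<>/\\&;")

def classify(name: str) -> str:
    """Bucket a non-standard element name by its apparent naming strategy."""
    if any(ch in SUSPICIOUS_CHARS for ch in name):
        return "probable-error"
    if ":" in name:
        return "colon-namespaced"
    if CUSTOM_ELEMENT_RE.match(name):
        return "custom-element"
    return "global"  # neither dash nor colon: lives in the global namespace

for name in ["ym-measure", "fb:like", "toast", 'pclass="ddc-font-size-large"']:
    print(name, "->", classify(name))
```

Names that land in the `global` bucket, such as `toast`, are the ones most likely to clash with a future standard element.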

There are some popular elements that are probably not so challenging:

- <ymaps> from Yahoo Maps appears on ~12.5k mobile pages.

- <cufon> and <cufontext>, from a font replacement library from 2008, appear on ~10.5k mobile pages.

- The <jdiv> element, which appears to be injected by the Jivo chat product, appears on ~40.3k mobile pages.

Placing these into our same chart as above for perspective looks something like this (again, it varies slightly based on the dataset):

The interesting thing about these results is that they also introduce a few other ways that our tool can come in very handy. If we’re interested in exploring the space of the data, a very specific tag name is just one possible measure. It’s definitely the strongest indicator if we can find good “slang” developing. However, what if that’s not all we’re interested in?

Common use cases and solutions

What if, for example, we were interested in people solving common use cases? This could be because we’re looking for solutions to use cases that we currently have ourselves, or for researching more broadly what common use cases people are solving with an eye toward incubating some standardization effort. Let’s take a common example: tabs. Over the years there have been a lot of requests for things like tabs. We can use a fuzzy search here and find that there are many variants of tabs (https://rainy-periwinkle.glitch.me/permalink/c6d39f24d61d811b55fc032806cade9f0be437dcb2f5735a4291adb04aa7a0ea.html). It’s a little harder to count usage here since we can’t as easily distinguish if two elements appear on the same page, so the count provided there conservatively simply takes the one with the largest count. In most cases the real number of pages is probably significantly larger.

There are also lots of accordions (https://rainy-periwinkle.glitch.me/permalink/e573cf279bf1d2f0f98a90f0d7e507ac8dbd3e570336b20c6befc9370146220b.html), dialogs (https://rainy-periwinkle.glitch.me/permalink/0bb74b808e7850a441fc9b93b61abf053efc28f05e0a1bc2382937e3b78695d9.html), at least 65 variants of carousels (https://rainy-periwinkle.glitch.me/permalink/651e592cb2957c14cdb43d6610b6acf696272b2fbd0d58a74c283e5ad4c79a12.html), lots of stuff about popups (https://rainy-periwinkle.glitch.me/permalink/981967b19a9346ac466482c51b35c49fc1c1cc66177ede440ab3ee51a7912187.html), at least 27 variants of toggles and switches (https://rainy-periwinkle.glitch.me/permalink/2e6827af7c9d2530cb3d2f39a3f904091c523c2ead14daccd4a41428f34da5e8.html), and so on.

Perhaps we could research why we need 92 variants of button related elements that aren’t a native button (https://rainy-periwinkle.glitch.me/permalink/5ae67c941395ca3125e42909c2c3881e27cb49cfa9aaf1cf59471e3779435339.html), for example, and try to fill the native gap.

If we notice popular things pop up (like <jdiv>, solving chat) we can take knowledge of things we know (like, that is what <jdiv> is about, or <olark>) and try to look at the at least 43 things we’ve built for tackling that (https://rainy-periwinkle.glitch.me/permalink/db8fc0e58d2d46d2e2a251ed13e3daab39eba864e46d14d69cc114ab5d684b00.html) and follow connections to survey the space.
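The conservative counting described for the tabs search can be sketched as follows. The element names and page counts are hypothetical, and a plain substring match stands in for whatever fuzzy matching the tool actually performs.

```python
# Hypothetical element names and the number of pages each appears on.
page_counts = {
    "tabs": 120,
    "tab": 95,
    "x-tabs": 40,
    "paper-tabs": 33,
    "accordion": 60,
}

def matching_elements(term: str, counts: dict) -> dict:
    """Return the elements whose name contains the search term."""
    return {name: n for name, n in counts.items() if term in name}

def conservative_page_estimate(matches: dict) -> int:
    """Lower bound on pages using any variant: the largest single count."""
    return max(matches.values(), default=0)

def upper_bound_page_estimate(matches: dict) -> int:
    """Upper bound: assumes no page uses more than one variant."""
    return sum(matches.values())

tab_like = matching_elements("tab", page_counts)
print(conservative_page_estimate(tab_like), upper_bound_page_estimate(tab_like))
```

Because per-page overlap between variants is unknown, the true number of pages lies somewhere between the two bounds, which is why the largest single count is a safe but pessimistic figure.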

Conclusion

So, there’s lots of data here, but to summarize:

- Pages have more elements than they did 14 years ago, both on average and max.

- The lifetime of things on home pages is very long. Deprecating or discontinuing things doesn’t make them go away, and it might never.

- There is a lot of broken markup out there in the wild (misspelled tags, missing spaces, bad escaping, misunderstandings).

- Measuring what “useful” means is tricky. Lots of native elements don’t pass the 5% bar, or even the 1% bar, but lots of custom ones do, and for lots of reasons. Passing 1% should definitely grab our attention at least, but perhaps so should 0.5% because that is, according to the data, comparatively very successful.

- There is already a ton of custom markup out there. It comes in a lot of forms, but elements containing a dash definitely seem to have taken off.

- We need to increasingly study this data and come up with good observations to help find and pave the cowpaths.

That last one is where you come in. We’d love to tap into the creativity and curiosity of the larger community to help explore this data using some of the tools (like https://rainy-periwinkle.glitch.me/). Please share your interesting observations and help build our commons of knowledge and understanding.

Media

Introduction

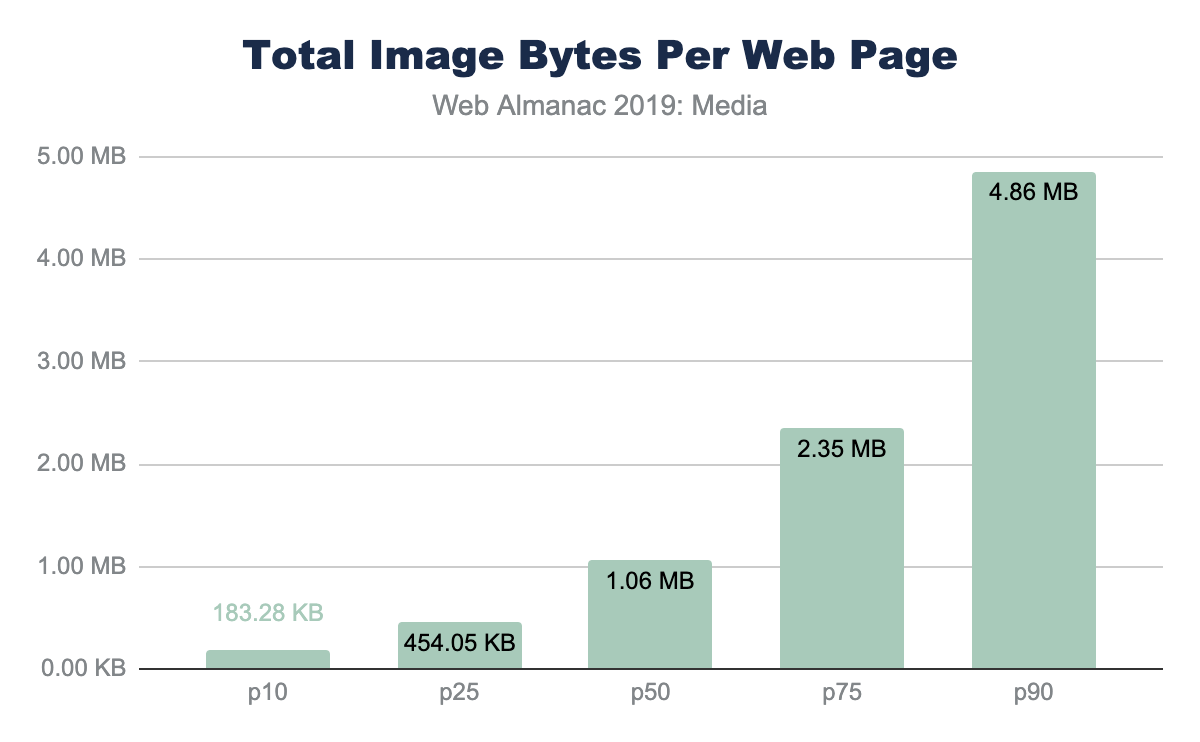

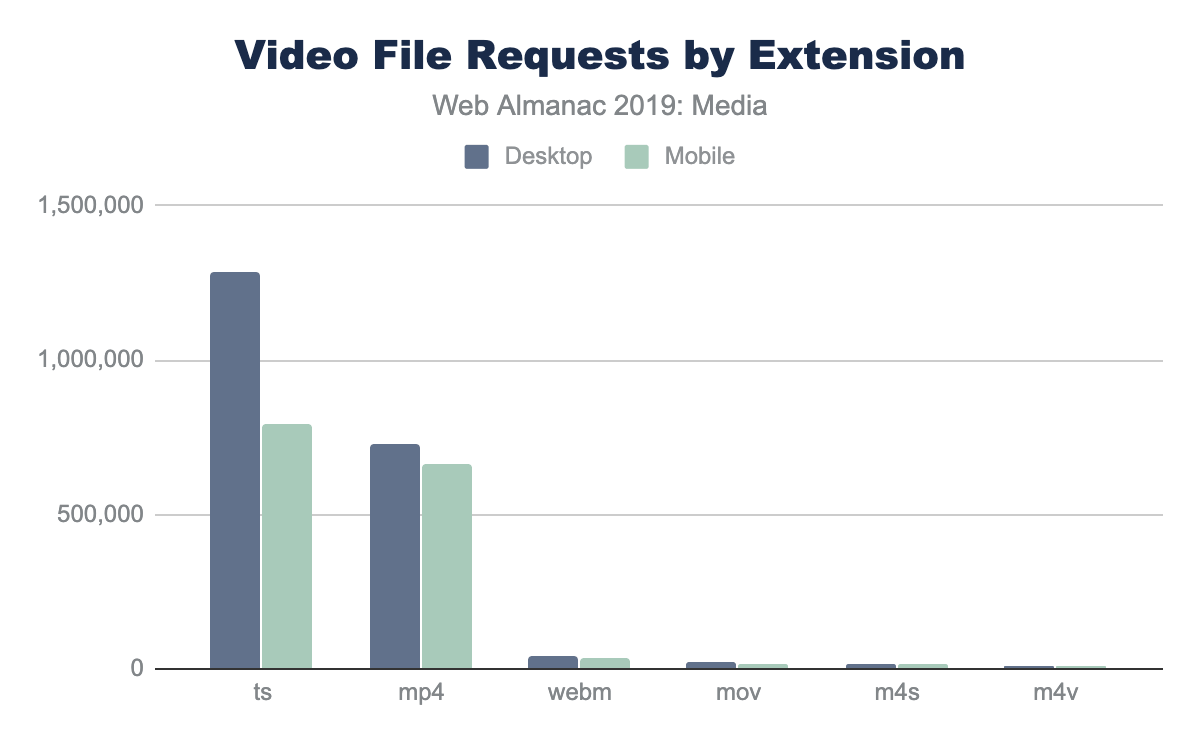

Images, animations, and videos are an important part of the web experience. They are important for many reasons: they help tell stories, engage audiences, and provide artistic expression in ways that often cannot be easily produced with other web technologies. The importance of these media resources can be demonstrated in two ways: by the sheer volume of bytes required to download for a page, and also the volume of pixels painted with media.

From a pure bytes perspective, HTTP Archive has historically reported (https://legacy.httparchive.org/interesting.php#bytesperpage) that, on average, two-thirds of resource bytes are associated with media. From a distribution perspective, we can see that virtually every web page depends on images and videos. Even at the tenth percentile, we see that 44% of the bytes are from media, rising to 91% of the total bytes at the 90th percentile of pages.

While media are critical for the visual experience, the impact of this high volume of bytes has two side effects.

First, the network overhead required to download these bytes can be large and in cellular or slow network environments (like coffee shops or tethering when in an Uber) can dramatically slow down the page performance. Images are a lower priority request by the browser but can easily block CSS and JavaScript in the download. This by itself can delay the page rendering. Yet at other times, the image content is the visual cue to the user that the page is ready. Slow transfers of visual content, therefore, can give the perception of a slow web page.

The second impact is on the financial cost to the user. This is often an ignored aspect since it is not a burden on the website owner but a burden to the end-user. Anecdotally, it has been shared that some markets, like Japan (https://x.com/yoavweiss/status/1195036487538003968?s=20), see a drop in purchases by students near the end of the month when data caps are reached, and users cannot see the visual content.

Further, the financial cost of visiting these websites in different parts of the world is disproportionate. At the median and 90th percentile, the volume of image bytes is 1 MB and 1.9 MB respectively. Using WhatDoesMySiteCost.com (https://whatdoesmysitecost.com/#gniCost), we can see that, measured against gross national income (GNI) per capita, a single web page load at the 90th percentile would cost a user in Madagascar 2.6% of the daily gross income. By contrast, in Germany this would be 0.3% of the daily gross income.

Looking at bytes per page results in just looking at the costs—to page performance and the user—but it overlooks the benefits. These bytes are important to render pixels on the screen. As such, we can see the importance of the images and video resources by also looking at the number of media pixels used per page.

There are three metrics to consider when looking at pixel volume: CSS pixels, natural pixels, and screen pixels:

- CSS pixel volume is from the CSS perspective of layout. This measure focuses on the bounding boxes into which an image or video could be stretched or squeezed. It does not take into account the actual file pixels or the screen display pixels.

- Natural pixels refer to the logical pixels represented in a file. If you were to load this image in GIMP or Photoshop, the pixel file dimensions would be the natural pixels.

- Screen pixels refer to the physical electronics on the display. Prior to mobile phones and modern high-resolution displays, there was a 1:1 relationship between CSS pixels and LED points on a screen. However, because mobile devices are held closer to the eye, and laptop screens are closer than the old mainframe terminals, modern screens have a higher ratio of physical pixels to traditional CSS pixels. This ratio is referred to as Device-Pixel-Ratio or colloquially referred to as Retina™ displays.

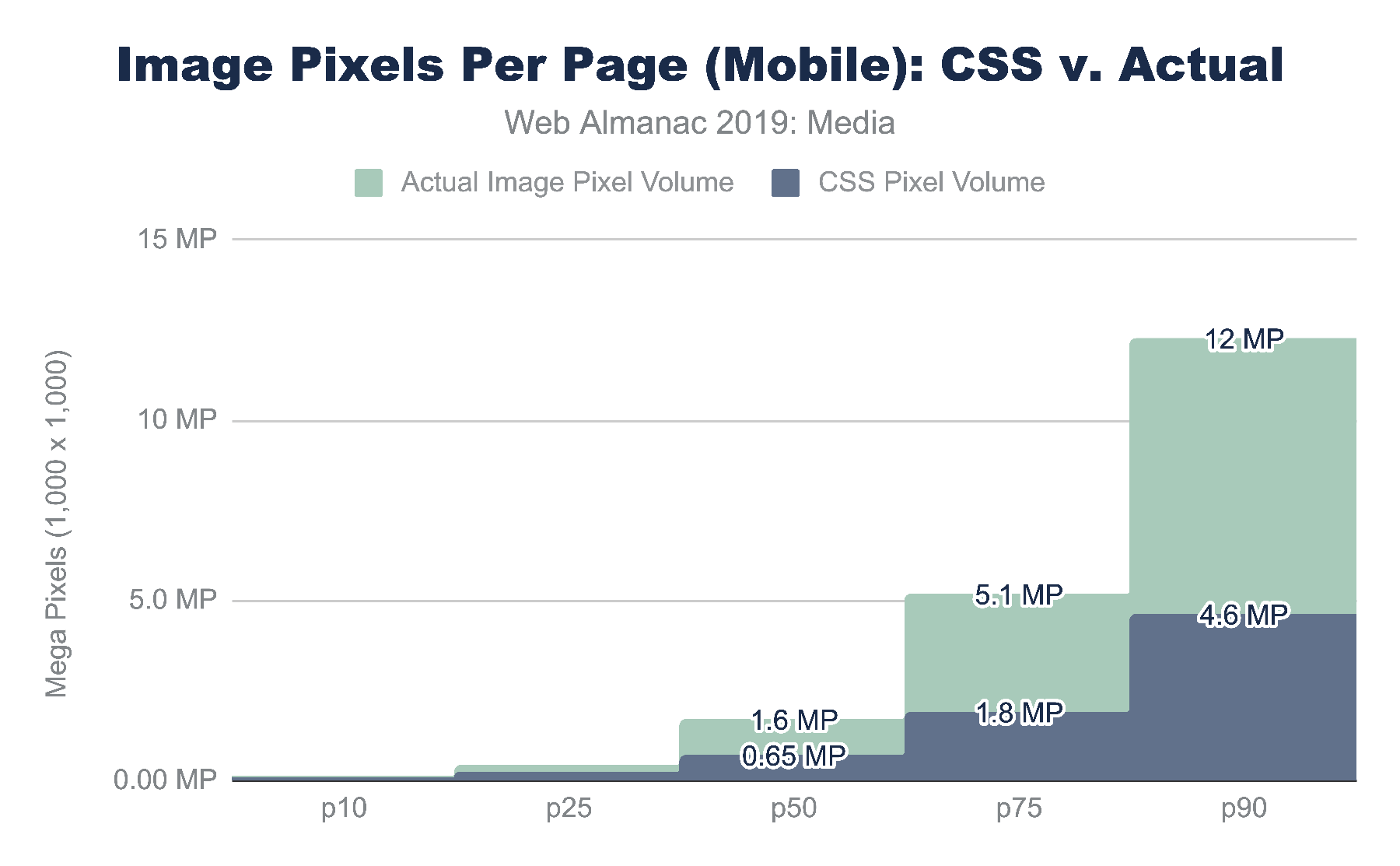

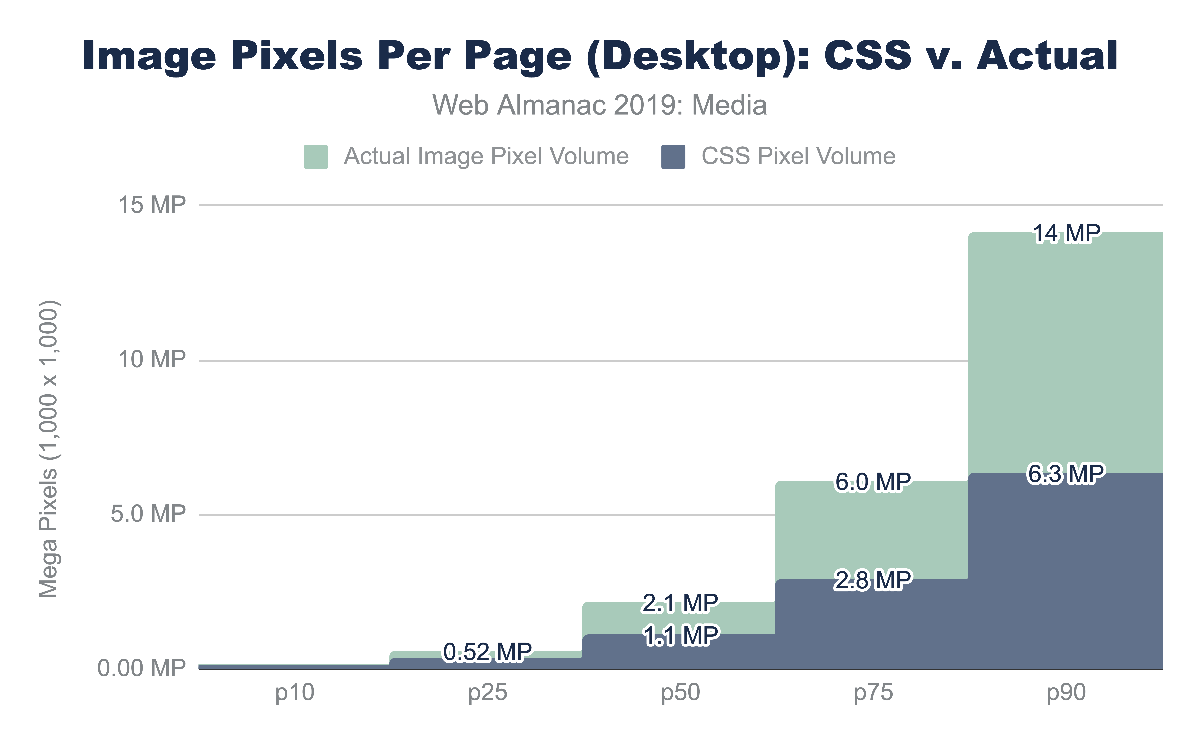

Looking at the CSS pixel and the natural pixel volume, we can see that the median website has a layout that displays one megapixel (MP) of media content. At the 90th percentile, the CSS layout pixel volume grows to 4.6 MP and 6.3 MP on mobile and desktop respectively. This is interesting not only because the responsive layout is likely different, but also because the form factor is different. In short, the mobile layout has less space allocated for media compared to the desktop.

In contrast, the natural, or file, pixel volume is between 2 and 2.6 times the layout volume. The median desktop web page sends 2.1 MP of pixel content that is displayed in 1.1 MP of layout space. At the 90th percentile for mobile we see 12 MP squeezed into 4.6 MP.

Of course, the form factor for a mobile device is different than a desktop. A mobile device is smaller and usually held in portrait mode while the desktop is larger and used predominantly in landscape mode. As mentioned earlier, a mobile device also typically has a higher device pixel ratio (DPR) because it is held much closer to the eye, requiring more pixels per inch compared to what you would need on a billboard in Times Square. These differences force layout changes, and users on mobile more commonly scroll through a site to consume the entirety of its content.

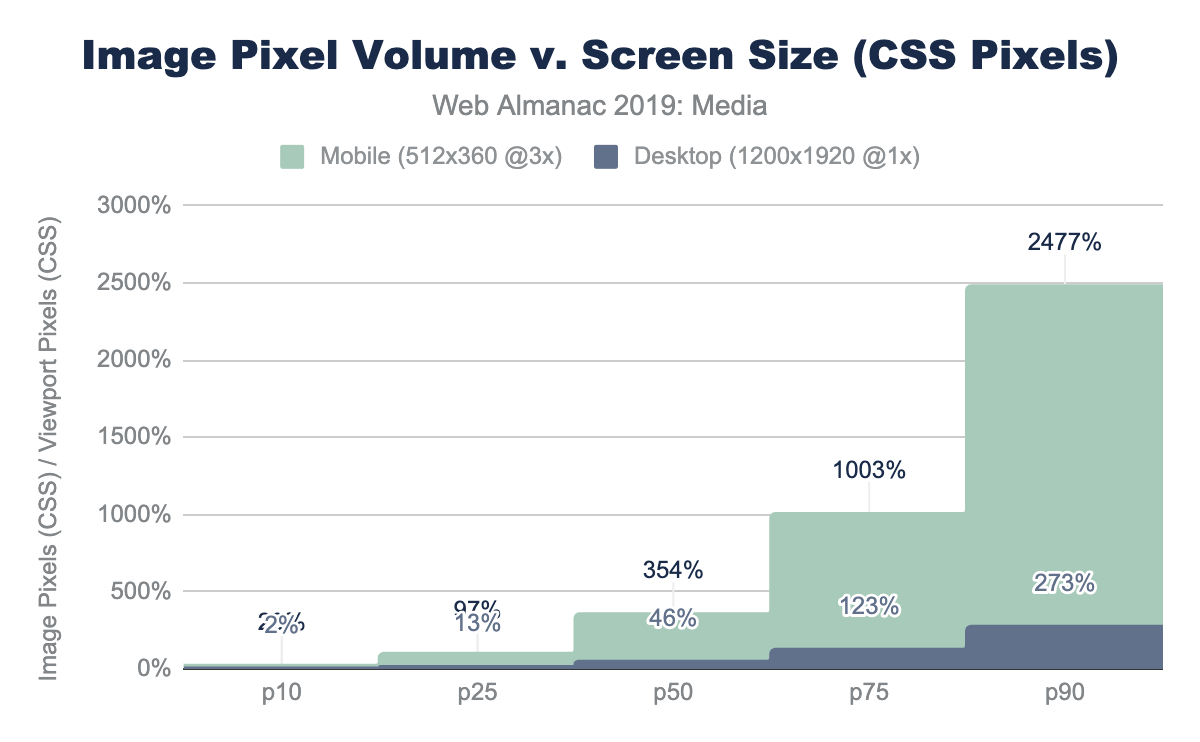

Megapixels are a challenging metric because they are largely abstract. A useful way to express the volume of pixels used on a web page is as a ratio relative to the display size.

For the mobile device used in the web page crawl, we have a display of 512 x 360, which is 0.18 MP of CSS content. (Not to be confused with the physical screen, which has 3x the pixels in each dimension, or 3^2 = 9 times as many pixels, which is 1.7 MP.) Dividing the number of CSS pixels allocated to images by this viewport pixel volume, we get a relative pixel volume.

If we had one image that filled the entire screen perfectly, this would be a 1x pixel fill rate. Of course, rarely does a website fill the entire canvas with a single image. Media content tends to be mixed in with the design and other content. A value greater than 1x implies that the layout requires the user to scroll to see the additional image content.

For the median web page on desktop, only 46% of the display would have layout containing images and video. In contrast, on mobile, the volume of media pixels fills 3.5 times the actual viewport size. The layout has more content than can fit in a single screen, requiring the user to scroll. At a minimum, there are 3.5 scrolling pages of content per site (assuming 100% saturation). At the 90th percentile for mobile, this grows substantially to 25x the viewport size!
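The arithmetic behind these figures can be sketched in a few lines. This is only an illustration: the viewport dimensions and the 3x DPR come from the text above, while the function names and the 0.645 MP sample page are hypothetical. The ratio is taken as media CSS pixels over viewport CSS pixels, so that a single full-screen image yields 1x.

```python
# Crawl device described in the text: 512 x 360 CSS pixels at 3x DPR.
VIEWPORT_WIDTH = 360
VIEWPORT_HEIGHT = 512
DPR = 3


def megapixels(width: int, height: int) -> float:
    """Pixel count expressed in megapixels (1 MP = 1,000,000 pixels)."""
    return width * height / 1_000_000


def relative_pixel_volume(media_css_mp: float, viewport_css_mp: float) -> float:
    """Media layout pixels as a multiple of the viewport (1.0 = one full screen)."""
    return media_css_mp / viewport_css_mp


css_mp = megapixels(VIEWPORT_WIDTH, VIEWPORT_HEIGHT)  # CSS pixels in one viewport
physical_mp = css_mp * DPR ** 2                       # DPR applies to both axes

# Hypothetical page whose images and video occupy 0.645 MP of CSS layout.
fill = relative_pixel_volume(0.645, css_mp)

print(f"{css_mp:.2f} MP CSS, {physical_mp:.2f} MP physical, {fill:.1f}x fill")
```

A fill value above 1 means the media alone would cover more than one screen, which is why the mobile figures imply scrolling.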

Media resources are critical for the user experience.

Images

Much has already been written on the subject of managing and optimizing images to help reduce the bytes and optimize the user experience. It is an important and critical topic for many because it is the creative media that define a brand experience. Therefore, optimizing image and video content is a balancing act between applying best practices that can help reduce the bytes transferred over the network while preserving the fidelity of the intended experience.

While the strategies that are utilized for images, videos, and animations are—in broad strokes—similar, the specific approaches can be very different. In general, these strategies boil down to:

- File formats - utilizing the optimal file format

- Responsive - applying responsive images techniques to transfer only the pixels that will be shown on screen

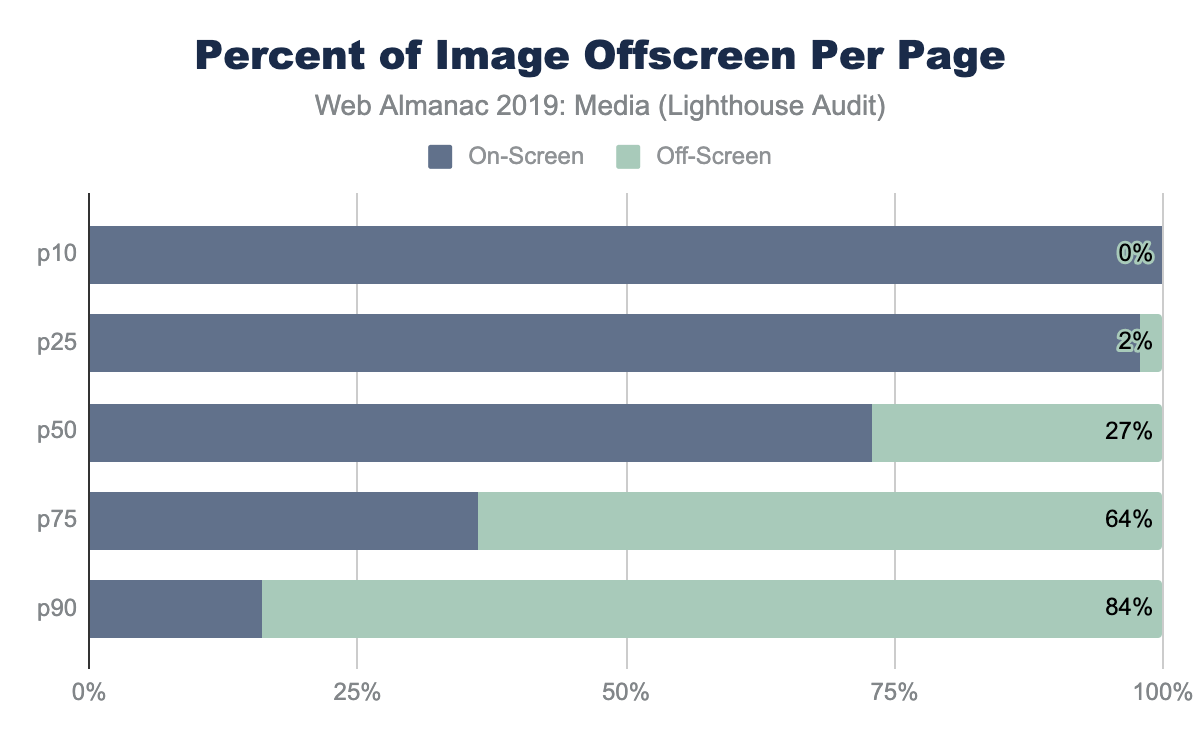

- Lazy loading - to transfer content only when a human will see it

- Accessibility - ensuring a consistent experience for all humans

It is rare to find a web page that does not utilize images. Over the years, many different file formats have emerged to help present content on the web, each addressing a different problem. Predominantly, there are four main universal image formats: JPEG, PNG, GIF, and SVG. In addition, Chrome has enhanced the media pipeline and added support for a fifth image format: WebP. Other browsers have likewise added support for JPEG2000 (Safari), JPEG XR (IE and Edge) and HEIC (WebView only in Safari).

Each format has its own merits and has ideal uses for the web. A very simplified summary would break down as:

| Format | Highlights | Drawbacks |
|---|---|---|
| JPEG | | |
| PNG | | |
| GIF | | |
| SVG | | |
| WebP | | |

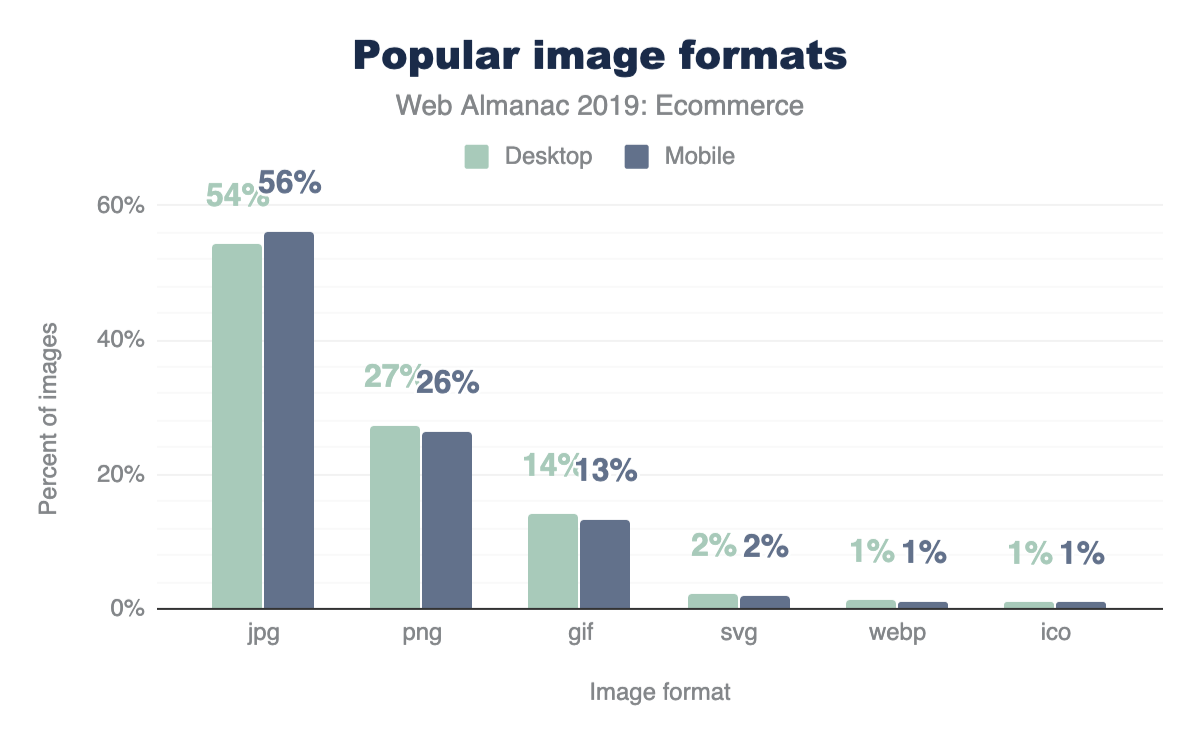

Image formats

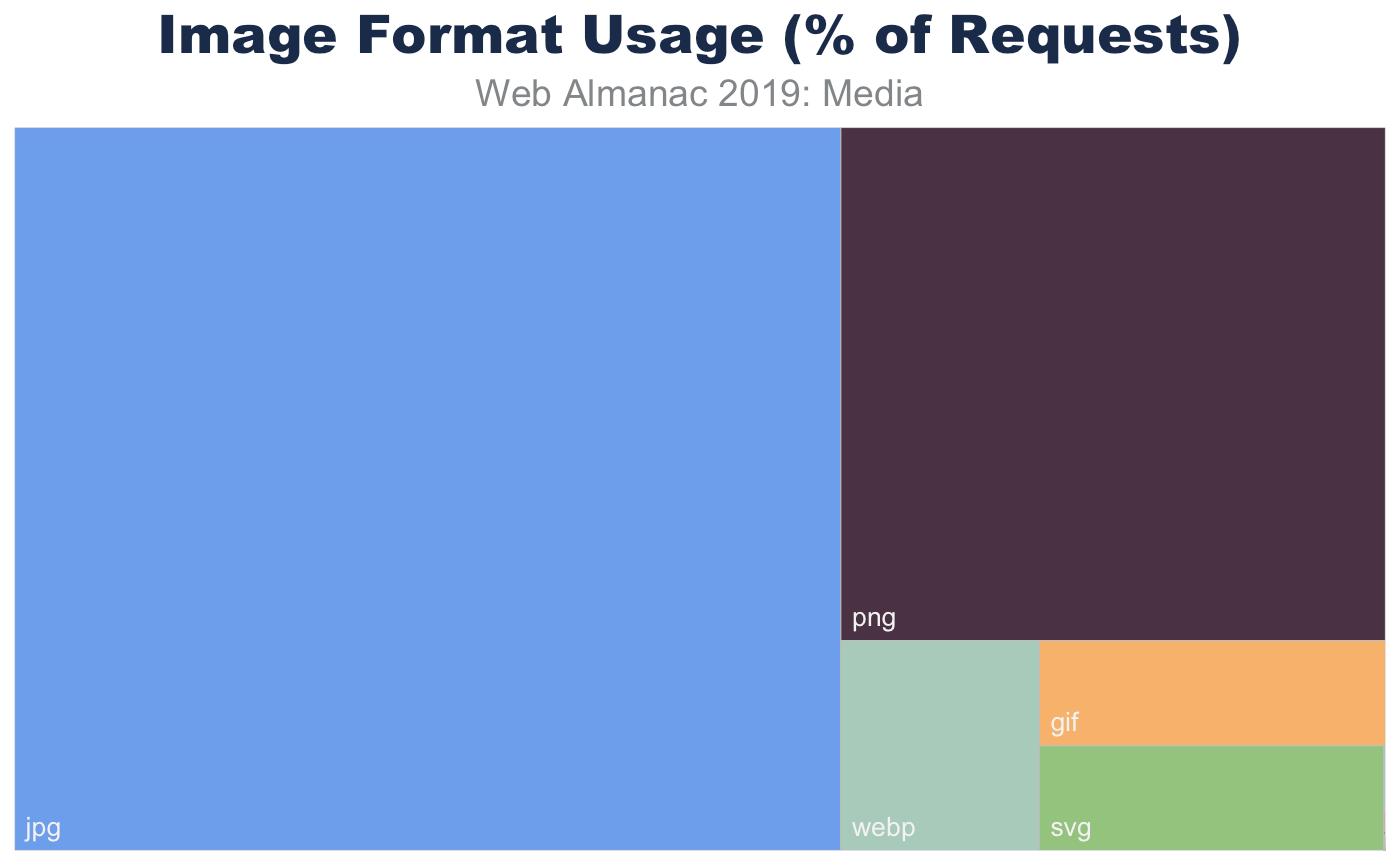

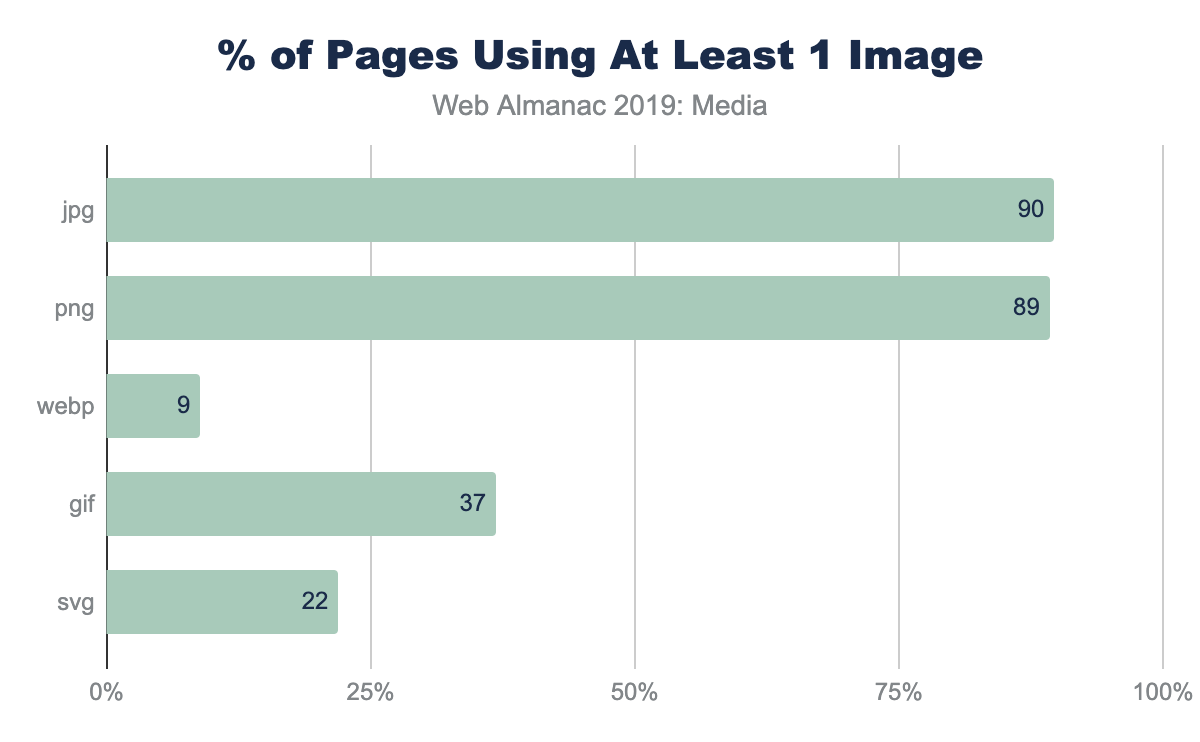

In aggregate, across all pages, we indeed see the prevalence of these formats. JPEG, one of the oldest formats on the web, is by far the most commonly used image format at 60% of the image requests and 65% of all image bytes. Interestingly, PNG is the second most commonly used image format at 28% of image requests and bytes. The ubiquity of support along with the precision of color and creative content are likely explanations for its wide use. In contrast, SVG, GIF, and WebP share nearly the same usage at 4%.

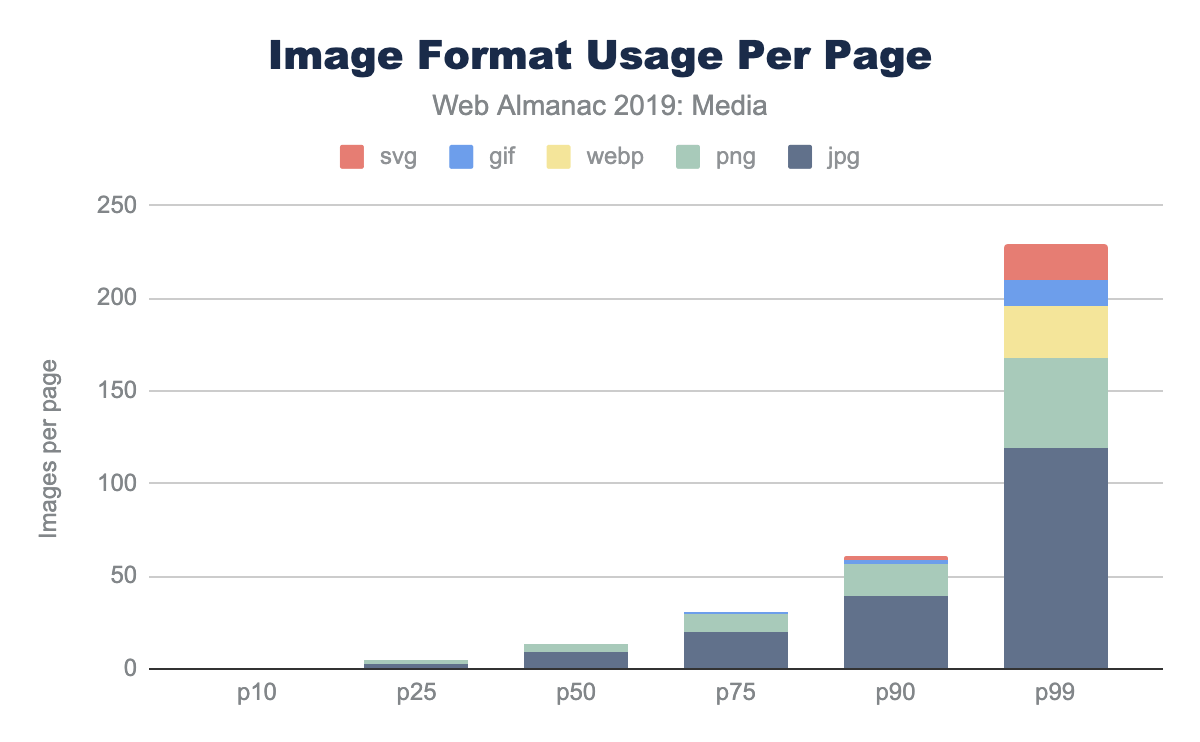

Of course, web pages are not uniform in their use of image content. Some depend on images more than others. Look no further than the home page of google.com and you will see very little imagery compared to a typical news website. Indeed, the median website has 13 images, 61 images at the 90th percentile, and a whopping 229 images at the 99th percentile.

While the median page has nine JPEGs and four PNGs, and GIFs appear only in the top 25% of pages, these counts do not report the adoption rate. The use and frequency of each format per page doesn’t provide insight into the adoption of the more modern formats. Specifically, what percent of pages include at least one image in each format?

This helps explain why, even at the 90th percentile of pages, the frequency of WebP is still zero; only 9% of web pages have even one WebP resource. There are many reasons that WebP might not be the right choice for an image, but adoption of media best practices, like adoption of WebP itself, still remains nascent.

Image file sizes

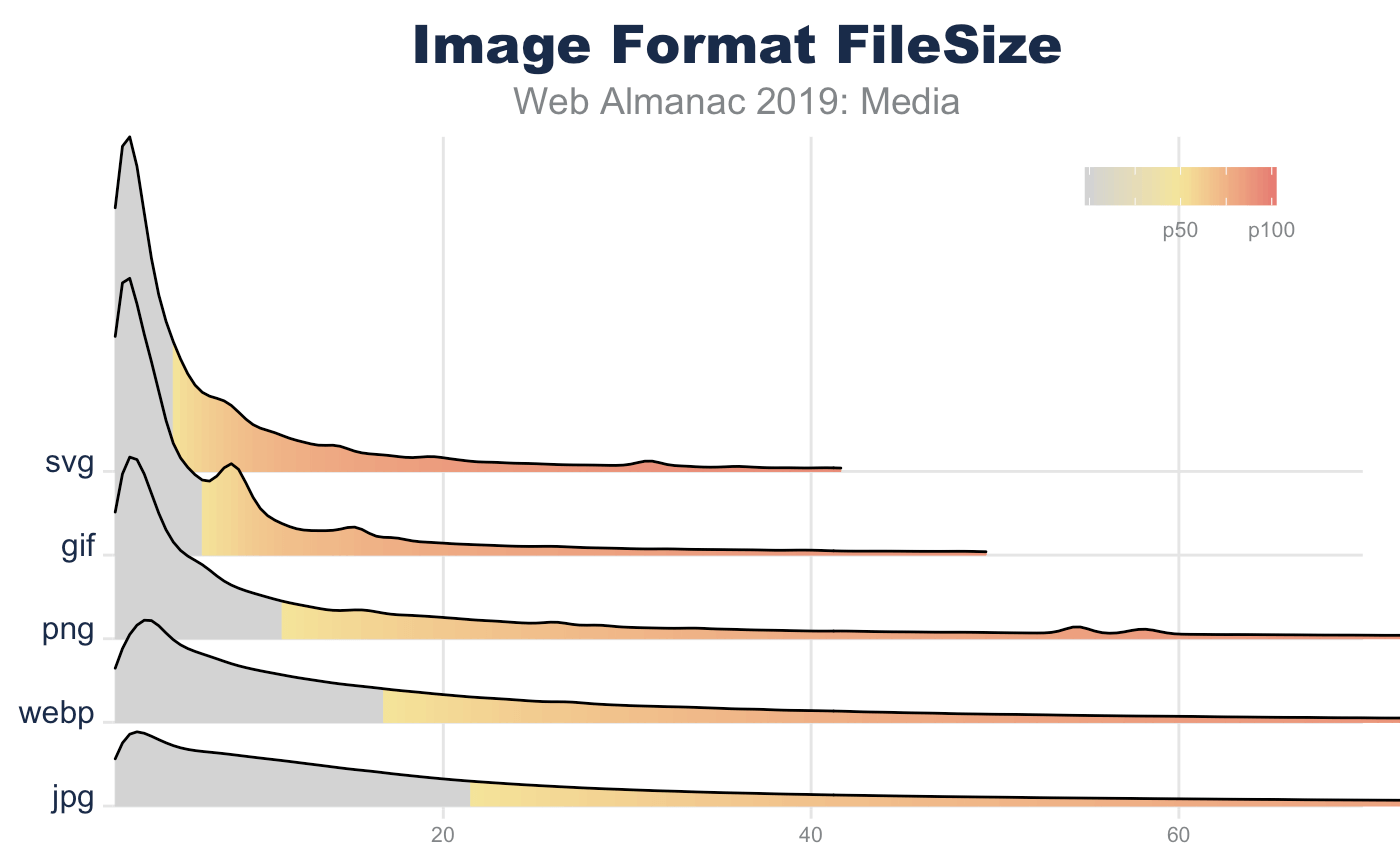

There are two ways to look at image file sizes: absolute bytes per resource and bytes-per-pixel.

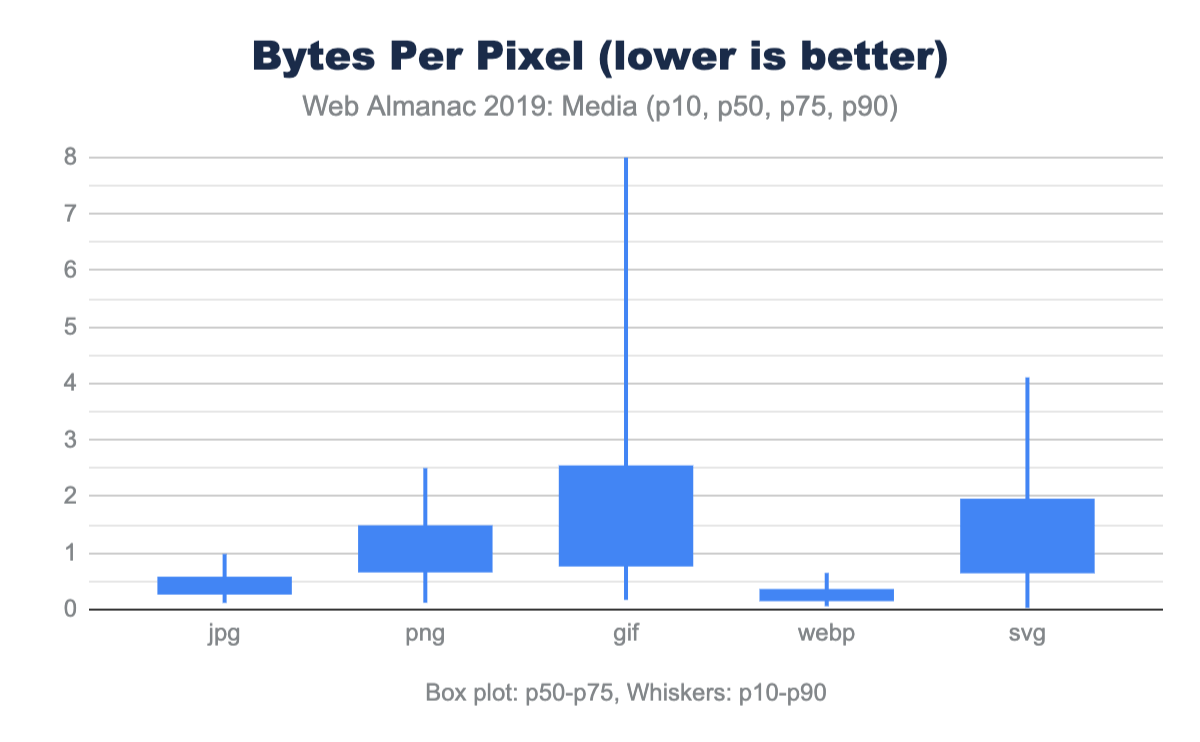

From this we can start to get a sense of how large or small a typical resource is on the web. However, this doesn’t give us a sense of the volume of pixels represented on screen for these file distributions. To do this we can divide each resource's bytes by the natural pixel volume of the image. A lower bytes-per-pixel indicates a more efficient transmission of visual content.

While previously it appeared that GIF files were smaller than JPEG, we can now clearly see that the larger JPEG resources are due to their pixel volume. It is probably not a surprise that GIF shows a very low pixel density compared to the other formats. Additionally, while PNG can handle high bit depth and doesn’t suffer from chroma subsampling blurriness, it is about twice the size of JPEG or WebP for the same pixel volume.

Of note, the pixel volume used for SVG is the size of the DOM element on screen (in CSS pixels). While considerably smaller for file sizes, this hints that SVGs are generally used in smaller portions of the layout. This is why the bytes-per-pixel appears worse than PNG.

Again, it is worth emphasizing, this comparison of pixel density is not comparing equivalent images. Rather it is reporting typical user experience. As we will discuss next, even in each of these formats there are techniques that can be used to further optimize and reduce the bytes-per-pixel.
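As a rough illustration of the bytes-per-pixel measure, the sketch below divides a resource's byte size by its natural pixel volume. The function name and both sample resources are hypothetical, chosen only to show how a file that is smaller in absolute bytes can still be the less efficient one per pixel.

```python
def bytes_per_pixel(file_bytes: int, natural_width: int, natural_height: int) -> float:
    """Bytes transferred for each natural (file) pixel of an image."""
    pixels = natural_width * natural_height
    if pixels <= 0:
        raise ValueError("image must have a positive pixel area")
    return file_bytes / pixels


# Hypothetical resources, not taken from the HTTP Archive data.
photo = bytes_per_pixel(150_000, 1200, 800)  # large file, 0.96 MP of pixels
icon = bytes_per_pixel(6_000, 100, 100)      # small file, only 0.01 MP of pixels

print(f"photo: {photo:.3f} bytes/pixel")
print(f"icon:  {icon:.3f} bytes/pixel")
```

Here the photo is 25 times larger in bytes, yet it spends fewer bytes on each pixel than the icon does.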

Image format optimization

Selecting the best format for an experience is an art of balancing capabilities of the format and reducing the total bytes. For web pages one goal is to help improve web performance through optimizing images. Yet within each format there are additional features that can help reduce bytes.

Some features can impact the total experience. For example, JPEG and WebP can utilize quantization (commonly referred to as quality levels) and chroma subsampling, which can reduce the bits stored in the image without impacting the visual experience. Like MP3s for music, this technique depends on a bug in the human eye and allows for the same experience despite the loss of color data. However, not all images are good candidates for these techniques since this can create blocky or blurry images and may distort colors or make text overlays become unreadable.

Other format features simply organize the content and sometimes require contextual knowledge. For example, applying progressive encoding to a JPEG reorganizes the pixels into scan layers, which allows the browser to complete layout sooner and coincidentally reduces the byte volume.

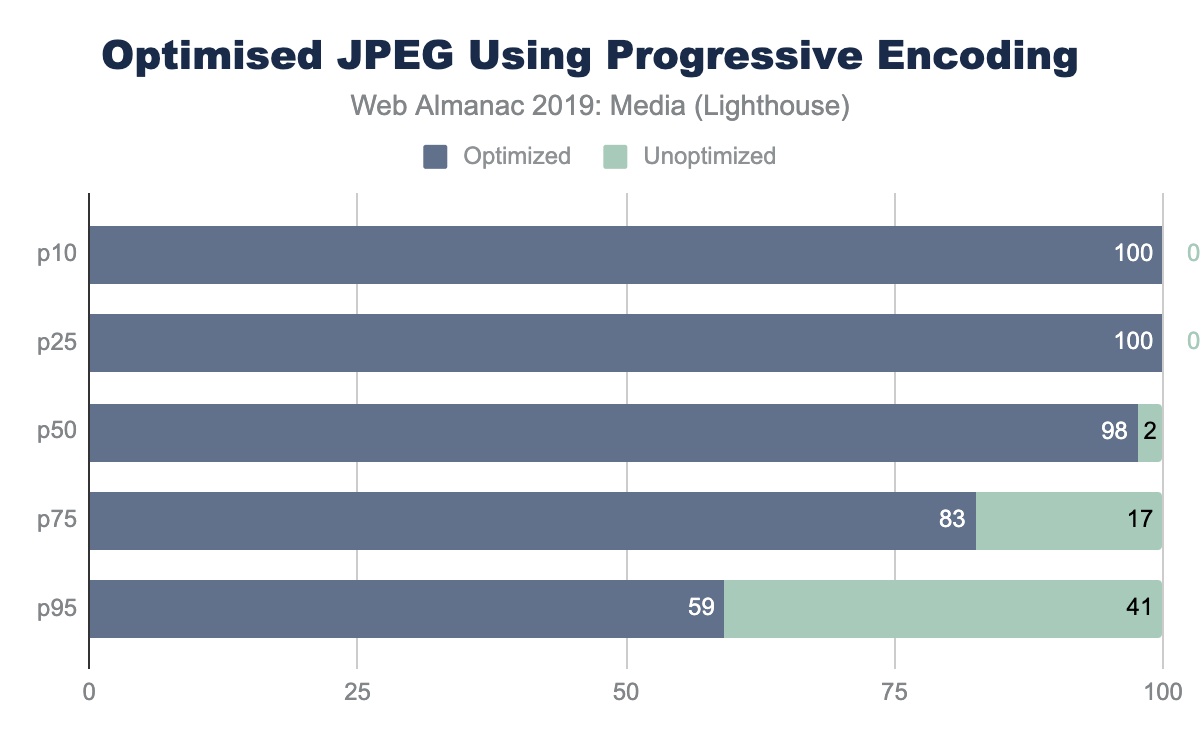

One Lighthouse test is an A/B comparison of a baseline JPEG with a progressively encoded JPEG. This provides a smell to indicate whether the images overall can be further optimized with lossless techniques and potentially with lossy techniques like using different quality levels.

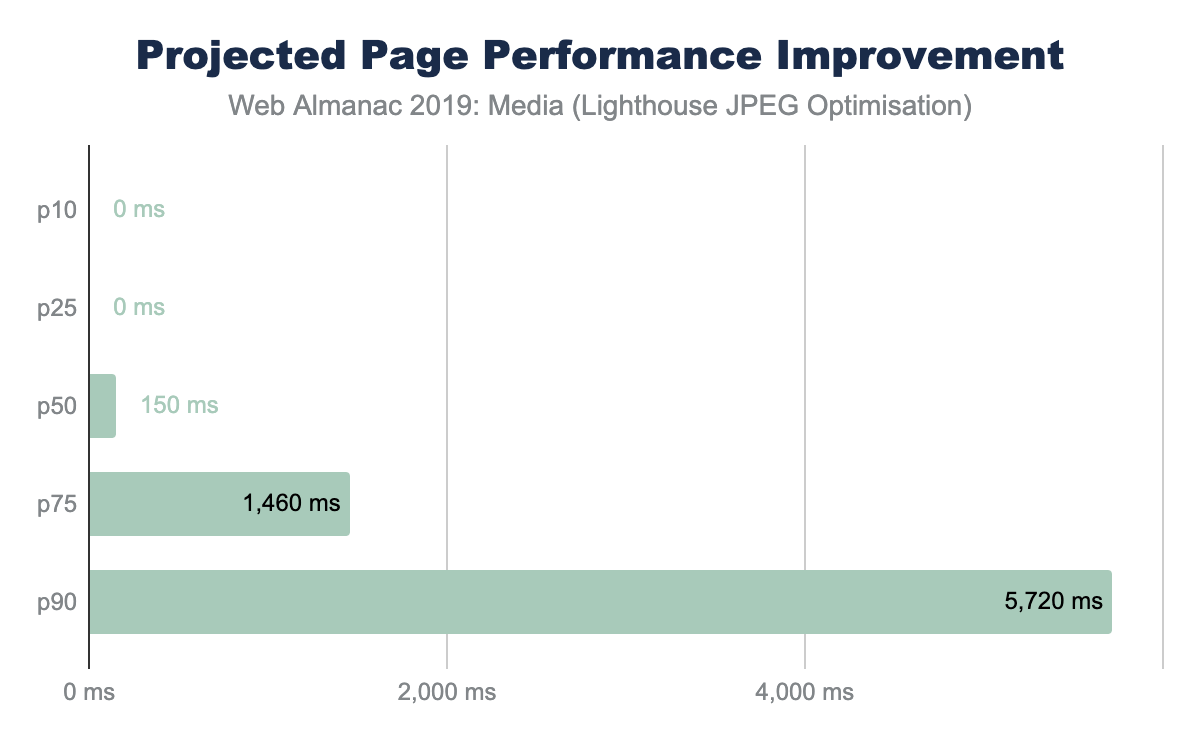

The savings in this A/B Lighthouse test are not just about potential byte savings, which can accrue to several MBs at the 95th percentile; they also demonstrate the page performance improvement.

Responsive images

Another axis for improving page performance is to apply responsive images. This technique focuses on reducing image bytes by reducing the extra pixels that are not shown on the display because of image shrinking. At the beginning of this chapter, you saw that the median web page on desktop used one MP of image placeholders yet transferred 2.1 MP of actual pixel volume. Since this was a 1x DPR test, 1.1 MP of pixels were transferred over the network, but not displayed. To reduce this overhead, we can use one of two (possibly three) techniques:

- HTML markup - using a combination of the <picture> and <source> elements along with the srcset and sizes attributes allows the browser to select the best image based on the dimensions of the viewport and the density of the display.

- Client Hints - this moves the selection of possible resized images to HTTP content negotiation.

- BONUS: JavaScript libraries to delay image loading until the JavaScript can execute and inspect the Browser DOM and inject the correct image based on the container.

Use of HTML markup

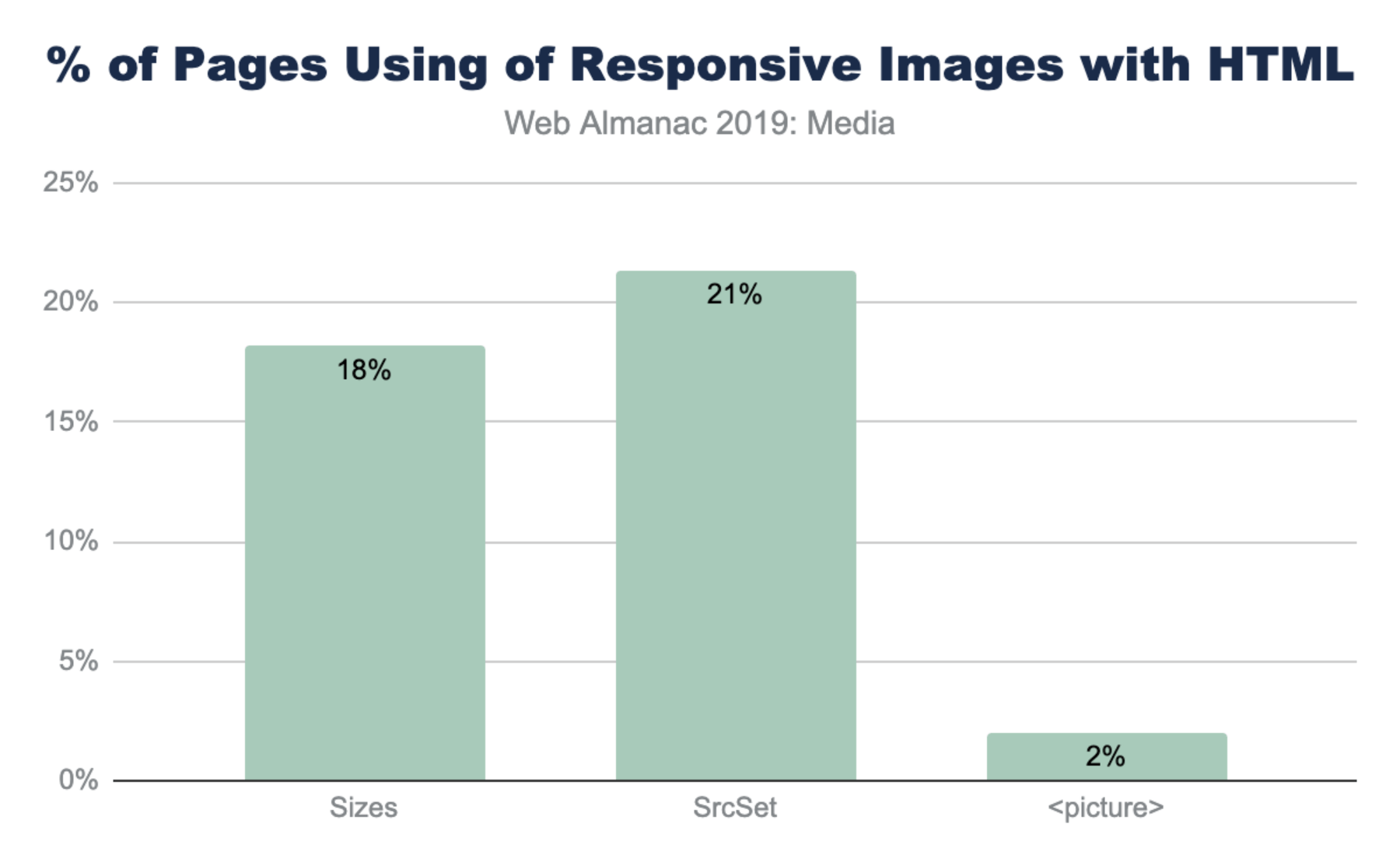

The most common method to implement responsive images is to build a list of alternative images using either <img srcset> or <source srcset>. If the srcset is based on DPR, the browser can select the correct image from the list without additional information. However, most implementations also use <img sizes> to help instruct the browser how to perform the necessary layout calculation to select the correct image in the srcset based on pixel dimensions.

The notably lower use of <picture> is not surprising given that it is used most often for advanced responsive web design (RWD) layouts like [art direction](https://developer.mozilla.org/docs/Learn/HTML/Multimedia_and_embedding/Responsive_images#Art_direction).
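To make the selection step more concrete, here is a minimal sketch of how a candidate might be picked from a srcset list. It is an approximation under stated assumptions, not the browser's actual algorithm: browsers are free to choose in an implementation-defined way, the comma split is naive (real URLs may contain commas), and the function names and image file names are hypothetical.

```python
from typing import List, Optional, Tuple

Candidate = Tuple[str, float]  # (url, effective pixel density)


def parse_srcset(srcset: str, slot_width_css_px: Optional[float] = None) -> List[Candidate]:
    """Turn a srcset string into (url, density) pairs.

    "x" descriptors are densities already. "w" descriptors are file widths and
    need the layout slot width (what sizes resolves to) to become a density.
    """
    candidates: List[Candidate] = []
    for entry in srcset.split(","):  # simplification: assumes no commas in URLs
        parts = entry.split()
        if not parts:
            continue
        url = parts[0]
        descriptor = parts[1] if len(parts) > 1 else "1x"
        if descriptor.endswith("x"):
            density = float(descriptor[:-1])
        elif descriptor.endswith("w"):
            if slot_width_css_px is None:
                raise ValueError("w descriptors need a slot width")
            density = float(descriptor[:-1]) / slot_width_css_px
        else:
            raise ValueError(f"unsupported descriptor: {descriptor}")
        candidates.append((url, density))
    return candidates


def pick_candidate(candidates: List[Candidate], dpr: float) -> str:
    """Smallest candidate that still covers the display density, else the largest."""
    if not candidates:
        raise ValueError("no candidates to choose from")
    ordered = sorted(candidates, key=lambda c: c[1])
    for url, density in ordered:
        if density >= dpr:
            return url
    return ordered[-1][0]


# Hypothetical image names, for illustration only.
by_density = parse_srcset("hero.jpg 1x, hero@2x.jpg 2x, hero@3x.jpg 3x")
by_width = parse_srcset(
    "hero-400.jpg 400w, hero-800.jpg 800w, hero-1600.jpg 1600w",
    slot_width_css_px=300,
)
print(pick_candidate(by_density, dpr=2.0))
print(pick_candidate(by_width, dpr=2.0))
```

With density descriptors the DPR alone is enough to choose. With width descriptors the same choice depends on the slot width, which is the information that sizes supplies.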

Use of sizes

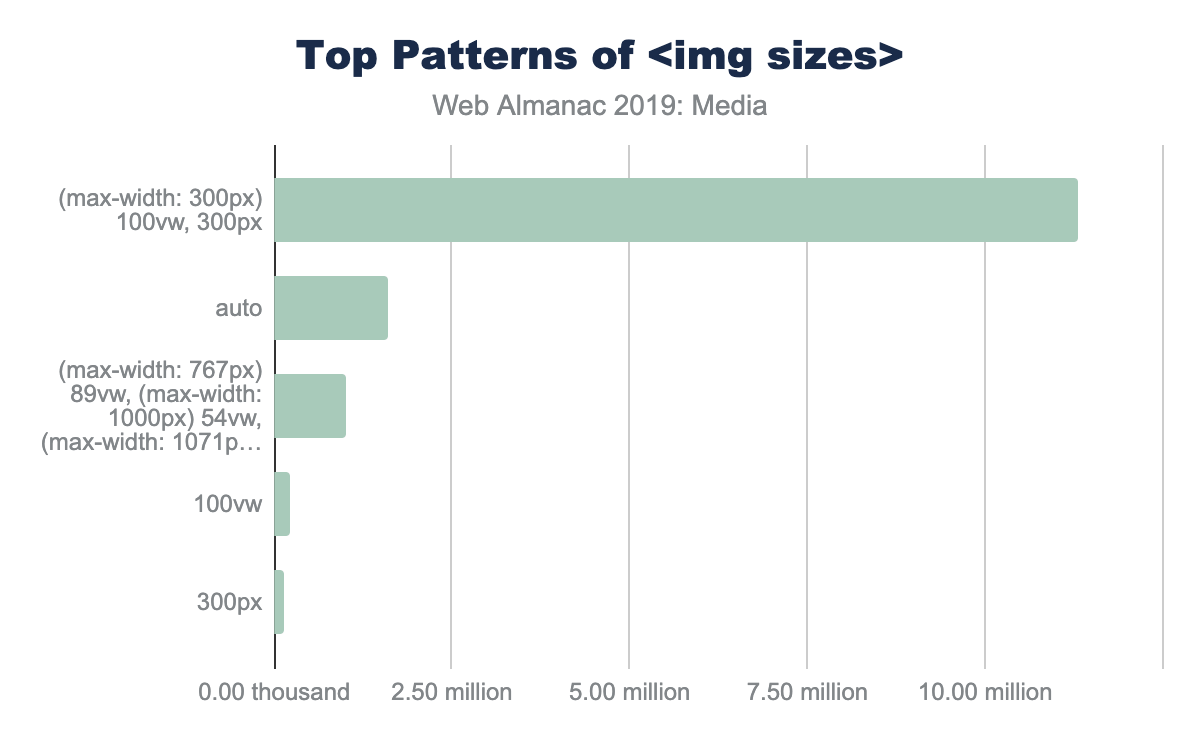

The utility of srcset is usually dependent on the precision of the sizes media query. Without sizes, the browser will assume the <img> tag will fill the entire viewport instead of a smaller component. Interestingly, there are five common patterns that web developers have adopted for <img sizes>:

- <img sizes="100vw"> - this indicates that the image will fill the width of the viewport (also the default).

- <img sizes="200px"> - this is helpful for browsers selecting based on DPR.

- <img sizes="(max-width: 300px) 100vw, 300px"> - this is the second most popular design pattern. It is the one auto generated by WordPress and likely a few other platforms. It appears auto generated based on the original image size (in this case 300px).

- <img sizes="(max-width: 767px) 89vw, (max-width: 1000px) 54vw, ..."> - this pattern is the custom built design pattern that is aligned with the CSS responsive layout. Each breakpoint has a different calculation for sizes to use.

- <img sizes="auto"> - this is the most popular use, which is actually non-standard and is an artifact of the use of the lazy_sizes JavaScript library. This uses client-side code to inject a better sizes calculation for the browser. The downside of this is that it depends on the JavaScript loading and DOM to be fully ready, delaying image loading substantially.

| <img sizes> | Frequency (millions) | % |
|---|---|---|
| (max-width: 300px) 100vw, 300px | 1.47 | 5% |
| (max-width: 150px) 100vw, 150px | 0.63 | 2% |
| (max-width: 100px) 100vw, 100px | 0.37 | 1% |
| (max-width: 400px) 100vw, 400px | 0.32 | 1% |
| (max-width: 80px) 100vw, 80px | 0.28 | 1% |

sizes patterns.

Figure: top patterns of <img sizes>. Patterns shown: (max-width: 300px) 100vw, 300px; auto (1.60 million); (max-width: 767px) 89vw... (1.00 million); 100vw (0.23 million); 300px (0.13 million).
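To show what a sizes value tells the browser, the following sketch resolves a sizes string to a slot width in CSS pixels for a given viewport. It is a deliberately small subset written for illustration: only min-width and max-width conditions in px and lengths in px or vw are handled, and the function names are hypothetical.

```python
import re

# Matches entries such as "(max-width: 300px) 100vw".
_CONDITION = re.compile(r"^\((min|max)-width:\s*([\d.]+)px\)\s+(.+)$")


def resolve_length(length: str, viewport_width: float) -> float:
    """Convert a px or vw length to CSS pixels."""
    length = length.strip()
    if length.endswith("vw"):
        return float(length[:-2]) * viewport_width / 100
    if length.endswith("px"):
        return float(length[:-2])
    raise ValueError(f"unsupported length: {length}")


def resolve_sizes(sizes: str, viewport_width: float) -> float:
    """Slot width in CSS pixels from the first sizes entry that applies."""
    for entry in sizes.split(","):
        entry = entry.strip()
        match = _CONDITION.match(entry)
        if match is None:
            # No media condition: this entry is the unconditional default.
            return resolve_length(entry, viewport_width)
        kind, limit, length = match.group(1), float(match.group(2)), match.group(3)
        applies = viewport_width <= limit if kind == "max" else viewport_width >= limit
        if applies:
            return resolve_length(length, viewport_width)
    return viewport_width  # nothing matched: behave like 100vw


wordpress_pattern = "(max-width: 300px) 100vw, 300px"
print(resolve_sizes(wordpress_pattern, viewport_width=360))  # wide viewport
print(resolve_sizes(wordpress_pattern, viewport_width=280))  # narrow viewport
print(resolve_sizes("100vw", viewport_width=360))
```

For the WordPress-style pattern the slot is capped at 300 CSS pixels on wider viewports and shrinks with the viewport below that, which is why this pattern tracks the original image size.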

Client Hints

[Client Hints](https://web.dev/user-agent-client-hints/) allow content creators to move the resizing of images to HTTP content negotiation. In this way, the HTML does not need additional <img srcset> to clutter the markup, and instead can depend on a server or image CDN to [select an optimal image](https://cloudinary.com/blog/client_hints_and_responsive_images_what_changed_in_chrome_67) for the context. This simplifies the HTML and enables origin servers to adapt over time and disconnect the content and presentation layers.

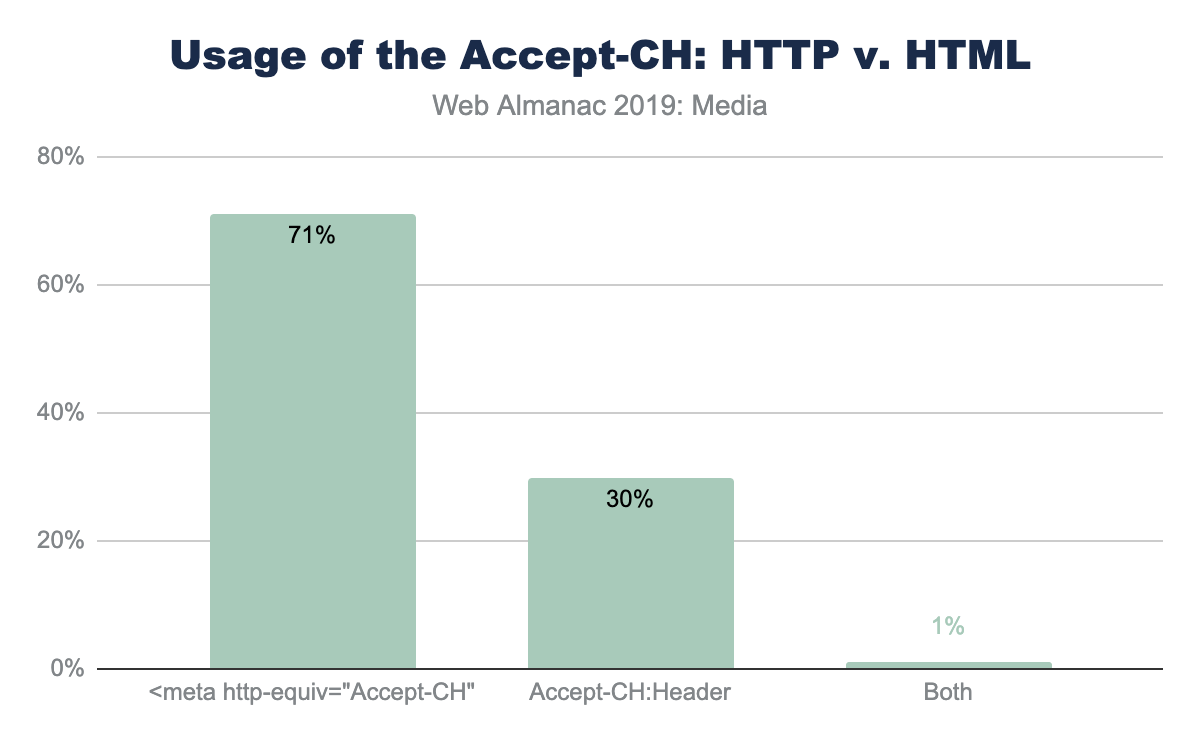

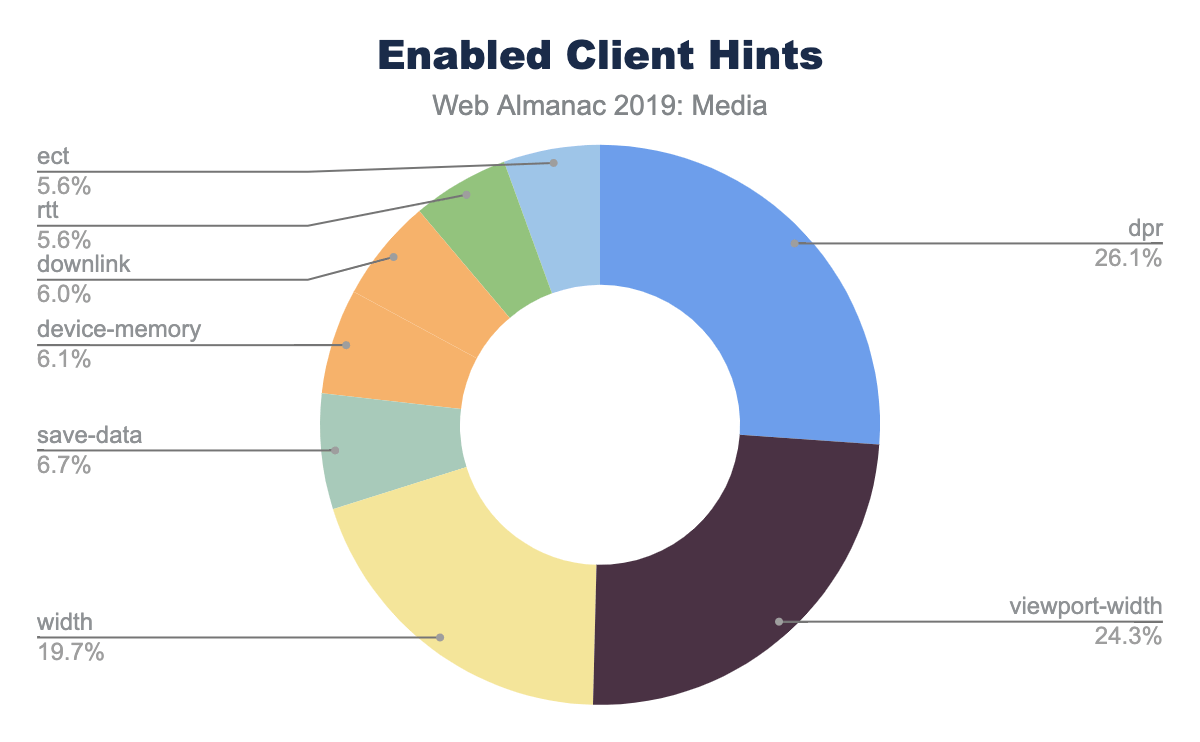

To enable Client Hints, the web page must signal to the browser either by sending an extra HTTP header Accept-CH: DPR, Width, Viewport-Width or by adding the HTML <meta http-equiv="Accept-CH" content="DPR, Width, Viewport-Width">. Both techniques are offered, and which one is more convenient depends on the team implementing them.
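Once the hints are enabled, the server or image CDN has to turn them into a choice of rendition. The sketch below is one hypothetical way to do that, not how any particular CDN works. It assumes the request headers arrive as a plain mapping with the exact names DPR, Width, and Viewport-Width, that Width already expresses the wanted resource width in physical pixels, and that the list of pre-generated widths and the 1024 fallback are made up for illustration.

```python
import math
from typing import Mapping, Sequence


def target_width(headers: Mapping[str, str], fallback_width: int = 1024) -> int:
    """Physical pixel width the client appears to want, based on its hints."""
    if "Width" in headers:
        # Assumed to already account for DPR, so it is used as-is.
        return math.ceil(float(headers["Width"]))
    dpr = float(headers.get("DPR", "1"))
    if "Viewport-Width" in headers:
        # Viewport-Width is in CSS pixels; scale it to physical pixels.
        return math.ceil(float(headers["Viewport-Width"]) * dpr)
    return fallback_width  # hypothetical default when no hints were sent


def pick_rendition(headers: Mapping[str, str], available_widths: Sequence[int]) -> int:
    """Smallest pre-generated width that covers the request, else the largest."""
    if not available_widths:
        raise ValueError("no renditions available")
    wanted = target_width(headers)
    for width in sorted(available_widths):
        if width >= wanted:
            return width
    return max(available_widths)


renditions = [400, 800, 1600]  # hypothetical pre-generated image widths
print(pick_rendition({"DPR": "3", "Viewport-Width": "360"}, renditions))
print(pick_rendition({"DPR": "2", "Width": "600"}, renditions))
print(pick_rendition({}, renditions))
```

A real deployment would also need to normalize header names, since HTTP header names are case-insensitive, and to make sure caches vary on the hints used for the decision.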