Compression

Introduction

A user’s time is valuable, so they shouldn’t have to wait a long time for a web page to load. The HTTP protocol allows the responses to be compressed, which decreases the time needed to transfer the content. Compression often leads to significant improvement in the user experience. It can reduce page weight, improve web performance and boost search rankings. As such, it’s an important part of Search Engine Optimization.

This chapter discusses lossless compression applied to an HTTP response. Lossy and lossless compression used in media formats such as images, audio and video are equally (if not more) important for increasing the page loading speed. However, these are not in the scope of this chapter, as they are usually part of the file format itself.

Content types using HTTP compression

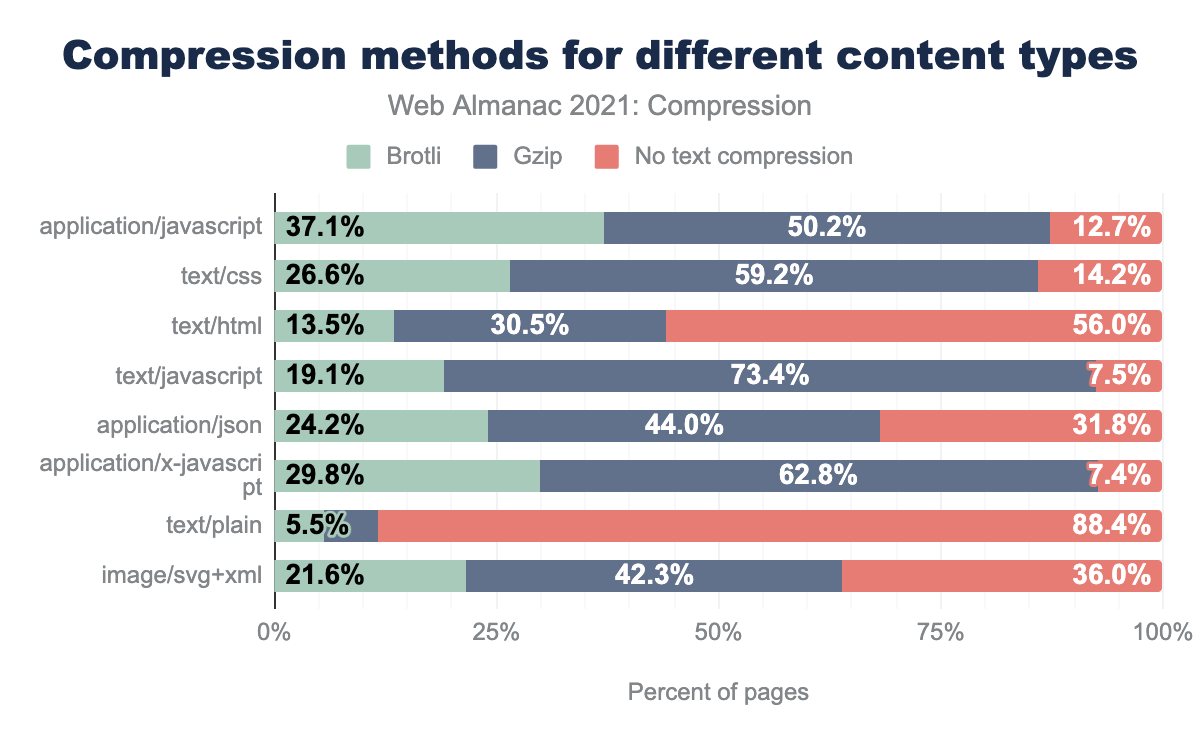

HTTP compression is recommended for text-based content, such as HTML, CSS, JavaScript, JSON, or SVG, as well as for woff, ttf and ico files. Media files such as images that are already compressed do not benefit from HTTP compression since, as mentioned previously, their representation already includes internal compression.

Compared to the other content types, text/plain and text/html use the least amount of compression: they are the only content types that are compressed less than 50% of the time, with merely 12% and 14% using compression at all. application/json is compressed approximately 68% of the time, and image/svg+xml approximately 64%. text/css and application/javascript are each compressed over 85% of the time, and application/x-javascript and text/javascript are compressed more than 90% of the time. The low figure for text/html might be because it is more often dynamically generated than static content such as JavaScript and CSS, even though compressing dynamically generated content also has a positive impact. More analysis about the compression of JavaScript content is available in the JavaScript chapter.

Server settings for HTTP compression

For HTTP content encoding, the HTTP standard defines the Accept-Encoding request header, with which an HTTP client can announce to the server what content encodings it can handle. The server’s response can then contain a Content-Encoding header field that specifies which of the encodings was chosen to transform the data in the response body.
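The negotiation described above can be sketched in a few lines of Python. This is a minimal illustration, not how any particular server implements it: the function names and the `SERVER_PREFERENCE` order (Brotli first, then Gzip) are assumptions made for the example, and the parser only handles the `q` parameter.

```python
from typing import Dict, Optional


def parse_accept_encoding(header: str) -> Dict[str, float]:
    """Parse an Accept-Encoding value into {encoding: q-value}."""
    prefs: Dict[str, float] = {}
    for item in header.split(","):
        item = item.strip()
        if not item:
            continue
        name, _, params = item.partition(";")
        q = 1.0  # an encoding listed without a q parameter has weight 1
        for param in params.split(";"):
            key, _, value = param.strip().partition("=")
            if key.strip().lower() == "q":
                try:
                    q = float(value)
                except ValueError:
                    q = 0.0  # treat a malformed weight as "not acceptable"
        prefs[name.strip().lower()] = q
    return prefs


# Hypothetical server-side preference order, chosen for this sketch.
SERVER_PREFERENCE = ("br", "gzip")


def choose_content_encoding(accept_encoding: str) -> Optional[str]:
    """Return the Content-Encoding to use, or None to send the body uncompressed."""
    prefs = parse_accept_encoding(accept_encoding)
    for encoding in SERVER_PREFERENCE:
        # Fall back to the wildcard weight if the encoding is not listed.
        q = prefs.get(encoding, prefs.get("*", 0.0))
        if q > 0:
            return encoding
    return None
```

A client that sends `Accept-Encoding: gzip, deflate, br` would get Brotli under this preference order, while a client that sends `gzip;q=1.0, br;q=0` would get Gzip.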

Practically all text compression is done by one of two HTTP content encodings: Gzip and Brotli. Both Brotli and Gzip are supported by virtually all browsers. On the server side, most popular servers like nginx and Apache can be configured to use Brotli and/or Gzip. The configuration is different depending on when the content is generated:

- Static content: this content can be precompressed. The web server can be set up to map the URLs to the appropriate compressed files, e.g. based on the filename extension. For example, CSS and JavaScript are often static content and so can be precompressed, which spares the web server from compressing them again for each request.

- Dynamically generated content: this has to be compressed on the fly for each request by the web server (or a plugin) itself. For example, HTML or JSON can be dynamic content in some cases.
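The static case can be illustrated with a small Python sketch: compress a file once, store it next to the original with a `.gz` suffix, and pick that variant when the client accepts Gzip. The helper names and the `.gz` naming convention are assumptions for this example; real web servers have their own configuration for serving precompressed files.

```python
import gzip
from pathlib import Path
from typing import Optional, Tuple


def precompress_gzip(path: Path, level: int = 9) -> Path:
    """Write a Gzip-compressed copy of `path` next to it (e.g. style.css.gz)."""
    target = path.with_name(path.name + ".gz")
    target.write_bytes(gzip.compress(path.read_bytes(), compresslevel=level))
    return target


def pick_static_variant(path: Path, client_accepts_gzip: bool) -> Tuple[Path, Optional[str]]:
    """Return (file to serve, Content-Encoding value or None)."""
    candidate = path.with_name(path.name + ".gz")
    if client_accepts_gzip and candidate.is_file():
        return candidate, "gzip"
    # No precompressed file, or the client cannot handle Gzip: serve as-is.
    return path, None
```

Because the compression happens ahead of time, the highest level can be used here without adding any latency to a request.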

When compressing text with Brotli or Gzip it is possible to select different compression levels. Higher compression levels will result in smaller compressed files, but take a longer time to compress. During decompression, CPU usage tends not to be higher for more heavily compressed files. Rather, files that are compressed with a higher compression level are slightly faster to decode.

Depending on the web server software used, compression needs to be enabled, and the configuration may be separate for precompressed and dynamically compressed content. For Apache, Brotli can be enabled with mod_brotli, and Gzip with mod_deflate. For nginx, instructions for enabling Brotli and for enabling Gzip are available as well.

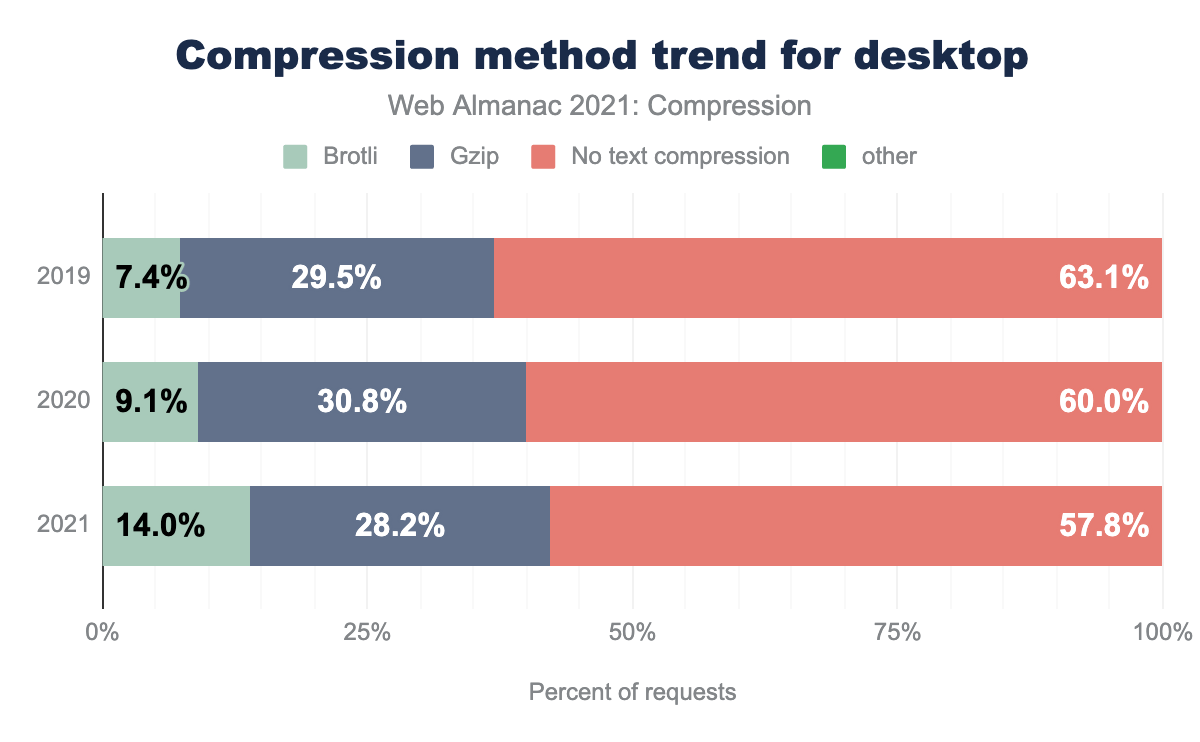

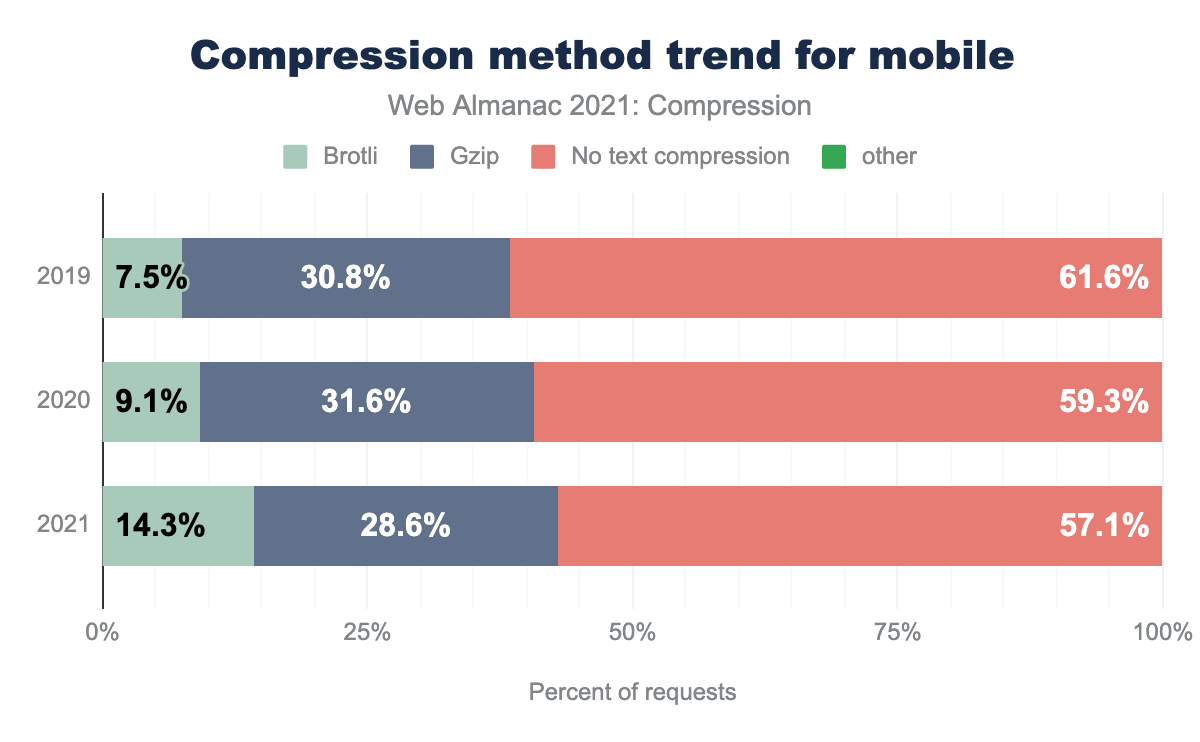

Trends in HTTP compression

The graph below shows the usage share trend of lossless compression from the HTTP Archive metrics over the last 3 years. The usage of Brotli has doubled since 2019, while the usage of Gzip has slightly decreased, and overall the use of HTTP compression is growing on desktop and on mobile.

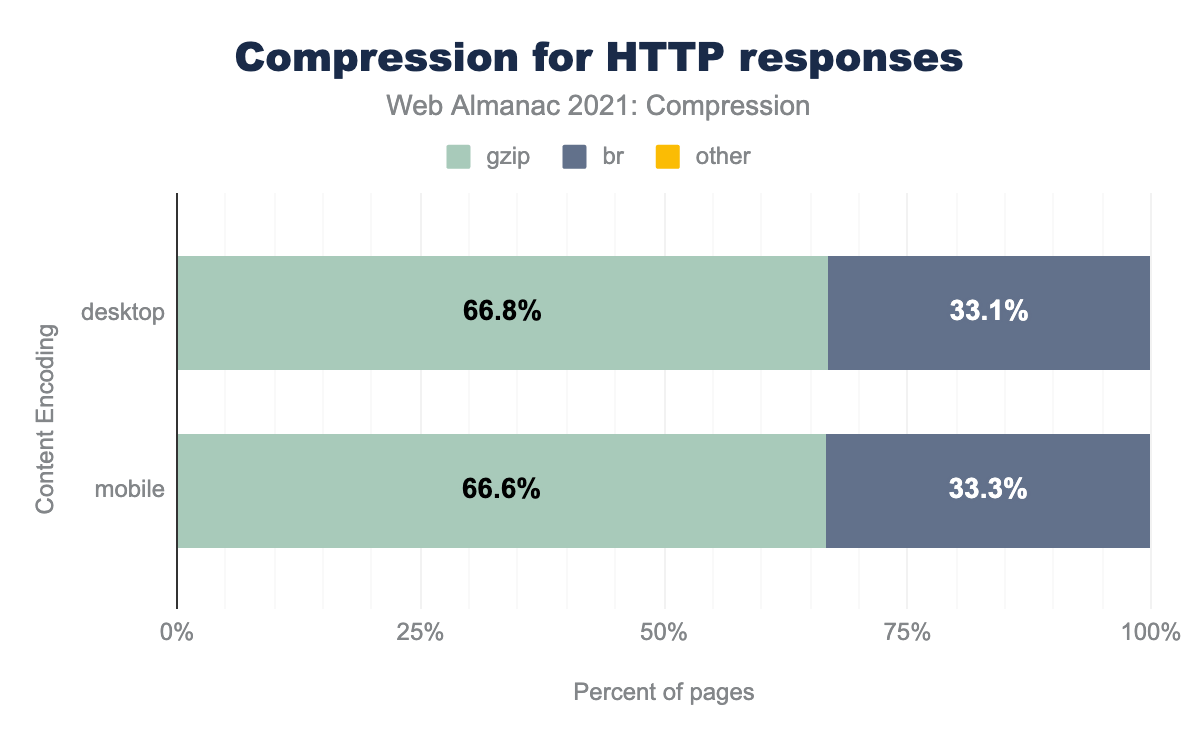

Of the resources that are served compressed, the majority are using either Gzip (66%) or Brotli (33%). The other compression algorithms are used infrequently. This split is virtually the same for desktop and mobile.

First-party vs third-party compression

Third parties have an impact on the user experience of a website. Historically, the amount of compression used by first parties compared with third parties was significantly different.

| Content-encoding | Desktop first-party | Desktop third-party | Mobile first-party | Mobile third-party |
|---|---|---|---|---|
| No text compression | 58.0% | 57.5% | 56.1% | 58.3% |
| Gzip | 28.1% | 28.4% | 29.1% | 28.1% |
| Brotli | 13.9% | 14.1% | 14.9% | 13.7% |
| Deflate | 0.0% | 0.0% | 0.0% | 0.0% |
| Other / Invalid | 0.0% | 0.0% | 0.0% | 0.0% |

From these results we can see that, compared to 2020, first-party content has caught up with third-party content in the use of compression, and the two now use compression in comparable ways. Usage of compression, and especially Brotli, has grown in both categories. Brotli compression has doubled in percentage for first-party content compared to a year ago.

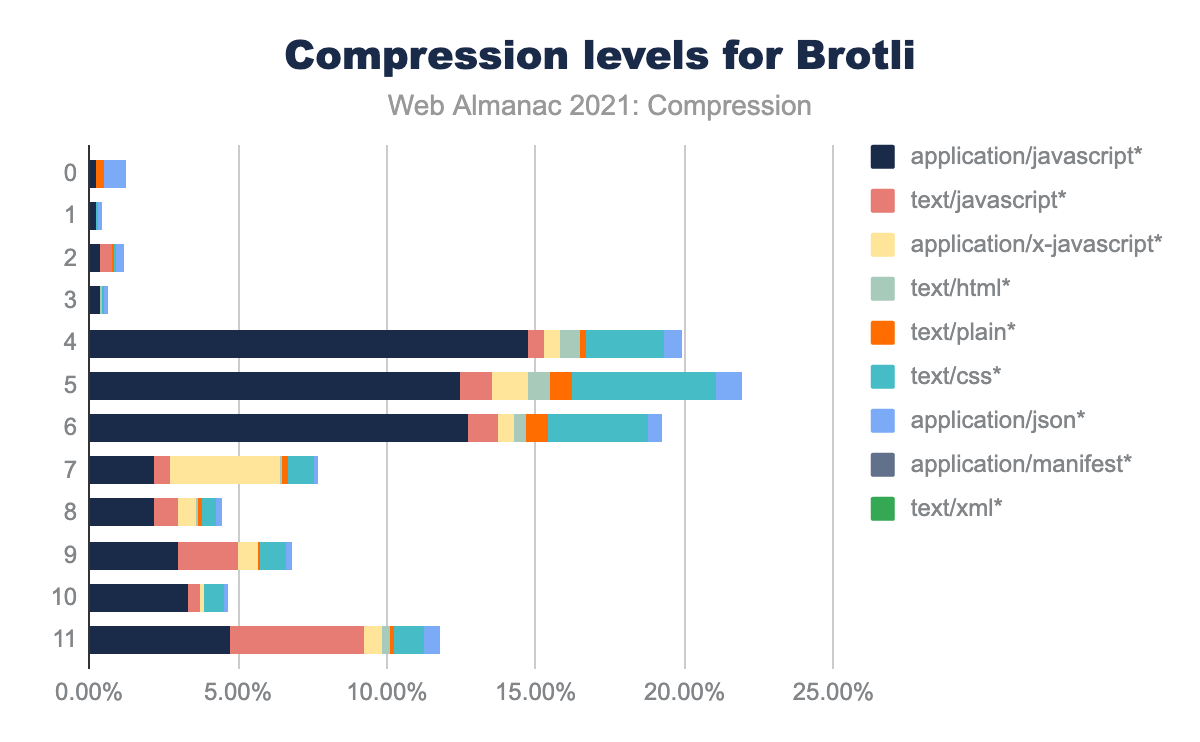

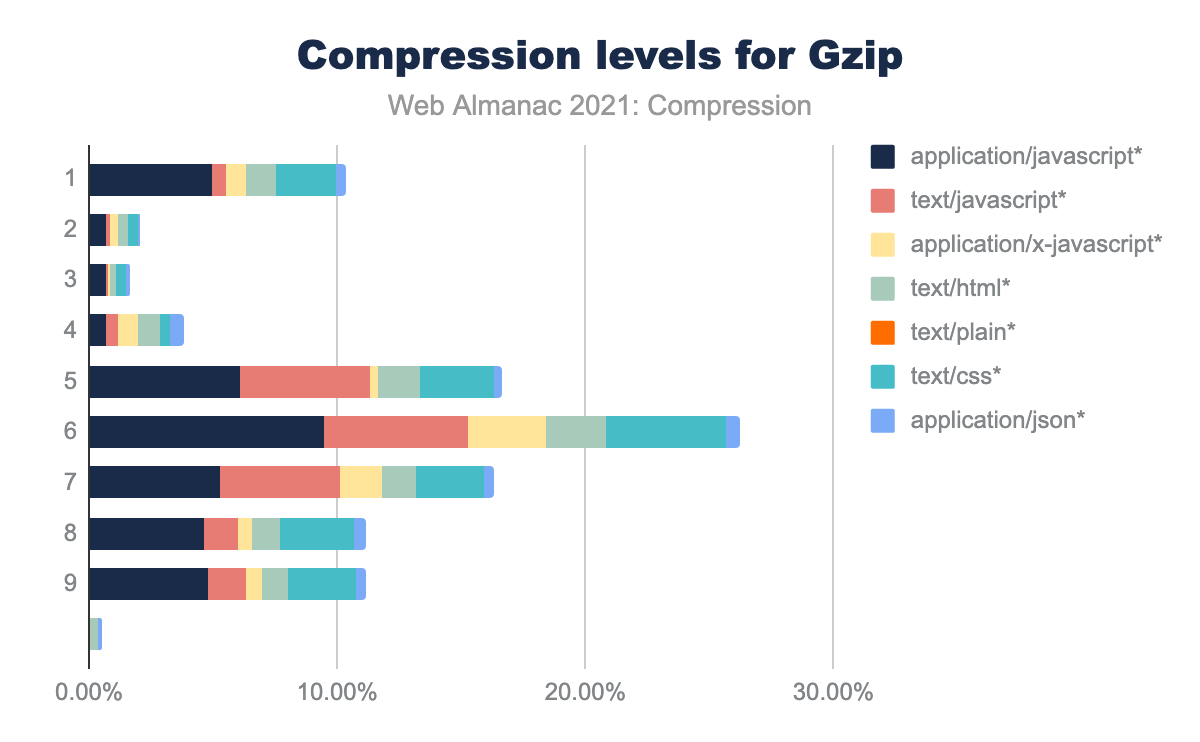

Compression levels

Compression level is a parameter given to the encoder to adjust the amount of effort that is applied to finding redundancy in the input, in order to achieve higher compression density. A higher compression level results in slower compression, but does not substantially affect the decompression speed, even making it slightly faster. For precompressed content, the time needed to compress the data has no effect on the user experience because it can be done beforehand. For dynamic content, the amount of time the CPU needs to compress the resource can be traded off against the time saved by sending the smaller, compressed data over the network.
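To get a feel for this trade-off on your own content, the following Python sketch compresses the same bytes at Gzip levels 1 through 9 using the standard library and records the output size and the time taken. The helper name, the `LevelResult` type and the sample data are made up for the example; absolute timings depend on the machine and the zlib build, so treat the numbers as indicative only.

```python
import gzip
import time
from typing import List, NamedTuple


class LevelResult(NamedTuple):
    level: int
    size: int        # compressed size in bytes
    seconds: float   # wall-clock time spent compressing


def measure_gzip_levels(data: bytes) -> List[LevelResult]:
    """Compress `data` at Gzip levels 1..9 and report size and time for each."""
    results = []
    for level in range(1, 10):
        start = time.perf_counter()
        compressed = gzip.compress(data, compresslevel=level)
        elapsed = time.perf_counter() - start
        results.append(LevelResult(level, len(compressed), elapsed))
    return results


if __name__ == "__main__":
    # Synthetic HTML-like sample; replace with a real response body.
    sample = b"".join(b"<li class='item'>Item number %d</li>\n" % i for i in range(20000))
    for r in measure_gzip_levels(sample):
        print(f"level {r.level}: {r.size} bytes in {r.seconds * 1000:.1f} ms")
```

For dynamic responses, the interesting question is whether the extra milliseconds spent at a higher level are repaid by the bytes saved on the network.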

Brotli encoding allows compression levels from 0 to 11, while Gzip uses levels 1 to 9. Higher levels can be achieved for Gzip as well, with a tool such as Zopfli. This is indicated as opt in the graph below.

We used the HTTP Archive summary_response_bodies data table to analyze the compression levels currently used on the web. This is estimated by re-compressing the responses with different compression level settings and taking the closest actual size, based on around 14,000 compressed responses that used Brotli, and 11,000 that used Gzip.
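The estimation approach described above can be sketched as follows for Gzip. This is only an illustration of the idea, not the analysis code used for this chapter: it relies on Python’s bundled zlib, so a response produced by a different encoder implementation may not match any level exactly, and ties are resolved in favor of the lowest level.

```python
import gzip


def estimate_gzip_level(body: bytes, observed_size: int) -> int:
    """Guess the Gzip level by re-compressing `body` and taking the closest size.

    `body` is the decompressed response; `observed_size` is the size in bytes
    of the compressed response as it was actually served.
    """
    sizes = {
        level: len(gzip.compress(body, compresslevel=level))
        for level in range(1, 10)
    }
    # min() returns the first key with the smallest distance, i.e. the lowest level on ties.
    return min(sizes, key=lambda level: abs(sizes[level] - observed_size))
```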

Plotting the number of instances of each level shows two peaks for the most commonly used Brotli compression levels, one around compression level 5, and another at the maximum compression level. Usage of compression levels below 4 is rare.

Gzip compression is applied largely around compression level 6, extending to level 9. The peak at level 1 might be explained by the fact that this is the default compression level of the popular web server nginx. For comparison, Gzip level 9 attempts thousands of redundancy matches and level 6 limits it to about a hundred, while level 1 limits redundancy matching to only four candidates and yields 15% worse compression.

In the figure, opt corresponds to optimizers such as Zopfli, and each compression level is broken down by content type. JavaScript is the most common content type in almost all cases. For Brotli, the proportion of JavaScript in the highest compression levels is higher than in the lower compression levels, while JSON is more common in the lower compression levels. For Gzip, the distribution of the JavaScript content type is roughly equal at all levels.

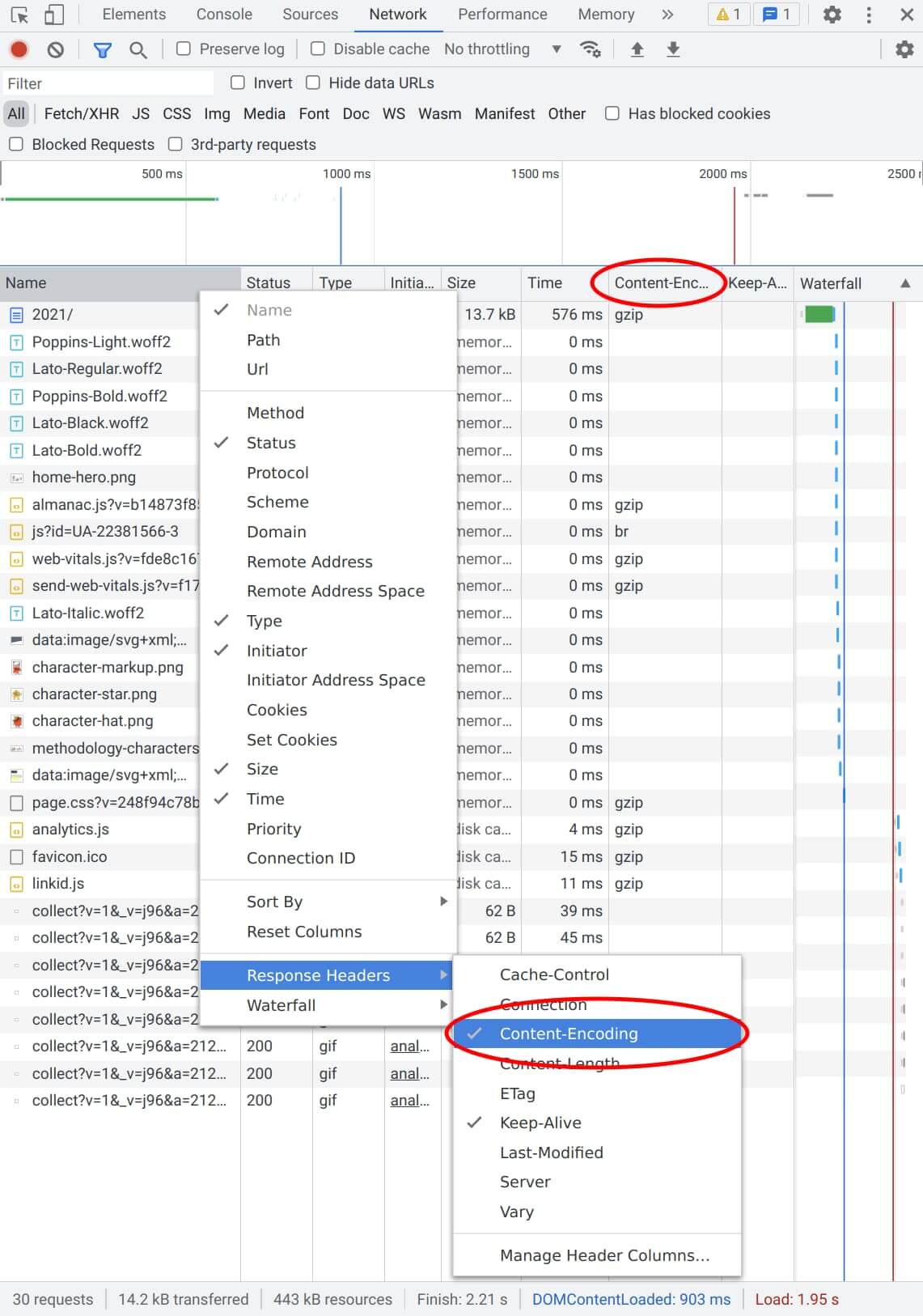

How to analyze compression on sites

To check which content of a website is using HTTP compression, the Firefox Developer Tools or the Chrome DevTools can be used. In the developer tools, open the Network tab and reload your site. A list of responses such as HTML, CSS, JavaScript, fonts and images should appear. To see which ones are compressed, you can check the content encoding in their response header. You can enable a column to easily see this for all responses at once. To do this, right click on the column titles, and in the menu navigate to Response Headers and enable Content-Encoding.

Responses that are Gzip compressed will show “gzip”, while those compressed with Brotli will show “br”. If the value is blank, no HTTP compression is used. For images this is normal, since these resources are already compressed on their own.

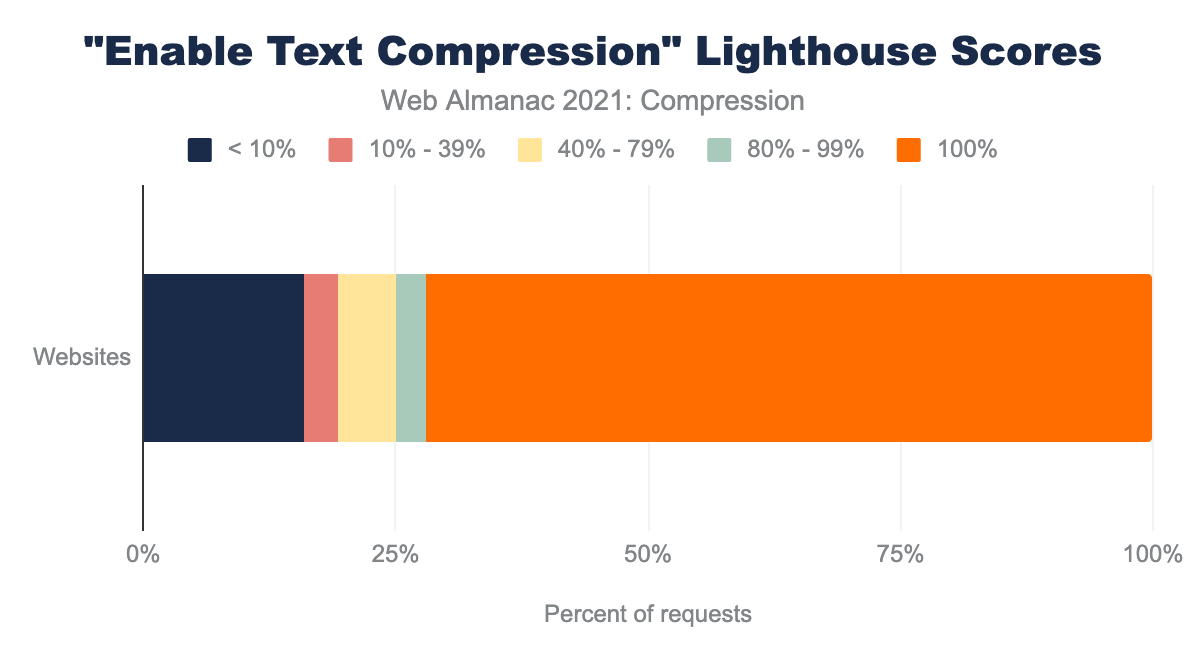
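The same check can be scripted. The sketch below, using only Python’s standard library, requests a URL while advertising Gzip and Brotli support and reports the Content-Encoding of the response. The function names are illustrative, and any URL you pass in is your own choice; `urllib` does not decompress the body automatically, which is fine here because only the header is inspected.

```python
from typing import Optional
from urllib.request import Request, urlopen

LABELS = {"gzip": "Gzip", "br": "Brotli"}


def describe_content_encoding(value: Optional[str]) -> str:
    """Turn a Content-Encoding header value into a human-readable label."""
    if value is None or not value.strip():
        return "no HTTP compression"
    token = value.strip().lower()
    return LABELS.get(token, f"other ({token})")


def fetch_content_encoding(url: str) -> str:
    """Request `url` with an Accept-Encoding header and describe the response encoding."""
    request = Request(url, headers={"Accept-Encoding": "gzip, br"})
    with urlopen(request) as response:
        return describe_content_encoding(response.headers.get("Content-Encoding"))
```

Calling `fetch_content_encoding` on the URLs of your HTML, CSS and JavaScript resources gives a quick overview of which ones are served compressed.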

A different tool that can analyze compression on a site is Google’s Lighthouse tool. It runs a series of audits, including the “Enable text compression” audit. This audit attempts to compress resources to check whether they can be reduced by at least 10% and 1,400 bytes. Depending on the score, it can show a compression recommendation in the results, with a list of the resources that can be compressed to benefit a website.
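A rough approximation of this kind of check, based only on the two thresholds mentioned above, could look like the Python sketch below. It is not Lighthouse’s actual implementation: the function name is made up, and Gzip at Python’s default level stands in for whatever compressor the audit really uses.

```python
import gzip


def would_benefit_from_compression(
    body: bytes, min_ratio: float = 0.10, min_bytes: int = 1400
) -> bool:
    """Return True if Gzip saves at least `min_ratio` of the size AND `min_bytes` bytes."""
    saved = len(body) - len(gzip.compress(body))
    return saved >= min_bytes and saved >= min_ratio * len(body)
```

Small or already-compressed responses fail both thresholds, which matches the intuition that compressing them is not worth the effort.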

The HTTP Archive runs Lighthouse audits for every mobile page, and from this data we observed that 72% of websites pass this audit. This is 2 percentage points lower than last year’s 74%, a slight drop despite more usage of text compression overall compared to last year.

How to improve on compression

Before thinking about how to compress content, it is often wise to reduce the content transmitted to begin with. One way of achieving this is to use so-called “minimizers”, such as HTMLMinifier, CSSNano, or UglifyJS.

After having the minimal form of the content to transmit, the next step is to ensure compression is enabled. You can verify it is enabled as highlighted in the previous section, and configure your web server if needed.

If using only Gzip compression (also known as Deflate or Zlib), adding support for Brotli can be beneficial. In comparison to Gzip, Brotli compresses to smaller files at the same speed and decompresses at the same speed.

You can choose a well-tuned compression level. What compression level is right for your application might depend on multiple factors, but keep in mind that a more heavily compressed text file does not need more CPU when decoding, so for precompressed assets there’s no drawback from the user’s perspective to set the compression levels as high as possible. For dynamic compression, we have to make sure that the user doesn’t have to wait longer for a more heavily compressed file, taking both the time it takes to compress as well as the potentially decreased transmission time into account. This difference is borne out when looking at compression level recommendations for both methods.

When using Gzip compression for precompressed resources, consider using Zopfli, which generates smaller Gzip-compatible files. Zopfli uses an iterative approach to find a very compact parsing, leading to 3-8% denser output, but taking substantially longer to compute, whereas Gzip uses a more straightforward but less effective approach. See this comparison between multiple compressors, and this comparison between Gzip and Zopfli that takes into account different compression levels for Gzip.

| | Brotli | Gzip |
|---|---|---|
| Precompressed | 11 | 9 or Zopfli |
| Dynamically compressed | 5 | 6 |

Improving the default settings on web server software would provide significant improvements to those who are not able to invest time into web performance. In particular, Gzip quality level 1 seems to be an outlier and would benefit from a default of 6, which compresses 15% better on the HTTP Archive summary_response_bodies data. Enabling Brotli by default instead of Gzip for user agents that support it would also provide a significant benefit.

Conclusion

The analysis of compression levels used on 28,000 HTTP responses reveals that about 0.5% of Gzip-compressed content uses more advanced compressors such as Zopfli, while a similar “optimal parsing” approach is used for 17% of Brotli-compressed content. This indicates that when more efficient methods are available, even if slower, a significant number of users will deploy these methods for their static content.

Usage of HTTP compression continues to grow, and especially Brotli has increased significantly compared to the previous year’s chapter. The number of HTTP responses using any text compression increased by 2%, while Brotli increased by over 4%. Despite the increase, we still see opportunities to use more HTTP compression by tweaking the compression settings of servers. You can benefit from taking a closer look at your own website’s responses and your server configuration. Where compression is not used, you may consider enabling it, and where it is used you may consider tweaking the compression methods towards higher compression levels, both for dynamic content such as HTML generated on the fly, and static content. Changing the default compression settings in popular HTTP servers could have a great impact for users.